Your AI model says a patient has a disease — but they don’t. Or it says they don’t — but they do. Both are errors, but they are very different kinds of errors. Understanding True Positive, False Positive, True Negative, and False Negative is the key to reading a confusion matrix correctly — and it is directly tested in CBSE Class 10 AI Unit 3: Evaluating Models.

What You’ll Learn

- What TP, FP, TN, and FN mean in plain English

- How to identify each type of outcome from any example

- How these four values connect to accuracy, precision, and recall

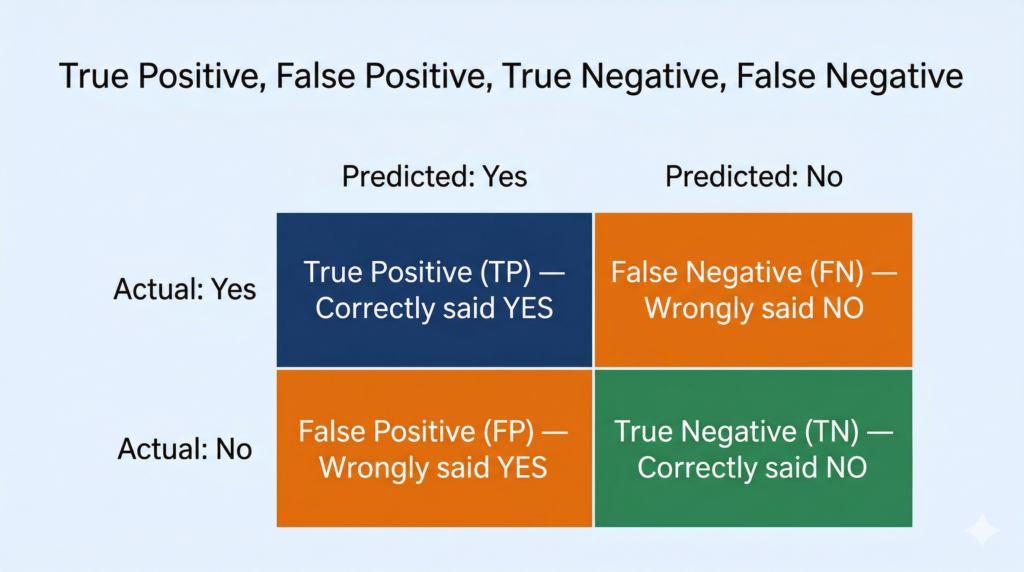

The Core Idea: Four Things a Classifier Can Do

Every AI classification model looks at an input and makes a yes/no prediction. For example: “Does this email contain spam? Yes or No.” “Does this X-ray show disease? Yes or No.”

When you compare the model’s prediction to the actual correct answer, exactly one of four things happens. Let’s use a spam email detector as our running example — simple, relatable, and easy to follow.

True Positive (TP) — Correctly Predicted YES

The model said YES. The actual answer is YES.

Spam example: The email IS spam. The model correctly flagged it as spam. ✅

This is the ideal outcome when the answer is “yes”. The model got it right.

Exam tip: “True” = the model’s prediction is correct. “Positive” = the model predicted the positive class (yes/spam/disease present).

True Negative (TN) — Correctly Predicted NO

The model said NO. The actual answer is NO.

Spam example: The email is NOT spam. The model correctly said it is not spam. ✅

Another ideal outcome — this time for the “no” case. The model correctly identified a legitimate email as safe.

Exam tip: “True” = correct prediction. “Negative” = predicted the negative class (no/not spam/disease absent).

False Positive (FP) — Wrongly Predicted YES

The model said YES. But the actual answer is NO.

Spam example: A completely normal email from your friend. The model incorrectly flagged it as spam. ❌

This is also called a Type I Error. The model made a “positive” prediction, but that prediction was false: the actual answer was no.

Real-world consequence: Your important email about exam results lands in the spam folder. Annoying, but not catastrophic.

False Negative (FN) — Wrongly Predicted NO

The model said NO. But the actual answer is YES.

Spam example: A phishing scam email (actual spam) that the model missed and let through to your inbox. ❌

This is also called a Type II Error. The model made a “negative” prediction, but it was false — the actual answer was yes.

Real-world consequence: The scam email reaches you. Potentially dangerous.
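The four outcomes above can be sketched in a few lines of Python. This is only an illustrative sketch: the function name `classify_outcome` and the small list of emails are made up for this example and are not part of any library or of the CBSE material.

```python
def classify_outcome(actual_is_spam: bool, predicted_spam: bool) -> str:
    """Return 'TP', 'FP', 'FN' or 'TN' for a single email."""
    if predicted_spam and actual_is_spam:
        return "TP"  # model said YES, actual answer is YES
    if predicted_spam and not actual_is_spam:
        return "FP"  # model said YES, actual answer is NO
    if not predicted_spam and actual_is_spam:
        return "FN"  # model said NO, actual answer is YES
    return "TN"      # model said NO, actual answer is NO


# Hypothetical results for five emails: (actual_is_spam, predicted_spam)
emails = [(True, True), (False, False), (False, True), (True, False), (True, True)]

# Tally how many emails fall into each of the four outcomes.
counts = {"TP": 0, "TN": 0, "FP": 0, "FN": 0}
for actual, predicted in emails:
    counts[classify_outcome(actual, predicted)] += 1

print(counts)  # {'TP': 2, 'TN': 1, 'FP': 1, 'FN': 1}
```

Counting the outcomes like this is all a confusion matrix does: it records how many predictions landed in each of the four boxes.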

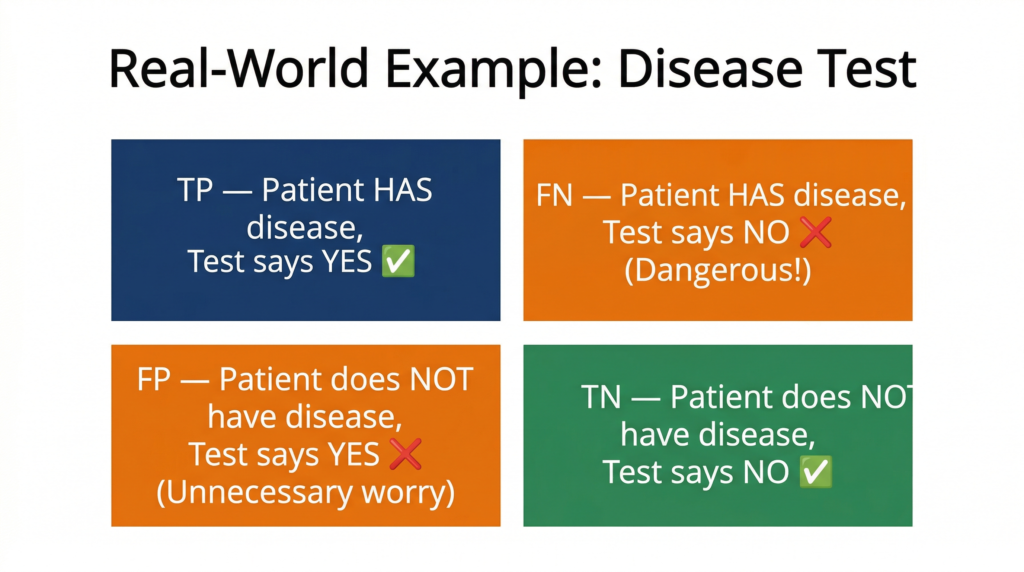

A Second Example: Disease Detection

Medical AI is a domain where these four terms matter enormously — and it is the type of example that appears frequently in CBSE exams.

Imagine an AI system that analyses blood test results and predicts whether a patient has diabetes.

| Outcome | What Happened | Label |
|---|---|---|
| Patient has diabetes. Model says YES. | Correct: caught the disease | TP ✅ |
| Patient has diabetes. Model says NO. | Missed a sick patient, which is dangerous | FN ❌ |
| Patient does NOT have diabetes. Model says YES. | Unnecessary alarm, more tests needed | FP ❌ |
| Patient does NOT have diabetes. Model says NO. | Correct: healthy patient confirmed | TN ✅ |

In this scenario, a False Negative is far more dangerous than a False Positive. Missing a diabetic patient means they go untreated. An unnecessary positive result means one extra test — inconvenient, but manageable.

This is exactly why you cannot judge a model on accuracy alone. A model that says “no disease” for everyone will have high accuracy if most people are healthy, yet it has zero True Positives: every patient who actually has the disease becomes a False Negative.
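To see this with numbers, here is a minimal sketch that assumes a hypothetical group of 100 patients, 5 of whom have diabetes. The figures are invented for illustration. Accuracy is the share of all predictions that were correct, (TP + TN) divided by the total.

```python
# Hypothetical screening group (numbers invented for illustration).
total_patients = 100
diabetic_patients = 5

# A model that answers "no disease" for everyone never predicts YES.
tp = 0                                   # it never catches a sick patient
fp = 0                                   # it never raises a false alarm
fn = diabetic_patients                   # every sick patient is missed
tn = total_patients - diabetic_patients  # every healthy patient is "correct"

# Accuracy = correct predictions / all predictions.
accuracy = (tp + tn) / (tp + tn + fp + fn)

print(f"Accuracy: {accuracy:.0%}")                               # Accuracy: 95%
print(f"Sick patients missed (FN): {fn} of {diabetic_patients}")  # 5 of 5
```

The model scores 95% accuracy while failing every single patient it was built to find.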

The Memory Trick — “T/F = Was the model right or wrong? P/N = What did it predict?”

Break every term into two parts:

- First word (True / False): Was the model correct (True) or incorrect (False)?

- Second word (Positive / Negative): What did the model predict — Yes (Positive) or No (Negative)?

| Term | Model Correct? | Model Predicted |
|---|---|---|
| True Positive | ✅ Yes | Positive (Yes) |
| True Negative | ✅ Yes | Negative (No) |
| False Positive | ❌ No | Positive (Yes) |
| False Negative | ❌ No | Negative (No) |
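The same two-part trick can be written as code. In this sketch the helper names `outcome_name` and `name_from_labels` are hypothetical, chosen only to mirror the two questions in the table.

```python
def outcome_name(model_was_correct: bool, model_predicted_yes: bool) -> str:
    """Build the term from its two parts, exactly as in the memory trick."""
    first_word = "True" if model_was_correct else "False"
    second_word = "Positive" if model_predicted_yes else "Negative"
    return f"{first_word} {second_word}"


def name_from_labels(actual_yes: bool, predicted_yes: bool) -> str:
    """The model is correct when its prediction matches the actual answer."""
    return outcome_name(actual_yes == predicted_yes, predicted_yes)


# Actual answer is YES, model said NO: wrong (False) + predicted No (Negative).
print(name_from_labels(actual_yes=True, predicted_yes=False))  # False Negative
```

Notice that the second word depends only on what the model predicted, never on the actual answer.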

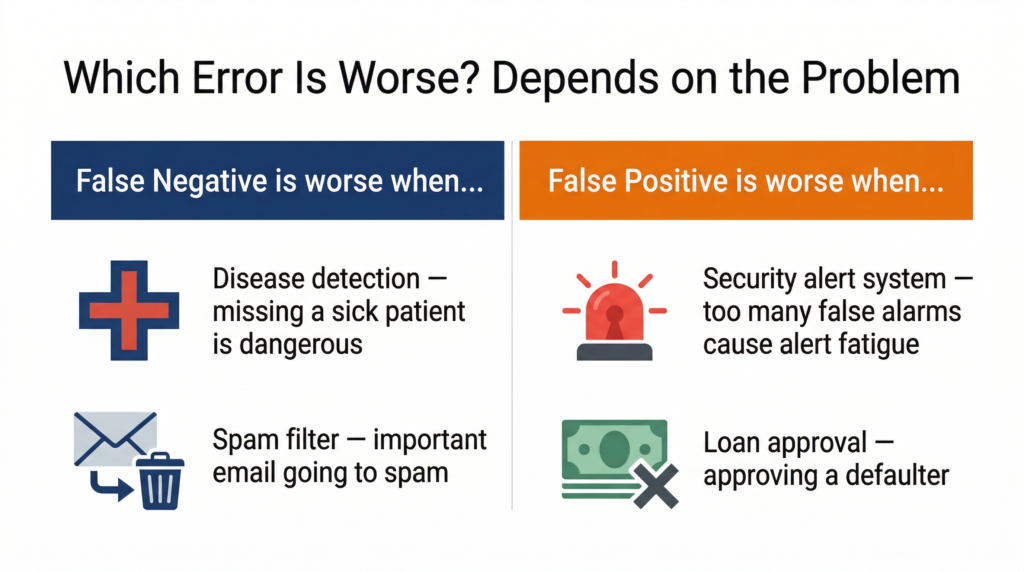

Which Error Is Worse? It Depends on the Problem

There is no universal answer. The cost of each error depends entirely on the context.

When False Negatives are more dangerous: In medical diagnosis (missing a disease is worse than a false alarm), fire detection systems (missing a fire is worse than a false alert), or fraud detection (missing actual fraud is worse than reviewing a legitimate transaction).

When False Positives are more dangerous: In legal systems (incorrectly accusing an innocent person), loan approval where “positive” means “approve” (approving a customer who will default), or security checkpoint systems (too many false alarms cause alert fatigue and reduce trust).

This is the real-world connection your CBSE examiner is looking for. When asked to evaluate a model, don’t just report accuracy — explain which type of error matters more for the problem at hand.
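Two standard metrics make this choice concrete. Precision, TP / (TP + FP), drops when False Positives rise. Recall, TP / (TP + FN), drops when False Negatives rise. The sketch below uses invented counts, and returning 0.0 when a metric is undefined is a convention assumed here, not a rule.

```python
def precision(tp: int, fp: int) -> float:
    """Of all the YES predictions, what fraction were actually YES?"""
    # Assumption: report 0.0 when the model made no YES predictions at all.
    return tp / (tp + fp) if (tp + fp) else 0.0


def recall(tp: int, fn: int) -> float:
    """Of all the actual YES cases, what fraction did the model catch?"""
    # Assumption: report 0.0 when there were no actual YES cases.
    return tp / (tp + fn) if (tp + fn) else 0.0


# Hypothetical counts for 100 predictions (invented for illustration).
tp, fp, tn, fn = 40, 10, 45, 5

accuracy = (tp + tn) / (tp + fp + tn + fn)

print(f"Accuracy:  {accuracy:.2f}")           # 0.85
print(f"Precision: {precision(tp, fp):.2f}")  # 0.80
print(f"Recall:    {recall(tp, fn):.2f}")     # 0.89
```

As a rule of thumb, watch recall when False Negatives are the costly error (disease, fire, fraud) and watch precision when False Positives are the costly error.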

📌 CBSE connection: CBSE Class 10 Unit 3 includes an activity “Decide the appropriate metric to evaluate the AI model” — this judgement about TP, FP, FN, TN is exactly the skill being tested.

Quick Revision Box

| Term | Short Definition |
|---|---|
| True Positive (TP) | Model predicted YES and the actual answer is YES |
| False Positive (FP) | Model predicted YES but the actual answer is NO (Type I Error) |
| True Negative (TN) | Model predicted NO and the actual answer is NO |
| False Negative (FN) | Model predicted NO but the actual answer is YES (Type II Error) |
| Type I Error | Another name for False Positive |
| Type II Error | Another name for False Negative |

Practice Questions

Q1 (2 marks): A hospital uses an AI model to detect cancer from X-ray images. The model incorrectly identifies a healthy patient as having cancer. What type of error is this? Explain its consequence for the patient.

Model Answer: This is a False Positive (FP) — the model predicted cancer (positive) but the actual answer was no cancer (so the prediction is false). A False Positive in this context means a healthy patient will undergo unnecessary further tests and experience significant anxiety. While not as dangerous as a False Negative (missing actual cancer), it is still a costly error that affects patient wellbeing and wastes medical resources.

Q2 (MCQ): An AI spam filter lets a phishing scam email pass through to the user’s inbox without flagging it. This outcome is best described as:

a) True Positive b) True Negative c) False Positive d) False Negative ✅

Explanation: The email IS spam (actual = yes/positive), but the model said it is NOT spam (predicted = no/negative). The prediction is wrong (False) and it predicted Negative → False Negative.

FAQ

Q1: I keep mixing up False Positive and False Negative. Is there a better way to remember them?

Try this: focus only on the second word to remember what the model predicted. “Positive” means the model said YES. “Negative” means the model said NO. Then the first word tells you if it was right: “True” = correct, “False” = wrong. So “False Positive” = model said YES but was wrong. “False Negative” = model said NO but was wrong. Build a quick example in your head each time to confirm.

Q2: If a model has zero False Positives, does that mean it is a good model?

Not necessarily. A model can have zero False Positives by predicting “No” for everything — then it never wrongly says “Yes,” but it also never correctly catches any positive case. Its False Negative count would be very high. This is why you need to look at all four values together, not any single one in isolation.

Q3: Not in your syllabus but good to know — What is the ROC curve?

In advanced machine learning, data scientists plot something called an ROC (Receiver Operating Characteristic) curve, which shows how a model’s True Positive rate and False Positive rate change as you adjust its decision threshold. It helps compare multiple models on the same problem. You may encounter this in Class 12 and beyond. For now, understanding TP, FP, TN, FN at the confusion matrix level is exactly what you need.
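If you are curious, here is a rough sketch of where the points on such a curve come from. The scores and labels are invented, and the function name `rates_at_threshold` is hypothetical. The True Positive rate is TP / (TP + FN) and the False Positive rate is FP / (FP + TN).

```python
def rates_at_threshold(scores, actual, threshold):
    """Return (true_positive_rate, false_positive_rate) for one threshold."""
    tp = fp = tn = fn = 0
    for score, is_positive in zip(scores, actual):
        predicted_yes = score >= threshold  # model says YES at or above threshold
        if predicted_yes and is_positive:
            tp += 1
        elif predicted_yes and not is_positive:
            fp += 1
        elif not predicted_yes and is_positive:
            fn += 1
        else:
            tn += 1
    # Assumes the data contains at least one actual YES and one actual NO.
    return tp / (tp + fn), fp / (fp + tn)


# Hypothetical model scores (higher = more confident YES) and actual answers.
scores = [0.9, 0.8, 0.6, 0.4, 0.3, 0.1]
actual = [True, True, False, True, False, False]

# Each threshold gives one point on the ROC curve.
for threshold in (0.2, 0.5, 0.7):
    tpr, fpr = rates_at_threshold(scores, actual, threshold)
    print(f"threshold={threshold}: TPR={tpr:.2f}, FPR={fpr:.2f}")
```

A lower threshold makes the model say YES more often, so it catches more real positives but also raises more false alarms.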