Every CBSE AI examiner knows which students actually think about AI ethics — and which ones recited from a guide. Here is how to be the first kind.

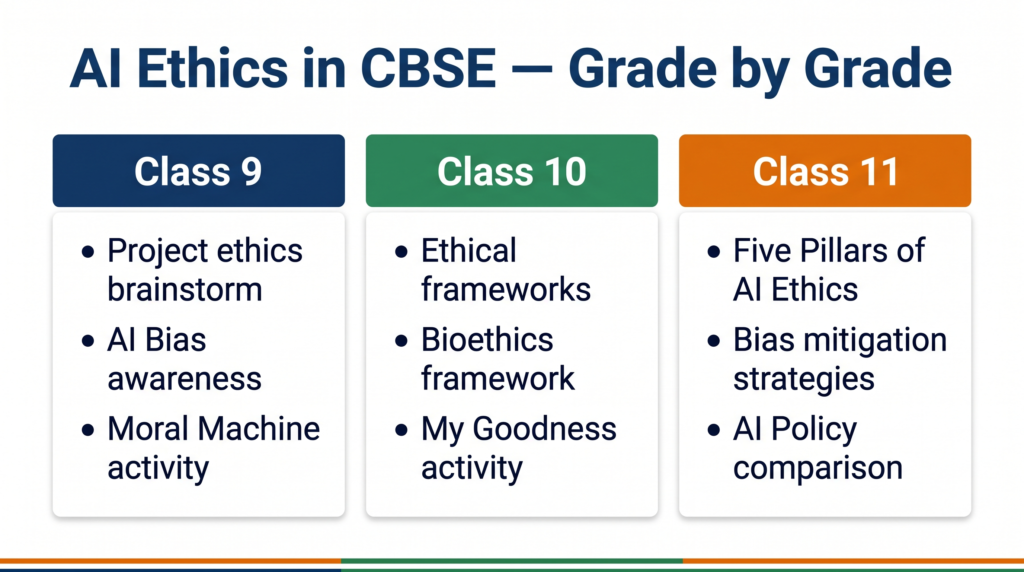

AI ethics is not a soft topic you can bluff through. In Class 9 and 10, you are expected to identify ethical issues in AI projects you design yourself. In Class 11, you are assessed on five specific pillars, bias mitigation strategies, and comparative AI policy analysis — a full Unit 8 with 4 theory marks and 4 practical marks on the line. This guide covers everything across all three grades, in the depth your examiner expects.

What You Will Learn

- The ethical questions your CBSE AI project must address — and exactly where to document them

- Why the Five Pillars of AI Ethics are the single most testable framework in Class 11 Unit 8

- How AI bias works, where it comes from, and the strategies you need to know to mitigate it

- What ethical frameworks mean for Class 10 — and how Bioethics applies to real AI systems in India

- The kind of answer that earns full marks in a viva on AI ethics versus one that earns half

What Is AI Ethics? A Starting Point for Class 9

Think about a system that decides whether a student gets a scholarship, whether a patient gets a hospital bed, or whether a loan gets approved. In all three cases, an AI system could be making the decision — analysing data and producing an outcome that affects a real person’s life.

AI ethics is the field that asks: is this decision fair? Is it transparent? Who is responsible if it goes wrong? Can the person affected understand and challenge it?

These are not abstract philosophical questions. They are the practical design constraints that every responsible AI project must address — including yours.

📌 Class 9 — Your Entry Point: In Class 9, you encounter AI ethics during the Project Cycle unit. When you scope your problem using the 4Ws canvas, you are expected to brainstorm the ethical issues involved in the problem you have chosen. This is a listed learning outcome in the CBSE 2025-26 syllabus. Write it down in your project documentation — it shows your examiner you understood the full scope of your project.

Ethical Frameworks of AI — What Class 10 Students Need to Know

An ethical framework is a structured set of principles for deciding whether an action or system is morally acceptable. In the context of AI, ethical frameworks help developers, policymakers, and users make decisions about how AI should behave — especially when different values are in tension.

The CBSE Class 10 curriculum (Unit 1: Revisiting AI Project Cycle and Ethical Frameworks for AI) introduces the concept of ethical frameworks and explores their types.

Types of Ethical Frameworks

There are several major traditions in ethical thinking that apply to AI:

Consequentialist frameworks evaluate actions by their outcomes. An AI system is ethical if the total benefit it produces outweighs the harm — across all people affected. This framework is commonly used in public health AI, where trade-offs must be made across large populations.

Deontological frameworks focus on rules and duties rather than outcomes. An action is ethical if it follows a set of correct rules, regardless of the result. This is relevant when designing AI systems that must always tell the truth or always seek informed consent — even when lying or skipping consent might produce a better outcome in one particular case.

Virtue ethics frameworks ask what a person of good character would do. Applied to AI, this asks whether the team building the system is acting with integrity, care, and responsibility — not just whether the algorithm produces a correct output.

Bioethics is a specific ethical framework developed in the context of medicine and healthcare. It is built on four principles: autonomy (the patient’s right to make informed decisions), beneficence (acting in the patient’s best interest), non-maleficence (do no harm), and justice (fair distribution of healthcare resources). Because AI is increasingly used in healthcare — diagnostic tools, triage systems, treatment recommendations — Bioethics has become one of the most relevant AI ethical frameworks in practice.

📌 Class 10 — Exam Focus: CBSE Class 10 Unit 1 is worth 7 marks in theory. Questions on ethical frameworks typically ask you to: (a) define an ethical framework and explain why it is needed for AI, (b) describe the Bioethics framework and name its four principles, or (c) apply an ethical framework to a given case study scenario. Knowing the names and definitions of at least two frameworks — with Bioethics as your anchor — is sufficient for full marks.

India Context: Bioethics in Action

The Ayushman Bharat Digital Mission (ABDM), which began as the National Digital Health Mission, links patient records, diagnostic reports, and hospital systems across India, and AI tools for diagnosis and triage can draw on this kind of linked health data. Every time an AI system recommends a treatment or flags a high-risk patient, questions of autonomy (did the patient consent to their data being used?), beneficence (does this recommendation help the patient?), non-maleficence (could the AI be wrong in a harmful way?), and justice (does the system work equally well for urban and rural patients, across languages and income levels?) are all directly at stake. The Bioethics framework exists precisely to navigate decisions like these.

The Five Pillars of AI Ethics — Class 11 Core Content

📌 Class 11 — Unit 8: AI Ethics and Values (4 theory marks + 4 practical marks)

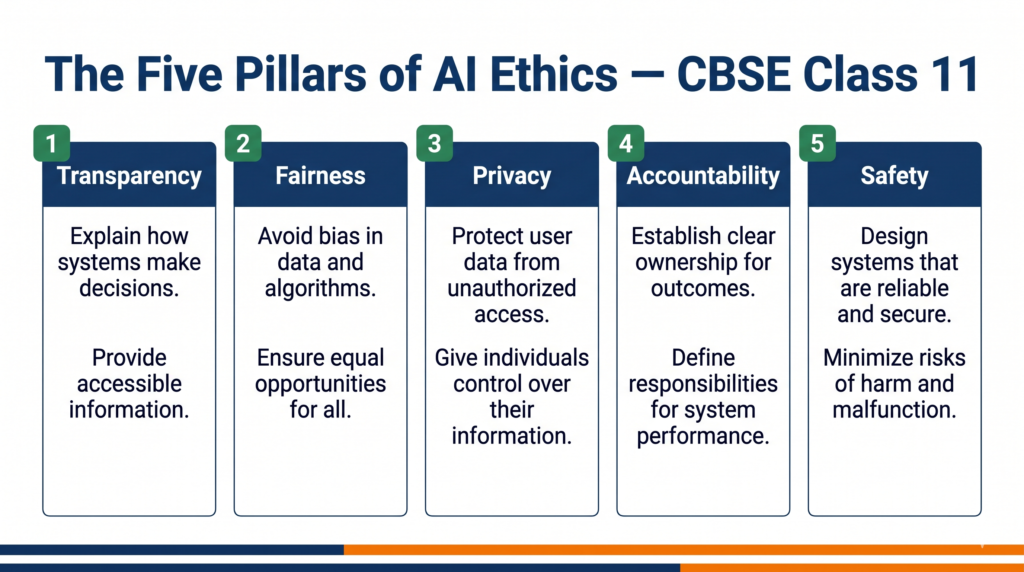

The CBSE Class 11 curriculum names five specific pillars of AI ethics. These are the central exam topic of Unit 8. You are expected to demonstrate understanding of each pillar and its ethical implications.

Pillar 1: Transparency

Transparency means that AI systems should be explainable — people affected by AI decisions should be able to understand, at least in general terms, why the system produced a particular output.

An AI system that approves or rejects a credit card application without any explanation is a transparency failure. The person denied credit has no way to know whether the decision was based on valid financial data or on a biased pattern in the training set. In India, UIDAI’s Aadhaar authentication system has faced transparency questions: when a biometric match fails, the citizen typically receives no explanation of why — only a rejection. Transparency requires building systems that can account for their decisions.
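
To make “account for their decisions” concrete, here is a minimal Python sketch of a scoring model that explains itself. The feature names, weights, and approval threshold are hypothetical, invented for illustration; they do not come from any real bank or from UIDAI. Because each feature adds simply weight × value to the score, the system can report which factors pulled an applicant below the cut-off.

```python
# Hypothetical weights for a toy linear credit score (illustrative only).
WEIGHTS = {
    "on_time_payment_ratio": 4.0,  # share of bills paid on time, 0 to 1
    "credit_utilisation": -3.0,    # share of the credit limit in use, 0 to 1
    "years_of_history": 0.5,       # length of credit history in years
}
APPROVAL_THRESHOLD = 3.0  # hypothetical cut-off


def explain_decision(applicant):
    """Return (approved, score, reasons): reasons lists the features that
    lowered the score, the most damaging first."""
    contributions = {name: w * applicant[name] for name, w in WEIGHTS.items()}
    score = sum(contributions.values())
    approved = score >= APPROVAL_THRESHOLD
    # Negative contributions are the factors that counted against the applicant.
    reasons = sorted(
        (name for name, c in contributions.items() if c < 0),
        key=lambda name: contributions[name],
    )
    return approved, round(score, 2), reasons


approved, score, reasons = explain_decision(
    {"on_time_payment_ratio": 0.9, "credit_utilisation": 0.8, "years_of_history": 1}
)
print(approved, score, reasons)  # prints: False 1.7 ['credit_utilisation']
```

A rejected applicant here would be told that high credit utilisation lowered their score, which is something they can check and act on. Real credit models are usually far more complex, which is exactly why explainability takes deliberate design effort.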

Pillar 2: Justice and Fairness

A fair AI system produces outcomes that are equitable across different groups — it does not systematically disadvantage people based on gender, caste, religion, language, disability, or any other protected characteristic.

Fairness in AI is technically difficult because the data used to train AI systems often reflects historical inequalities. A hiring model trained on past recruitment data from a company that historically hired fewer women will replicate that pattern — unless the system is deliberately designed to correct for it.

In India, Bhashini — the government’s language AI initiative — has had to confront fairness across 22 scheduled languages and hundreds of dialects. An NLP model that works well in Hindi and English but fails in Santali or Manipuri is not a fair system, even if no one designed it to be unfair.

Pillar 3: Privacy

Privacy means that AI systems must collect, store, and use personal data only with the knowledge and meaningful consent of the individuals involved — and must protect that data from misuse.

AI systems are data-hungry. A recommendation engine needs browsing history. A health diagnostic needs medical records. A facial recognition system needs images. In each case, the question is: did the person agree to this? Do they understand how their data is being used? Can they opt out?

In India, the Digital Personal Data Protection Act 2023 (DPDPA) establishes legal obligations around consent and data use. Understanding this is part of understanding AI ethics — law and ethics are not the same thing, but in this case they overlap directly.
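
Consent can be enforced in code by checking, before any processing, whether each person agreed to that specific use of their data. The sketch below assumes a hypothetical record format with a `consented_purposes` field; it illustrates purpose-specific consent and is not a summary of what the DPDPA legally requires.

```python
from dataclasses import dataclass, field


@dataclass
class UserRecord:
    user_id: str
    browsing_history: list
    # Purposes this user explicitly agreed to (hypothetical field name).
    consented_purposes: set = field(default_factory=set)


def records_usable_for(records, purpose):
    """Keep only records whose owners consented to this specific purpose."""
    return [r for r in records if purpose in r.consented_purposes]


users = [
    UserRecord("u1", ["shoes", "phones"], {"recommendations"}),
    UserRecord("u2", ["books"], {"recommendations", "advertising"}),
    UserRecord("u3", ["laptops"]),  # no consent on file
]

print([u.user_id for u in records_usable_for(users, "advertising")])      # ['u2']
print([u.user_id for u in records_usable_for(users, "recommendations")])  # ['u1', 'u2']
```

Note the default: a record with no consent on file (`u3`) is excluded, rather than included until someone objects.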

Pillar 4: Accountability

Accountability means that when an AI system causes harm, there must be a clear answer to the question: who is responsible?

This is harder than it sounds. AI systems involve data providers, algorithm designers, model trainers, product developers, deployment teams, and end users. When a self-driving car injures a pedestrian, or a medical AI misdiagnoses a patient, or a credit-scoring model unfairly denies a loan — who bears responsibility? Accountability as an ethical pillar requires building AI systems with clear ownership, audit trails, and redress mechanisms from the start.
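
Audit trails are the most directly codeable part of accountability. The sketch below is a minimal illustration; the model version string, the owning team name, and the log fields are all hypothetical. Each decision is stored with the model version, a timestamp, a fingerprint of the input, and the team responsible, so a disputed decision can be traced back to a specific model and owner.

```python
import hashlib
import json
from datetime import datetime, timezone

# In a real deployment this would be durable, append-only storage, not a list.
AUDIT_LOG = []


def log_decision(model_version, owner, inputs, output):
    """Record which model decided, who owns it, and what it saw, for later review."""
    # A SHA-256 fingerprint lets a reviewer confirm which input produced the
    # decision without copying the raw values into the log itself.
    fingerprint = hashlib.sha256(
        json.dumps(inputs, sort_keys=True).encode("utf-8")
    ).hexdigest()
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "owner": owner,
        "input_sha256": fingerprint,
        "output": output,
    }
    AUDIT_LOG.append(entry)
    return entry


entry = log_decision(
    "loan-model-v3", "credit-risk-team",
    {"income": 42000, "loan_amount": 150000}, "rejected",
)
print(entry["model_version"], entry["owner"], entry["output"])
```

With a log like this, the question “who is responsible?” has a recorded starting point for every single decision.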

Pillar 5: Safety and Reliability

An AI system is safe if it behaves predictably and correctly under normal conditions — and fails gracefully rather than dangerously under abnormal conditions. Reliability means the system performs consistently across the full range of users and contexts it is deployed in.

An AI system used in air traffic control, medical diagnosis, or structural engineering must meet extremely high safety standards. Errors in these contexts can cost lives. Safety as an ethical pillar requires rigorous testing, adversarial evaluation, and conservative deployment — never releasing a system before its failure modes are understood.
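
“Failing gracefully” can be shown in a few lines: when an input falls outside what the system was tested on, it refuses and explains, rather than producing a confident guess. This is a minimal sketch; the validated age range and the placeholder risk rule are hypothetical, not medical guidance.

```python
# Hypothetical: the model was only trained and tested on adults aged 18 to 80.
VALID_AGE_RANGE = (18, 80)


def model_predict(systolic_bp):
    """Placeholder for a trained model; this one-line rule is invented."""
    return "high risk" if systolic_bp >= 140 else "low risk"


def safe_predict(age, systolic_bp):
    """Return a prediction only for inputs inside the validated range;
    otherwise refuse and say why, instead of guessing."""
    low, high = VALID_AGE_RANGE
    if not low <= age <= high:
        return {"status": "refused",
                "reason": f"age {age} is outside the validated range {low}-{high}"}
    if systolic_bp <= 0:
        return {"status": "refused", "reason": "blood pressure reading is invalid"}
    return {"status": "ok", "prediction": model_predict(systolic_bp)}


print(safe_predict(45, 150))  # {'status': 'ok', 'prediction': 'high risk'}
print(safe_predict(8, 150))   # refused: a child is outside the validated range
```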

Understanding AI Bias — Sources and Awareness

Bias in AI is not the same as a coding error. It is a systematic distortion in the output of an AI system — a pattern where certain groups or outcomes are consistently treated differently from others.

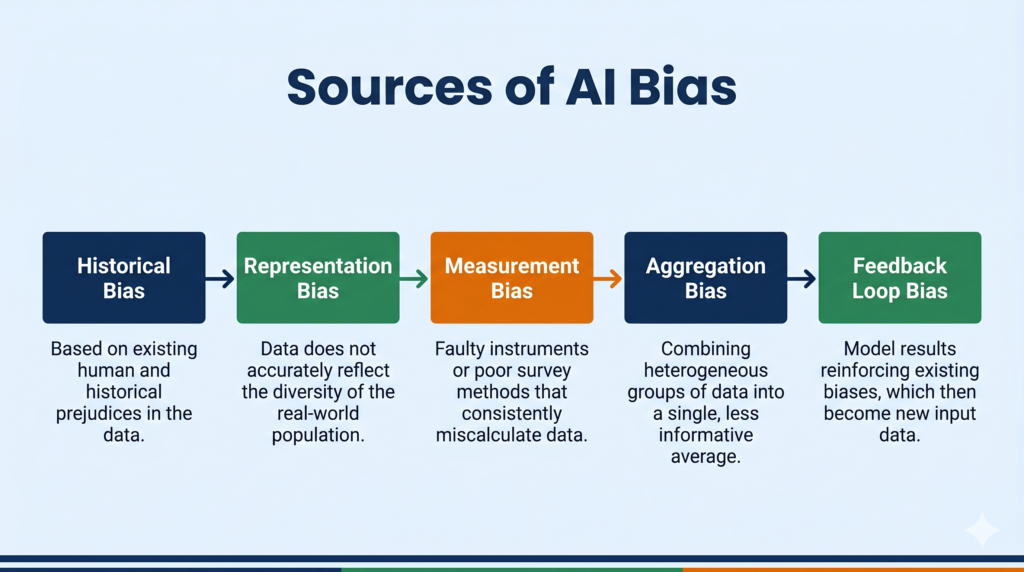

Where Does Bias Come From?

Historical bias arises when training data reflects past discrimination. A resume-screening AI trained on past hiring decisions will replicate past hiring biases — including gender or caste-based patterns — unless deliberately corrected.
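
A tiny Python sketch shows why historical bias is replicated automatically. The “model” below does nothing more than learn each group's past hiring rate from invented records; real learning algorithms are more sophisticated, but any of them that can see the group attribute, or a proxy for it, can pick up the same pattern.

```python
from collections import defaultdict

# Invented past hiring records: (gender, years_of_experience, was_hired)
history = [
    ("M", 5, True), ("M", 3, True), ("M", 2, False), ("M", 4, True),
    ("F", 5, False), ("F", 4, True), ("F", 6, False), ("F", 3, False),
]


def learn_hire_rates(records):
    """'Train' by memorising how often each group was hired in the past."""
    hired, total = defaultdict(int), defaultdict(int)
    for group, _experience, was_hired in records:
        total[group] += 1
        hired[group] += int(was_hired)
    return {group: hired[group] / total[group] for group in total}


print(learn_hire_rates(history))  # {'M': 0.75, 'F': 0.25}
```

The women in this invented data have more experience on average than the men (4.5 years versus 3.5), yet a system scoring new applicants by these learned rates would rank every woman lower. The model is faithful to the past, not fair.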

Representation bias arises when certain groups are underrepresented in training data. Facial recognition systems have been shown to perform significantly worse on darker skin tones because training datasets contained a disproportionate number of lighter-skinned faces. A system trained mostly on certain demographics fails the others.

Measurement bias arises when the features selected to represent a real-world concept are themselves imperfect proxies. Using a person’s postal code as a proxy for creditworthiness, for example, introduces bias because postal codes in India correlate with caste and income history — not just current financial behaviour.
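
One practical check for this kind of proxy problem is to ask how well the proxy alone predicts a protected attribute. The sketch below uses invented data, with placeholder postal codes and group labels “A” and “B”. If guessing each person's group from their postal code beats the baseline of always guessing the most common group, the proxy is carrying group information into the model, even if the group column itself is deleted.

```python
from collections import Counter, defaultdict

# Invented applicant data: (postal_code, group). "A" and "B" are placeholder labels.
applicants = [
    ("110001", "A"), ("110001", "A"), ("110001", "A"), ("110001", "B"),
    ("110093", "B"), ("110093", "B"), ("110093", "B"), ("110093", "A"),
]


def proxy_strength(rows):
    """Share of people whose group is guessed correctly from the postal code
    alone, by predicting the most common group in each postal code."""
    by_code = defaultdict(Counter)
    for code, group in rows:
        by_code[code][group] += 1
    correct = sum(counts.most_common(1)[0][1] for counts in by_code.values())
    return correct / len(rows)


# Baseline: always guess the single most common group overall.
baseline = max(Counter(group for _, group in applicants).values()) / len(applicants)
print(proxy_strength(applicants), baseline)  # 0.75 0.5
```

Here the postal code lifts the guess from 50% to 75% correct, so a credit model given postal codes could treat the two groups differently without ever being told anyone's group.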

Aggregation bias arises when a model trained on a general population is applied to a specific subgroup whose behaviour differs. A health risk model trained on general population data may give poor predictions for a specific regional or demographic group.

Feedback loop bias arises when a biased AI system produces outputs that become part of the next training dataset — reinforcing the original bias. Predictive policing systems that flag high-crime areas generate more policing activity in those areas, which generates more recorded crime, which reinforces the original flag.
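
A toy simulation shows how the loop reinforces itself. Everything here is a hypothetical assumption: two areas with the same true crime rate, a “hotspot” that receives 80% of patrols, and crime that is recorded only where officers are present to see it.

```python
def simulate_feedback_loop(rounds, true_crime_rate=0.1, patrols=100, hotspot_share=0.8):
    """Toy predictive-policing loop over two areas with the SAME true crime rate.
    Returns each area's share of all recorded crime after `rounds` rounds."""
    recorded = {"Area A": 2.0, "Area B": 1.0}  # Area A starts with one extra report
    for _ in range(rounds):
        # The "model" flags whichever area has more recorded crime as the hotspot.
        hotspot = max(recorded, key=recorded.get)
        for area in recorded:
            share = hotspot_share if area == hotspot else 1 - hotspot_share
            # Crime is only recorded where officers are present to observe it.
            recorded[area] += patrols * share * true_crime_rate
    total = sum(recorded.values())
    return {area: round(count / total, 2) for area, count in recorded.items()}


print(simulate_feedback_loop(rounds=0))   # {'Area A': 0.67, 'Area B': 0.33}
print(simulate_feedback_loop(rounds=20))  # {'Area A': 0.8, 'Area B': 0.2}
```

Both areas have identical true crime, yet Area A's share of recorded crime rises from 0.67 to 0.8, because the data the system learns from is shaped by where the system sent officers in the first place.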

📌 Class 11 Exam Note: You are expected to be able to: (a) define bias in AI, (b) name and explain at least two sources of bias, and (c) describe at least two strategies for mitigating bias. All three are explicit learning outcomes in Class 11 Unit 8.

Mitigating Bias in AI Systems — Strategies

Identifying bias is necessary. Knowing what to do about it is what Unit 8 actually tests.

Strategy 1: Diverse and representative data collection

The most direct intervention is to ensure training data represents the full range of people and contexts the system will be applied to. This means active curation — not just using whatever data is easily available. For a facial recognition system intended for use across India, training data must include balanced representation across skin tones, regional facial structures, lighting conditions, and age groups.
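
Active curation starts with measurement. This sketch compares each group's share of a training set with its share of the population the system is meant to serve, and flags shortfalls. The simplified skin-tone categories, the counts, the target shares, and the 5-percentage-point tolerance are all invented for illustration, not real figures for India.

```python
def representation_gaps(dataset_counts, population_share, tolerance=0.05):
    """Return groups whose share of the training data falls short of their
    share of the target population by more than `tolerance`."""
    total = sum(dataset_counts.values())
    gaps = {}
    for group, target in population_share.items():
        actual = dataset_counts.get(group, 0) / total
        if target - actual > tolerance:
            gaps[group] = round(target - actual, 2)  # shortfall as a fraction
    return gaps


# Invented numbers for illustration only.
faces_per_group = {"lighter": 7000, "medium": 2500, "darker": 500}
target_share = {"lighter": 0.35, "medium": 0.40, "darker": 0.25}
print(representation_gaps(faces_per_group, target_share))
# {'medium': 0.15, 'darker': 0.2}
```

The output tells the team where to collect more data: here darker-skinned faces make up 5% of the dataset against an assumed 25% of users.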

Strategy 2: Bias auditing before deployment

A bias audit evaluates a trained model for differential performance across demographic groups before it goes live. This involves running the model on test datasets that represent different subgroups and measuring whether error rates, false positives, and false negatives are consistent — or whether certain groups are disproportionately misclassified.
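
A basic bias audit needs nothing more than the test results split by group. The sketch below computes the false positive rate and false negative rate for each group in plain Python, so no particular library is assumed; the labels and the urban/rural grouping are invented. (Open-source libraries such as Fairlearn provide similar per-group metrics.)

```python
def audit_by_group(y_true, y_pred, groups):
    """Return each group's false positive rate (FPR) and false negative rate (FNR).
    Labels are 1 for the positive class and 0 for the negative class."""
    report = {}
    for g in sorted(set(groups)):
        rows = [(t, p) for t, p, grp in zip(y_true, y_pred, groups) if grp == g]
        false_pos = sum(1 for t, p in rows if t == 0 and p == 1)
        false_neg = sum(1 for t, p in rows if t == 1 and p == 0)
        negatives = sum(1 for t, _ in rows if t == 0)
        positives = sum(1 for t, _ in rows if t == 1)
        report[g] = {
            "FPR": false_pos / negatives if negatives else None,
            "FNR": false_neg / positives if positives else None,
        }
    return report


# Invented test results for eight people.
y_true = [1, 0, 1, 0, 1, 0, 1, 0]
y_pred = [1, 0, 1, 0, 0, 1, 1, 0]
groups = ["urban"] * 4 + ["rural"] * 4
print(audit_by_group(y_true, y_pred, groups))
# {'rural': {'FPR': 0.5, 'FNR': 0.5}, 'urban': {'FPR': 0.0, 'FNR': 0.0}}
```

Overall, this model gets six of eight cases right, but every mistake falls on rural users: exactly the kind of differential performance an audit is meant to catch before deployment.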

Strategy 3: Algorithmic fairness constraints

Some machine learning frameworks allow fairness constraints to be built directly into the training objective. Instead of optimising only for overall accuracy, the model is required to also maintain equitable performance across defined groups. This is an active area of research in AI fairness.
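
One common way to write such an objective (a standard formulation in the fairness literature, shown here as a sketch rather than the method of any particular framework) adds a penalty for the gap in positive-prediction rates between two groups a and b:

```latex
\min_{\theta} \; \underbrace{\mathcal{L}(\theta)}_{\text{prediction error}}
\;+\; \lambda \,\Big|\, P(\hat{y}_{\theta} = 1 \mid g = a) \;-\; P(\hat{y}_{\theta} = 1 \mid g = b) \,\Big|,
\qquad \lambda \ge 0
```

Here θ stands for the model's parameters, ℒ(θ) is the ordinary training loss, and λ sets how much accuracy the developer is willing to trade for a smaller gap: λ = 0 gives ordinary training, while a larger λ pushes the two groups' positive-prediction rates closer together. This particular penalty encodes one fairness definition (demographic parity); other definitions, such as equal error rates across groups, use a different penalty term. In practice the hard predictions are usually replaced by a smooth approximation, such as average predicted probabilities, so the objective can be optimised with gradient descent.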

Strategy 4: Human oversight in high-stakes decisions

For decisions that significantly affect people’s lives — loan approvals, criminal sentencing, medical diagnosis, school admissions — AI outputs should be reviewed by a qualified human before action is taken. Automated decisions in high-stakes contexts, without human review, amplify whatever biases exist in the system.
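
In code, human oversight often takes the form of a routing rule that decides which outputs may be acted on automatically. This minimal sketch assumes a hypothetical list of high-stakes decision types and a hypothetical 0.9 confidence cut-off.

```python
# Hypothetical settings: which decision types count as high-stakes, and the
# minimum confidence for acting automatically on anything else.
HIGH_STAKES = {"loan_approval", "medical_diagnosis", "school_admission"}
CONFIDENCE_FLOOR = 0.9


def route_decision(decision_type, prediction, confidence):
    """Return ('automatic' or 'human_review', prediction)."""
    if decision_type in HIGH_STAKES:
        # Always reviewed, however confident the model is.
        return ("human_review", prediction)
    if confidence < CONFIDENCE_FLOOR:
        # The model itself is unsure, so a person should check.
        return ("human_review", prediction)
    return ("automatic", prediction)


print(route_decision("loan_approval", "reject", 0.97))  # ('human_review', 'reject')
print(route_decision("spam_filter", "spam", 0.95))      # ('automatic', 'spam')
print(route_decision("spam_filter", "spam", 0.60))      # ('human_review', 'spam')
```

High-stakes cases go to a person even when the model is confident, because a biased model can be confidently wrong.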

Strategy 5: Ongoing monitoring and feedback

A model that was fair at deployment can become biased over time as the world changes and the data it sees shifts. Continuous monitoring — tracking performance across demographic groups in production — is required to catch and correct emerging bias.
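
Ongoing monitoring can be sketched as a scheduled check that compares each group's current performance with its performance at launch. The accuracy figures and the 5-percentage-point alert threshold below are hypothetical.

```python
def monitoring_alerts(current_accuracy, baseline_accuracy, max_drop=0.05):
    """Compare each group's accuracy in the latest period with its accuracy
    at deployment; return (group, drop) for every drop larger than max_drop."""
    alerts = []
    for group, acc in current_accuracy.items():
        drop = baseline_accuracy[group] - acc
        if drop > max_drop:
            alerts.append((group, round(drop, 2)))
    return alerts


# Invented accuracy figures for illustration.
at_deployment = {"urban": 0.91, "rural": 0.89}
this_month = {"urban": 0.90, "rural": 0.78}
print(monitoring_alerts(this_month, at_deployment))  # [('rural', 0.11)]
```

The check runs per group rather than on overall accuracy, since a sharp drop for one group can be diluted in the average.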

Developing AI Policies — What Class 11 Students Need to Know

The CBSE Class 11 curriculum includes a practical activity: a comparative study of AI policies established by different organisations and regulatory bodies. This is a graded practical file component.

An AI policy is a set of principles, guidelines, or rules that governs how AI is developed and used within an organisation or jurisdiction. Different bodies have published their own AI policies — and they differ in meaningful ways.

UNESCO’s Recommendation on the Ethics of AI (2021) was the first global normative instrument on AI ethics, adopted by 193 countries. It emphasises human dignity, environmental sustainability, and the right to privacy.

India’s National Strategy for Artificial Intelligence (NITI Aayog, 2018) focuses on AI for social and economic good — healthcare, agriculture, education, infrastructure — with an emphasis on inclusive AI that benefits all sections of society.

The European Union AI Act (2024) is the world’s first comprehensive AI regulation. It classifies AI systems by risk level (unacceptable, high, limited, minimal) and imposes legal obligations on developers and deployers based on risk classification.

IBM’s AI Ethics Principles include fairness, robustness, transparency, explainability, and privacy — and have been operationalised into internal review processes and open-source toolkits.

For your practical activity, the key analytical task is: how do these policies differ in their priorities, their enforcement mechanisms, and the values they place at the centre? There is no single correct policy — and recognising the genuine differences is the sophistication your examiner is looking for.

Key Differences: AI Ethics vs AI Law

| Dimension | AI Ethics | AI Law / Regulation |
|---|---|---|
| Nature | Principles and values — what AI should do | Rules and requirements — what AI must do |
| Enforcer | Professional community, public trust | Government, courts, regulatory bodies |
| Consequence of violation | Reputational harm, loss of trust | Legal penalty, fines, injunctions |
| Flexibility | Adaptable to context | Fixed until legislation is amended |
| India examples | NITI Aayog AI principles | DPDPA 2023, IT Act |
| Global examples | UNESCO AI Ethics Recommendation | EU AI Act |

Understanding that ethics and law are related but distinct is a mark of genuine comprehension — and a question that regularly appears in Class 11 AI viva examinations.

Real-World Applications — AI Ethics in India

Aadhaar Authentication and Privacy

The Aadhaar system links biometric identity data to nearly every major government service in India. When the Supreme Court ruled in the Puttaswamy judgment (2017) that privacy is a fundamental right, it raised questions that are directly relevant to AI ethics: when can the state collect biometric data? Under what conditions? With what protections? The ethical pillar of privacy is not abstract here — it is constitutional.

UPI and Fraud Detection

UPI processes billions of transactions monthly. Fraud detection on UPI uses machine learning to flag suspicious transactions in real time. The ethical questions are immediate: if the system flags a legitimate transaction, the user may be denied access to their money during an emergency. If it fails to flag a fraudulent one, they lose money. The trade-off between false positives and false negatives is simultaneously a technical problem and an ethical one — about who bears the cost of errors.
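
The trade-off can be made concrete with a few invented fraud scores. This is a hypothetical sketch, not how NPCI or any bank actually scores UPI transactions: every transaction scoring at or above a threshold is blocked, and the code counts both kinds of mistake at three thresholds.

```python
# Invented fraud scores from a hypothetical model: (score, is_actually_fraud)
transactions = [
    (0.05, False), (0.20, False), (0.35, False), (0.55, False),
    (0.60, True), (0.70, False), (0.85, True), (0.95, True),
]


def errors_at(threshold, data):
    """Count both kinds of mistake if every score >= threshold is blocked."""
    false_positives = sum(1 for s, fraud in data if s >= threshold and not fraud)
    false_negatives = sum(1 for s, fraud in data if s < threshold and fraud)
    return false_positives, false_negatives


for t in (0.3, 0.5, 0.8):
    fp, fn = errors_at(t, transactions)
    print(f"threshold {t}: genuine payments blocked = {fp}, frauds missed = {fn}")
```

Lowering the threshold catches every fraud in this data but blocks more genuine payments; raising it spares genuine users but lets a fraud through. Choosing the threshold is deciding who bears the cost of errors.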

ISRO’s Satellite AI and Accountability

ISRO uses AI in satellite image analysis, predictive modelling for natural disasters, and crop monitoring. These are life-affecting applications — flood warnings based on AI models, crop failure predictions that affect food security policy. Accountability is the critical pillar here: when a prediction is wrong, who reviews the model? Who is responsible for the consequence?

Zomato’s Algorithmic Pricing and Transparency

Zomato uses algorithms that vary delivery fees and decide how restaurants are ranked in search results. Customers and restaurant partners often cannot tell why a fee changes or why a particular restaurant appears higher in the results. This is a transparency problem, and a commercial one rather than merely a philosophical one, because opacity in algorithmic pricing erodes trust.

Common Mistakes in Exam Answers on AI Ethics

Mistake 1: Defining ethics as “rules for behaviour” without connecting it to AI-specific consequences. The correct frame is: ethics in AI is about ensuring that AI systems — which can affect millions of people at scale — are designed and deployed in ways that respect human dignity, fairness, and accountability. The scale is what makes AI ethics a distinct field.

Mistake 2: Listing the Five Pillars without explaining the tension between them. In practice, the five pillars conflict. Full transparency can compromise privacy (you cannot fully explain a model’s decision without revealing information about the training data). Maximum safety can compromise fairness (an overly conservative safety threshold may disproportionately reject valid applications from under-represented groups). Recognising these tensions is what earns marks beyond rote memorisation.

Mistake 3: Treating bias as always intentional. Bias is usually not deliberate. This is what makes it dangerous — a well-meaning team building a genuinely useful system can produce a biased output because the training data reflected historical inequalities they did not notice or correct for.

Mistake 4: Confusing AI ethics with AI law. They overlap, but they are not the same. An AI system can be fully legal and still be deeply unethical. An AI system can violate a law even when the developers had ethical intentions. The distinction matters in exam answers.

Exam Strategy — How to Answer AI Ethics Questions

For a 2-mark question (e.g., “What is AI bias? Give one example.”): Write a one-sentence definition that mentions systematic distortion and differential treatment, then give one specific, named example — not a vague one. “Facial recognition systems that perform worse on darker skin tones due to underrepresentation in training data” scores full marks. “AI can be biased against some people” does not.

For a 4-mark question (e.g., “Explain any two pillars of AI ethics with examples.”): Name each pillar clearly, define it in one sentence, and give one specific example. Examiners credit: named pillar → correct definition → concrete example. Two pillars done this way scores 4/4. Four pillars done vaguely does not.

For a viva question (e.g., “How would you mitigate bias in your AI project?”): The answer examiners reward has three components: acknowledge that bias can exist even in well-intentioned projects, name a specific source of bias that is relevant to your project’s domain, and describe one concrete mitigation strategy you would apply. A student who says “I would collect more data” is giving a partial answer. A student who says “I would conduct a bias audit on my model’s performance across gender groups before deployment, because my training data may over-represent urban users” is giving a complete one.

What the marking scheme does not say — but examiners consistently reward — is when a student explains the conflict between ethical pillars. If you note in your answer that transparency can sometimes conflict with privacy, and you explain why, you are demonstrating depth that rote answers never achieve.

Try It Yourself — CBSE Activities

Activity 1: Moral Machine (Class 9 and Class 11)

The Moral Machine (moralmachine.net) presents you with a series of trolley-problem scenarios involving self-driving cars. In each scenario, a car with failed brakes must choose between hitting one group of people or another. You decide which group the car should save — then see how your choices compare with millions of people globally.

What to do:

- Visit moralmachine.net

- Complete at least 13 scenarios (one full session)

- Note your results: which factors did you prioritise — saving more lives, saving younger people, saving passengers over pedestrians, obeying traffic rules?

- Compare your results to the global average shown after the session

- Reflect: do your choices reflect a consequentialist, deontological, or virtue ethics approach?

For your practical file: Document your key results, your reflection on which framework your choices most closely matched, and one paragraph on what this exercise reveals about the difficulty of encoding ethics into AI systems.

Activity 2: Survival of the Best Fit (Class 11)

Survival of the Best Fit (survivalofthebestfit.com) is an interactive game that simulates what happens when a hiring algorithm is trained on biased data. You start by manually reviewing CVs to hire candidates. The AI learns from your selections. Then it takes over — and you watch it replicate the biases in your original choices.

What to do:

- Play through the full game (approximately 10–15 minutes)

- Observe which candidates the AI starts to systematically favour or reject

- Identify which source of bias the game is demonstrating (representation bias or historical bias)

- Note one strategy from the “Mitigating Bias” section of this guide that would have corrected the AI’s behaviour

For your practical file: Write a 150-word summary of what happened in the game, what type of bias it demonstrated, and what mitigation strategy would apply. This directly maps to the “Activity: Role Play on biased AI systems” practical in Class 11 Unit 8.

Activity 3: My Goodness (Class 10)

My Goodness (my-goodness.net) is a platform that presents ethical dilemmas and asks you to make decisions, then reveals how other users responded globally.

What to do:

- Visit my-goodness.net and select a scenario

- Make your decision and record your reasoning

- Compare your response to the global results

- Identify which ethical framework (consequentialist, deontological, virtue ethics) your reasoning most closely followed

For your practical file: Document your scenario, your decision, your reasoning, and your reflection on which ethical framework you applied. This maps directly to the Class 10 Unit 1 My Goodness activity.

Quick Revision Box

| Term | Definition |
|---|---|
| AI Ethics | The field that examines the moral implications of how AI systems are designed, deployed, and governed |
| Ethical Framework | A structured set of principles for evaluating whether an action or system is morally acceptable |
| Bioethics | An ethical framework built on autonomy, beneficence, non-maleficence, and justice — developed in healthcare, now applied to health AI |
| Transparency | The principle that AI systems should be explainable to those affected by their decisions |
| Fairness/Justice | The principle that AI systems should produce equitable outcomes across different demographic groups |
| Privacy | The principle that personal data should be collected and used only with meaningful consent and adequate protection |
| Accountability | The principle that when an AI system causes harm, clear responsibility must be assigned |
| Safety and Reliability | The principle that AI systems must behave predictably and fail gracefully rather than dangerously |
| AI Bias | A systematic distortion in AI outputs that causes certain groups or outcomes to be treated unfairly |
| Historical Bias | Bias that enters a model because the training data reflects past discrimination |
| Representation Bias | Bias that enters a model because certain groups are underrepresented in training data |
| Bias Audit | An evaluation of a trained model to check for differential performance across demographic groups |
| AI Policy | A formal set of guidelines governing how AI is developed and deployed by an organisation or government |
| DPDPA 2023 | India’s Digital Personal Data Protection Act — the primary legal framework governing data consent and use in India |

☑ You can name and define all Five Pillars of AI Ethics without looking

☑ You can explain at least two sources of AI bias with a real example each

☑ You can describe at least two bias mitigation strategies

☑ You can distinguish between AI ethics and AI law with one concrete example of each

☐ You can answer all viva questions on AI Ethics and compare two AI policies from different organisations without hesitation

🎓 Recommended by CBSE: Continue Learning on IBM SkillsBuild

The CBSE Class 11 AI curriculum recommends completing the “AI Ethics” course on IBM SkillsBuild alongside Unit 8.

Why this matters for you: Completing this course counts as your IBM SkillsBuild certification for the Practical File — a mandatory component worth marks in your Class 11 assessment.

Free to access. No signup fee. Completing it earns a digital badge you can include in your Practical File.

Practice Questions

Q1 (2 marks): What is AI bias? Give one example of how it can enter an AI system.

Model answer: AI bias is a systematic distortion in the output of an AI system that causes certain groups or outcomes to be treated unfairly — not because of a coding error, but because of patterns in training data or the design of the system itself. One example: a facial recognition system trained mostly on lighter-skinned faces tends to have higher error rates on darker-skinned faces, an instance of representation bias caused by underrepresentation in training data.

Examiner note: Full marks require both a definition and a named example. “AI can be biased” without explanation scores 0/2. Naming the type of bias (representation, historical, etc.) is not required for 2 marks but distinguishes a strong answer.

Q2 (4 marks): Explain any two pillars of AI Ethics with examples from the Indian context.

Model answer:

Transparency requires that AI systems be explainable to those affected by their decisions. In India, when the Aadhaar biometric authentication system rejects a citizen’s identity verification — preventing access to rations, banking, or government services — the person typically receives no explanation of why the rejection occurred. A transparent system would provide the reason, and a pathway for correction.

Accountability requires that when an AI system causes harm, there is a clear answer to the question of who is responsible. ISRO uses AI models to predict flood risks and crop failures — decisions with major consequences for millions of people. Accountability requires that these systems have defined owners, audit trails, and review processes when predictions prove wrong, rather than diffusing responsibility across the development team, the deploying agency, and the end users.

Examiner note: Each pillar scores 2 marks — 1 for definition, 1 for a correct and specific example. Generic examples (“used in healthcare”) score partial marks. Named, India-specific examples score full marks.

Q3 (MCQ): Which of the following is an example of historical bias in an AI system?

(a) A speech recognition system that performs worse in noisy environments

(b) A hiring algorithm trained on past recruitment data that replicates gender-based selection patterns

(c) A medical diagnosis system that recommends expensive treatments

(d) A recommendation engine that suggests items similar to what a user has already purchased

Answer: (b)

Explanation: Historical bias occurs when training data reflects past discrimination or inequality. A hiring algorithm trained on past decisions that were themselves biased — for example, from a period when women were less frequently hired — will replicate those patterns. Options (a), (c), and (d) describe accuracy issues, cost issues, and recommendation logic — none of which are examples of historical bias.

Frequently Asked Questions

Q1: Is AI ethics part of the theory exam or only the practical?

In Class 9 and 10, ethics is embedded in the Project Cycle unit — you are expected to document ethical considerations in your project, which is assessed as part of project work. In Class 11, Unit 8 (AI Ethics and Values) carries 4 theory marks and 4 practical marks separately. This means you can be asked direct theory questions on the Five Pillars, bias, and AI policies — and also assessed on your practical activities (Moral Machine, Survival of the Best Fit, the comparative AI policy study). Do not treat ethics as purely practical content in Class 11.

Q2: Do I need to memorise all Five Pillars in order?

You do not need to memorise them in a specific order — but you need to know all five: Transparency, Fairness/Justice, Privacy, Accountability, and Safety and Reliability. Forgetting one costs you marks on any question that asks you to “list the five pillars.” A reliable memory technique: use the acronym TAPAS — Transparency, Accountability, Privacy, (F)Airness, Safety.

Q3: What is the difference between bias and error in an AI system?

An error is a wrong prediction that can happen to anyone — the model occasionally misclassifies a dog as a cat. Bias is a systematic pattern of wrong predictions that disproportionately affects a specific group — the model misclassifies dark-coloured cats at twice the rate of light-coloured cats. Errors are expected and measured by accuracy metrics. Bias requires specific fairness audits to detect, because it is hidden within overall accuracy numbers that may look acceptable.
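
A short sketch with invented numbers shows how an acceptable-looking overall accuracy can hide a group whose error rate is twice as high. The classifier and its results are hypothetical.

```python
def accuracy(pairs):
    """Fraction of (true_label, predicted_label) pairs that match."""
    return sum(1 for true, pred in pairs if true == pred) / len(pairs)


# Invented results from a hypothetical cat/dog classifier, tested on cats only.
light_cats = [("cat", "cat")] * 95 + [("cat", "dog")] * 5   # 5% error rate
dark_cats = [("cat", "cat")] * 18 + [("cat", "dog")] * 2    # 10% error rate

overall = accuracy(light_cats + dark_cats)
print(round(overall, 2), accuracy(light_cats), accuracy(dark_cats))  # 0.94 0.95 0.9
```

The overall figure of about 94% looks acceptable, but dark-coloured cats are misclassified at twice the rate of light-coloured ones, and only splitting the results by group reveals it.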

Q4: The EU AI Act is not in the CBSE syllabus. Should I mention it?

The CBSE Class 11 practical activity requires a comparative study of AI policies from different organisations. The EU AI Act is a legitimate source for that comparison — it is the most comprehensive AI-specific regulation currently in force globally. You can mention it and compare it to India’s NITI Aayog strategy or UNESCO’s recommendation. Label any policy not explicitly named in your CBSE PDF as additional context, not as a required exam answer.

Q5: Will AI ethics actually matter when I am working in AI professionally?

Yes, and it matters earlier in a career than most students expect. Many AI development teams are now expected, by their employers, customers, or regulators, to conduct bias audits, document ethical considerations in model cards, and demonstrate compliance with data protection regulations before systems go live. In India, the DPDPA 2023 creates direct legal obligations for organisations that process digital personal data, including data used in AI systems. Beyond compliance, the AI teams that build systems people actually trust (and that avoid public backlash or regulatory action) are the ones that took ethics seriously from the design stage. Understanding ethics is not a soft skill addition to a technical career. It is a technical requirement.

Action Plan

Exam Practice Checklist

- [ ] Write out all Five Pillars from memory — name, one-sentence definition, one India example

- [ ] Explain historical bias and representation bias to yourself — without looking at notes

- [ ] List three bias mitigation strategies and name the scenario each is best suited for

- [ ] Distinguish AI ethics from AI law in two sentences

- [ ] Compare any two AI policies (NITI Aayog vs UNESCO, or IBM vs EU AI Act) — write three points of difference

- [ ] Complete the Moral Machine activity and write your practical file reflection

- [ ] Play Survival of the Best Fit and identify which bias type it demonstrates

CBSE Activity: Moral Machine and Survival of the Best Fit

Both activities are specified in the CBSE Class 11 Unit 8 syllabus. Complete both and document them in your practical file with: (a) what you did, (b) what you observed, (c) what ethical concept it demonstrated, and (d) your own reflection. Your examiner will ask about these in the viva.

Project Idea: AI Policy Comparison Chart

Build a structured comparison of three AI policies — NITI Aayog’s AI Strategy, UNESCO’s Recommendation on AI Ethics, and IBM’s AI Ethics Principles — across five dimensions: core values, enforcement mechanism, who it applies to, stance on bias, and stance on transparency. Present it as a table with a short analytical paragraph on which policy is most actionable for an Indian AI developer, and why. This is exactly the format the Class 11 practical requires — and doing it carefully, rather than copying, will show clearly in your viva.

The student who understands AI ethics the right way does not just score better in Unit 8. They are the AI developer who builds systems that people can actually trust — in a country where AI is already making decisions about health, money, and identity for hundreds of millions of people.