Your Class 10 AI practical exam has two viva components: a 5-mark viva on your Practical File, and a 5-mark viva on your Project Work. That’s 10 marks total — and most students leave them on the table because they never prepare specifically for viva questions.

This guide covers the 50 most important viva questions across all units of CBSE AI Code 417 (2025-26), with clear model answers that are short enough to say confidently and complete enough to score full marks.

What You’ll Learn

- The most asked viva questions from each Class 10 AI unit

- How to answer concisely without memorising paragraphs

- What your examiner is actually checking when they ask each question

How the Class 10 AI Viva Works

According to the CBSE 2025-26 curriculum (Code 417), Class 10 AI has two viva components under Part C:

Viva Voce (Practical File) — 5 marks: Based on the programs and activities in your practical file. Examiner will look at your file and ask questions about specific programs you submitted.

Viva Voce (Project Work) — 5 marks: Based on the project, field visit, or student portfolio you submitted. Questions focus on your chosen problem, data, and findings.

Questions typically last 10–15 minutes and are asked by an external examiner. They are drawn from your file and project, so you must be able to explain everything you submitted in your own words.

Unit 1 — AI Project Cycle & Ethical Frameworks (7 Marks in Theory)

Q1. What are the five stages of the AI Project Cycle? Problem Scoping, Data Acquisition, Data Exploration, Modelling, and Evaluation. Each stage must be completed before moving to the next — skipping stages leads to poor-quality AI systems.

Q2. What is Problem Scoping? Why does it come first? Problem Scoping is the stage where you clearly define what problem you want to solve, who is affected by it, and what success looks like. It comes first because every other decision — what data to collect, what model to use — depends on having a clear problem definition.

Q3. What is an Ethical Framework in AI? An ethical framework is a set of principles that guide how AI systems should be designed and used — ensuring they are fair, transparent, safe, and respectful of human rights. In Class 10, CBSE introduces frameworks like Bioethics and the My Goodness activity to help students evaluate AI dilemmas.

Q4. What is the difference between AI bias and privacy? AI bias means the model produces unfair results for certain groups — often because of imbalanced training data (example: a loan approval system that unfairly rejects applicants from certain regions). Privacy refers to protecting individuals’ personal data from being collected or used without consent (example: a health app sharing patient data with advertisers without permission). They are related concerns but different problems.

Q5. Give one real-world example of an AI ethics issue in India. The CoWIN vaccination database had a reported data leak in 2023, raising concerns about how health data is stored and protected. This is an example of privacy risk in a government AI system — a large volume of personal health records was potentially accessible without consent.

Unit 2 — Advanced Concepts of Modeling in AI (11 Marks)

Q6. What is the difference between AI, Machine Learning, and Deep Learning? AI is the broadest concept — machines performing tasks that require human intelligence. Machine Learning is a subset of AI where systems learn from data without being explicitly programmed for every task. Deep Learning is a subset of ML that uses neural networks with many layers to learn from very large datasets. Think of it as nested circles: AI contains ML, and ML contains Deep Learning.

Q7. What is supervised learning? Give an example. Supervised learning is when a model is trained on labelled data — each input has a known correct output. The model learns to map inputs to outputs. Example: training a spam filter on emails that have been labelled “spam” or “not spam” by humans.

Q8. What is unsupervised learning? How is it different from supervised learning? In unsupervised learning, the model is given data without labels and must discover patterns on its own. Example: grouping customers into segments based on purchasing habits, without telling the model how many groups to form or what they should represent. The key difference: supervised learning needs labelled data; unsupervised learning does not.

Q9. What is a Decision Tree? A Decision Tree is a supervised learning algorithm that makes decisions by asking a series of yes/no questions about the data, splitting it at each step, until it reaches a final prediction. It resembles a flowchart. Example: a medical decision tree might first ask “Does the patient have fever?” then “Is the temperature above 102°F?” and so on, until it reaches a diagnosis.

Q10. What is Linear Regression? When would you use it? Linear Regression finds the best straight line that represents the relationship between an input variable (x) and a continuous output variable (y). You would use it when you want to predict a numeric value — for example, predicting a student’s exam score based on the number of hours they studied.

Q11. What is Logistic Regression? How is it different from Linear Regression? Logistic Regression is used when the output is a category — usually binary (yes/no, pass/fail, spam/not spam). Unlike Linear Regression, which predicts a continuous number, Logistic Regression predicts the probability of belonging to a class. Example: predicting whether a loan application will be approved or rejected.

Q12. What is Reinforcement Learning? Reinforcement Learning is when an AI agent learns by interacting with an environment and receiving rewards for correct actions and penalties for incorrect ones. Over time, it learns to maximise its total reward. Example: an AI learning to play chess by playing thousands of games, winning and losing, until it develops an effective strategy.

Q13. What is a training dataset and a test dataset? The training dataset is the data used to teach (train) the model — the model learns patterns from this data. The test dataset is a separate set of data the model has never seen, used to evaluate how well the trained model performs on new inputs. You should never use test data during training — otherwise you cannot trust the evaluation results.

Unit 3 — Evaluating Models (10 Marks)

Q14. What is a Confusion Matrix? A Confusion Matrix is a table that shows the performance of a classification model by comparing predicted labels with actual labels. It has four values: True Positive (TP), True Negative (TN), False Positive (FP), and False Negative (FN). From these four values, you can calculate accuracy, precision, recall, and F1 score.

Q15. What is accuracy? What is its formula? Accuracy is the percentage of total predictions the model got right. Formula: Accuracy = (TP + TN) / (TP + TN + FP + FN) × 100

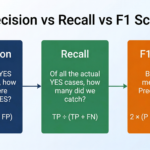

Q16. What is the difference between precision and recall? Precision measures what fraction of the model’s positive predictions were actually correct: Precision = TP / (TP + FP). Recall measures what fraction of all actual positives the model correctly identified: Recall = TP / (TP + FN). Example: in a cancer detection model, high recall is more important — you want to catch every real case, even if it means some false alarms.

Q17. What is the F1 Score? Why is it useful? The F1 Score is the harmonic mean of precision and recall: F1 = 2 × (Precision × Recall) / (Precision + Recall). It is useful when your dataset is imbalanced — for example, when 95% of cases are “not fraud” and only 5% are “fraud.” Accuracy alone would be misleading in this case; F1 Score gives a more complete picture.

Q18. What is overfitting? Overfitting is when a model learns the training data so thoroughly — including noise and irrelevant patterns — that it performs well on training data but poorly on new, unseen data. It is like a student who memorises exact exam questions but cannot answer a rephrased version of the same question.

Q19. What is underfitting? Underfitting is when a model is too simple to capture the underlying patterns in the data, performing poorly on both training data and new data. Example: trying to fit a straight line to data that has a curved relationship.

Q20. What is train-test split? What ratio is typically used? Train-test split is the process of dividing your dataset into two parts: one for training the model and one for evaluating it. A common ratio is 80:20 (80% training, 20% testing), though 70:30 is also used. This ensures the model is tested on data it has never seen during training.

Unit 4 — Statistical Data (Practical Only)

Note: Unit 4 Statistical Data is evaluated in practicals only — it does not have a theory marks allocation. However, examiners can and do ask about it in the practical viva.

Q21. What tools does CBSE recommend for Statistical Data analysis in Class 10? The CBSE 2025-26 curriculum prescribes Orange Data Mining and MS Excel for statistical data analysis. The practical includes the Palmer Penguins dataset in Orange and statistical analysis tasks in Excel.

Q22. What is the Palmer Penguins dataset used for in Orange? The Palmer Penguins dataset is used to practise data classification and visualisation using Orange Data Mining. It contains measurements (bill length, flipper length, body mass, etc.) of three penguin species. Students use it to build classification models and evaluate their performance without writing any code.

Q23. What is mean, median, and mode? Give an example where median is more useful than mean. Mean is the average; median is the middle value when data is sorted; mode is the most frequent value. Median is more useful than mean when data has extreme outliers. Example: if nine people earn ₹20,000/month and one person earns ₹20,00,000/month, the mean salary appears very high but misrepresents the typical earner — the median is a truer reflection of the “middle” income.

Unit 5 — Computer Vision (4 Marks)

Q24. What is Computer Vision? Computer Vision is the branch of AI that enables machines to interpret and understand visual information — images and videos — the way humans do. Applications include face recognition, object detection, medical imaging, and self-driving cars.

Q25. What is image classification? Image classification is the task of assigning a label or category to an image. Example: a model trained to classify X-ray images as “pneumonia detected” or “normal.” The model analyses patterns in pixel values to make this decision.

Q26. What is Teachable Machine? Have you used it? Teachable Machine is a free, no-code Google tool that allows users to train an image, sound, or pose classification model directly in a browser, without writing code. In Class 10 AI, it is commonly used for practical activities — for example, training a model to identify whether a student is sitting or standing using the device camera. Be ready to describe what you trained and what accuracy you achieved.

Q27. What is the difference between image classification and object detection? Image classification assigns a single label to an entire image (example: “this is a cat”). Object detection identifies and locates multiple objects within an image, drawing bounding boxes around each (example: “there is a cat at the top left and a dog at the bottom right”). Object detection is more complex.

Unit 6 — Natural Language Processing (8 Marks)

Q28. What is NLP? Give two applications. NLP (Natural Language Processing) is the branch of AI that processes and understands human language. Two applications: (1) Google Translate — translating text from one language to another; (2) Sentiment Analysis — determining whether a product review is positive, negative, or neutral.

Q29. What is tokenisation? Tokenisation is the process of splitting a piece of text into individual units called tokens — usually words or sentences. Example: the sentence “I love AI” would be tokenised into three tokens: [“I”, “love”, “AI”]. It is the first step in most NLP pipelines.

Q30. What is a Bag of Words (BoW) model? A Bag of Words is a representation that converts text into a numerical vector by counting how many times each word appears in the document, ignoring word order and grammar. Example: “The dog sat on the mat” and “The mat sat on the dog” would produce the same BoW vector — because BoW only counts words, not their sequence.

Q31. What is sentiment analysis? Give a real-world example. Sentiment analysis is the use of NLP to determine whether a piece of text expresses a positive, negative, or neutral opinion. Example: Zomato uses sentiment analysis to automatically categorise customer reviews — flagging negative feedback for follow-up and highlighting positive reviews in restaurant listings.

Q32. What is the difference between syntax and semantics in NLP? Syntax is about grammatical structure — whether a sentence is formed correctly according to language rules. Semantics is about meaning — what the sentence actually communicates. “Dogs barks loudly” has a syntax error (subject-verb disagreement). “The sky is green” is syntactically correct but semantically false.

Q33. What is a chatbot? What is the difference between a rule-based chatbot and an AI chatbot? A chatbot is a program designed to simulate human conversation. A rule-based chatbot follows pre-written if-then rules — it can only respond to specific inputs it was programmed for (example: a hotel booking bot with fixed menus). An AI chatbot uses machine learning and NLP to understand natural language and generate more flexible, context-aware responses (example: ChatGPT, Google Gemini).

Unit 7 — Advance Python (Practical Only)

Unit 7 is evaluated in practicals only, but viva questions are frequently drawn from Python programs in your practical file.

Q34. What is a DataFrame? A DataFrame is a two-dimensional, tabular data structure in Pandas — like a spreadsheet with rows and columns. Each column can hold a different data type. You create one using pd.DataFrame() and can load data from CSV files, dictionaries, or lists.

Q35. How do you check for missing values in a DataFrame? Using df.isnull().sum() — this returns the count of missing (null) values in each column. Once you know where the missing values are, you can either fill them (df.fillna()) or drop the affected rows (df.dropna()).

Q36. What does import pandas as pd mean? It imports the Pandas library and assigns it the alias pd. After this line, instead of typing pandas.DataFrame() every time, you can write pd.DataFrame() — shorter and more convenient. This is a convention followed by almost all Python developers.

Q37. What is the difference between df.head() and df.tail()? df.head(n) returns the first n rows of the DataFrame (default: 5). df.tail(n) returns the last n rows. They are used for quick inspection of a dataset when you open it — to check the structure and values without printing the entire dataset.

Q38. What is NumPy used for in AI? NumPy provides fast numerical computation for Python. It introduces the ndarray — a fast multi-dimensional array — and mathematical functions like np.mean(), np.std(), and np.dot(). Most ML libraries (like scikit-learn and TensorFlow) use NumPy arrays internally, so understanding NumPy is the foundation for AI programming.

Project Work Viva Questions

Q39. Describe your AI project in three sentences. Use this structure: (1) What problem you chose and which SDG it aligns with. (2) What data you used and how you collected it. (3) What model or solution you built, and what result it achieved.

Q40. What is the SDG your project is aligned with? What does that SDG aim to achieve? Be specific. Example: “Our project aligns with SDG 2 — Zero Hunger. It aims to end hunger and achieve food security. We built a crop yield prediction model to help small farmers estimate expected output based on rainfall and soil type.” Generic answers like “it helps people” will not score well.

Q41. What type of data did you use in your project? How did you collect it? Be specific about the data: numerical, categorical, image, or text. Mention your source — did you collect survey data, use a government open data portal like data.gov.in, download from Kaggle, or collect your own data at school or in your locality?

Q42. What challenge did you face while working on your project and how did you solve it? Examiners ask this to check genuine engagement. Prepare a real answer. Example: “Our dataset had many missing values in the rainfall column — we addressed this by filling them with the monthly average for that region using df.fillna().”

Q43. What would you improve if you had more time? Example: “We would collect data from more districts to make the model more accurate, and build a simple interface so farmers could use it directly on their mobile phones.”

Additional Questions (Questions 44–50)

Q44. What is the difference between classification and regression? Classification predicts a category (spam/not spam, pass/fail). Regression predicts a continuous number (exam score, house price). Both are supervised learning tasks.

Q45. What is an algorithm? An algorithm is a set of step-by-step instructions that a computer follows to solve a problem. In ML, an algorithm is the mathematical process the model uses to learn patterns from training data.

Q46. What is a label in machine learning? A label is the correct answer or output value associated with a training data point. In supervised learning, labels are what the model is trying to learn to predict. Example: in a dataset of fruit images, the label for each image is the fruit’s name — “apple,” “banana,” “mango.”

Q47. Give one difference between Computer Vision and NLP. Computer Vision deals with understanding visual data — images and videos. NLP deals with understanding text and spoken language. Both are domains of AI, but they use different types of input data and different model architectures.

Q48. What is data pre-processing? Why is it important? Data pre-processing is cleaning and preparing raw data before training a model — handling missing values, removing duplicates, normalising numbers, and encoding categories. Without it, even a good algorithm produces unreliable results. The common phrase is “garbage in, garbage out.”

Q49. What is Teachable Machine and how did you use it in your practical? (Customise with your actual practical activity.) Teachable Machine is a no-code Google tool where you upload sample images across categories, train a model in the browser, and immediately test it using your webcam. It demonstrates image classification without any coding.

Q50. If your model has 90% accuracy, is it a good model? Explain. Not necessarily. If 90% of your data belongs to one class (for example, 90% “not fraud”), a model that always predicts “not fraud” would have 90% accuracy while being completely useless. This is why you should also check precision, recall, and F1 score — especially for imbalanced datasets.

Quick Revision Box

| Term | One-Line Definition |

|---|---|

| Confusion Matrix | Table showing TP, TN, FP, FN to evaluate a classifier |

| Precision | Fraction of positive predictions that were actually correct |

| Recall | Fraction of actual positives the model correctly identified |

| Overfitting | Model memorises training data but fails on new data |

| Tokenisation | Splitting text into individual word or character units |

Practice Questions

Q1 (2-mark): What is the difference between overfitting and underfitting? How can each be addressed?

Model Answer: Overfitting occurs when a model learns training data too precisely — including noise — and performs poorly on new data. It can be addressed by using more diverse training data, simplifying the model, or applying regularisation techniques. Underfitting occurs when a model is too simple to capture the patterns in the data, performing poorly on both training and test data. It can be addressed by using a more complex model or training for longer. Both are signs of a model that does not generalise well.

Q2 (MCQ): In Class 10 AI (Code 417), the Viva Voce on Project Work carries how many marks?

a) 6 marks b) 10 marks c) 5 marks ✅ d) 15 marks

FAQ

Q1. Can the examiner ask questions from any unit, or only from what is in my practical file? Primarily from your practical file and project. However, examiners may ask related conceptual questions — for example, if your file includes a Confusion Matrix program, they may ask you to explain precision vs. recall. The safest approach: understand the concept behind every activity in your file.

Q2. What if I didn’t complete all activities in my practical file? Answer honestly if asked. Examiners appreciate students who say “I completed these five programs but couldn’t finish the Orange task” over students who make up answers. Your viva score reflects your understanding, not just your file’s completeness.

Q3. My project was done as a group. What if they ask me something my teammate handled? You are responsible for understanding the entire project, not just your individual part. Before the exam, make sure you can describe the full project, the data, the model, and the results — even the parts your teammates worked on. Read through your group’s full documentation at least once.