When you walk through an airport boarding gate in India, a camera may already know who you are — before you show your boarding pass.

What you’ll learn:

- How DigiYatra uses facial recognition AI across Indian airports

- What computer vision concepts from your CBSE syllabus are at work in this system

- How to analyse biometric AI through the CBSE ethics framework

What Is DigiYatra?

DigiYatra is a paperless boarding system launched by the Ministry of Civil Aviation and operationalised through DigiYatra Foundation — a joint venture involving the Airports Authority of India and several major private airport operators. It was piloted in 2022 and expanded to over 24 airports by 2024.

The system works as follows. A passenger registers on the DigiYatra mobile app and provides a selfie and their Aadhaar-linked details. At the airport, facial recognition cameras at entry gates, security checkpoints, and boarding gates match the passenger’s face against their pre-registered image. No physical boarding pass or ID document needs to be scanned at each point; the passenger’s face is the credential.

The stated goals are faster boarding, shorter queues, and reduced paper use. The government has positioned it as a flagship example of technology-driven citizen services.

The Computer Vision Inside DigiYatra

DigiYatra is a live deployment of the computer vision pipeline your CBSE curriculum covers. Understanding it technically is as important as analysing it ethically.

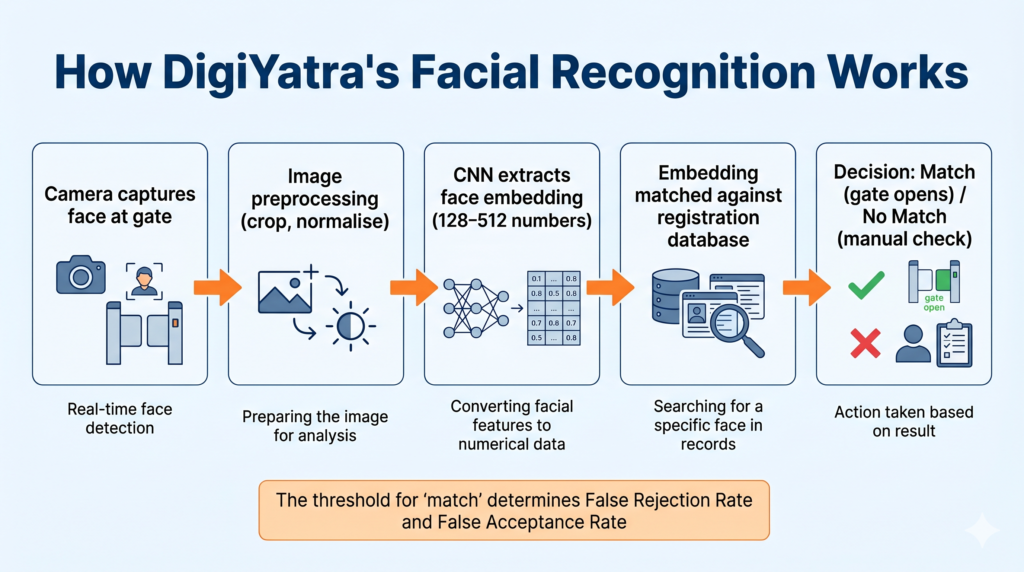

The core process follows the standard computer vision stages:

Image Acquisition: Cameras at airport checkpoints capture high-resolution face images in real time. Image quality and lighting conditions vary significantly between airports and time of day.

Preprocessing: Raw images are normalised — resized, cropped to the face region, adjusted for brightness and contrast — to create a standardised input for the recognition model.
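To make the preprocessing stage concrete, here is a minimal sketch in plain Python. It assumes a tiny grayscale image stored as a list of rows and a face box whose position is already known. The function names `crop` and `normalise_contrast` and the pixel values are illustrative only and are not taken from DigiYatra. Resizing is left out to keep the example short.

```python
def crop(image, top, left, height, width):
    """Return a rectangular region of a 2-D grayscale image (a list of rows)."""
    return [row[left:left + width] for row in image[top:top + height]]


def normalise_contrast(image):
    """Min-max scale pixel values so the darkest is 0.0 and the brightest is 1.0."""
    flat = [pixel for row in image for pixel in row]
    lo, hi = min(flat), max(flat)
    if hi == lo:  # completely flat image: avoid dividing by zero
        return [[0.0 for _ in row] for row in image]
    return [[(pixel - lo) / (hi - lo) for pixel in row] for row in image]


# Hypothetical 4x4 frame (values 0-255). The face box location is assumed known.
frame = [
    [10, 10, 10, 10],
    [10, 60, 110, 10],
    [10, 160, 210, 10],
    [10, 10, 10, 10],
]

face = crop(frame, top=1, left=1, height=2, width=2)
standardised = normalise_contrast(face)
print(standardised)
```

Whatever the original brightness of the frame, the model now receives values on the same 0 to 1 scale, which is the point of a standardised input.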

Feature Extraction: A deep learning model (typically a convolutional neural network) extracts a unique numerical representation of the face — called a face embedding or face vector. This is not the image itself; it is a mathematical summary of the face’s geometric and texture features.

Matching: The extracted embedding is compared against the stored embedding from the passenger’s registration. If the cosine similarity between the two vectors is at or above a defined threshold (or, when Euclidean distance is used instead, the distance is at or below a defined threshold), the system declares a match and the gate opens.

Decision Output: Match (gate opens, passenger proceeds) or No Match (passenger is directed to manual check).
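The matching and decision stages can be sketched in a few lines of Python. This is an illustration under stated assumptions, not DigiYatra’s actual code: the 4-number embeddings are made up (real ones have hundreds of dimensions), and the threshold of 0.8 is an arbitrary example value.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def decide(live_embedding, stored_embedding, threshold=0.8):
    """Return 'Match' if the similarity reaches the threshold, else 'No Match'."""
    score = cosine_similarity(live_embedding, stored_embedding)
    return "Match" if score >= threshold else "No Match"


# Hypothetical embeddings, invented for this example.
stored = [0.9, 0.1, 0.4, 0.2]           # saved at registration
same_person = [0.85, 0.15, 0.42, 0.18]  # live scan, slightly different numbers
different = [0.1, 0.9, 0.2, 0.7]        # live scan of someone else

print(decide(same_person, stored))
print(decide(different, stored))
```

Note that the live scan of the same person never produces exactly the stored numbers. The system only asks whether the two lists are similar enough, which is why the choice of threshold matters so much.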

📌 Class 9 — Note: The core idea is simple: the system converts a face into a list of numbers, and compares those numbers to a stored list. A “match” means the numbers are similar enough.

📌 Class 10/11 — Note: This is a classification problem — the model classifies each face scan as “known passenger” or “not recognised.” The accuracy of this classification has direct real-world consequences.

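📌 Class 12 — Note: The face embedding is a feature vector in high-dimensional space (typically 128 to 512 dimensions). If each passenger is compared only against their own registered embedding, matching is a one-to-one verification: a similarity score checked against a threshold. If the system instead searches a gallery of registered faces for the closest one, it is a nearest-neighbour search. In both cases, the threshold for what counts as “close enough” is a design parameter that directly determines the false acceptance rate and false rejection rate, which are key evaluation metrics from your Class 12 syllabus.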
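The trade-off between false acceptance and false rejection can be seen by sweeping the threshold over a set of similarity scores. The sketch below uses invented scores purely for illustration; they are not measurements of DigiYatra or of any real system, and `error_rates` is a hypothetical helper name.

```python
def error_rates(genuine_scores, impostor_scores, threshold):
    """Return (false acceptance rate, false rejection rate) at a given threshold."""
    false_rejects = sum(1 for s in genuine_scores if s < threshold)
    false_accepts = sum(1 for s in impostor_scores if s >= threshold)
    far = false_accepts / len(impostor_scores)
    frr = false_rejects / len(genuine_scores)
    return far, frr


# Invented similarity scores for illustration only.
genuine = [0.95, 0.91, 0.88, 0.79, 0.72]   # same person vs. own registration
impostor = [0.30, 0.45, 0.62, 0.74, 0.81]  # different person vs. registration

for threshold in (0.6, 0.75, 0.9):
    far, frr = error_rates(genuine, impostor, threshold)
    print(f"threshold={threshold:.2f}  FAR={far:.2f}  FRR={frr:.2f}")
```

With these toy scores, the lowest threshold rejects no genuine passenger but accepts three of the five impostors, while the highest threshold accepts no impostor but rejects three of the five genuine passengers. Raising the threshold trades false acceptances for false rejections, so it is a design decision, not a purely technical one.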

The CBSE Ethics Framework Applied to DigiYatra

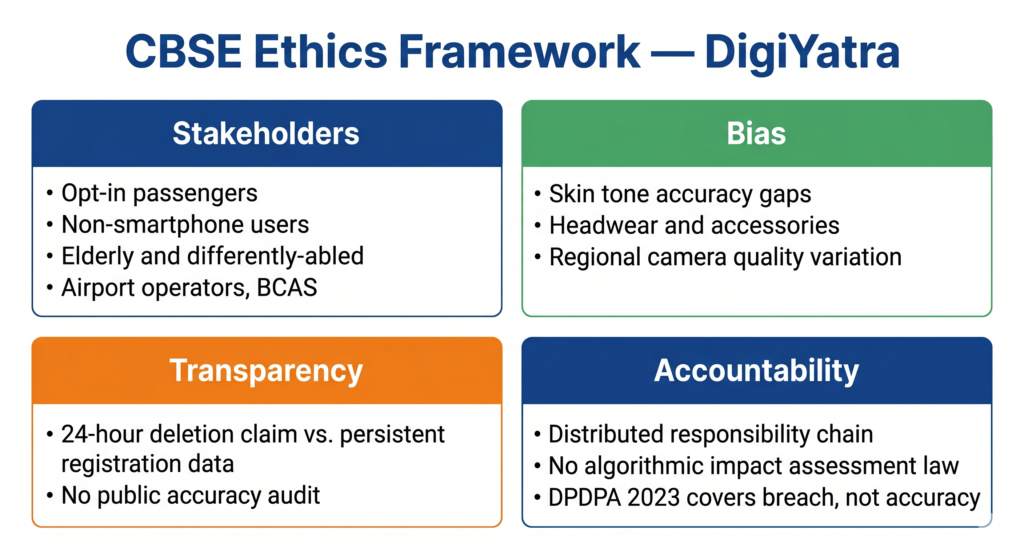

Stakeholders — Who Is Involved?

- Passengers who opt in — receive faster boarding; provide biometric data that is processed at multiple points during their journey

- Passengers who do not own smartphones or do not opt in — must use manual boarding processes; may face slower or different treatment depending on how airports implement the system

- Elderly and differently-abled passengers — facial recognition accuracy can be affected by age-related changes in facial structure, the use of accessories like glasses or masks, or limited ability to position oneself correctly in front of a camera

- Airport operators and airlines — benefit from reduced boarding time and queue management costs

- DigiYatra Foundation — the operating entity; holds passenger registration data

- BCAS (Bureau of Civil Aviation Security) — the regulatory body responsible for aviation security, which approved the system’s integration into airport security workflows

- Law enforcement — potential secondary users of airport facial recognition infrastructure, though DigiYatra’s stated scope is limited to boarding

Bias — Where Can Accuracy Fail Unequally?

Facial recognition accuracy is not uniform across populations. This is one of the most well-documented findings in AI ethics research globally, and it applies directly to DigiYatra.

Studies of commercial facial analysis systems (MIT Media Lab’s “Gender Shades” project is the most cited) found that error rates were significantly higher for darker-skinned individuals and for women, and highest for darker-skinned women, compared with lighter-skinned men. India’s diverse population spans a very wide range of skin tones, facial structures, and ages, which makes this bias problem especially acute.

Specific bias risks in DigiYatra:

- Skin tone variation: Training datasets used to build facial recognition models are frequently skewed toward lighter-skinned faces, leading to higher false rejection rates for darker-skinned passengers.

- Age and appearance change: A passenger who has aged, changed hairstyle, grown or removed facial hair, or gained or lost significant weight since registration may face higher rejection rates.

- Religious and cultural headwear: Passengers wearing a hijab, dupatta, niqab, or turban may be framed differently from their bare-headed registration photos, which is a known accuracy challenge.

- Camera angle and lighting: Passengers boarding at smaller regional airports may face lower-quality camera infrastructure than those at major international terminals, so accuracy may differ from one airport to another.

A false rejection — being told by the gate that your face does not match — is not just a technical inconvenience. At an airport, it creates anxiety, delays, and requires intervention by security or airline staff. The burden of system error falls on the passenger, not on the system.

📌 Class 10 callout: False rejection is an example of a false negative: the system incorrectly classifies a genuine passenger as a non-match. Your CBSE Class 10 syllabus covers false positives and false negatives in evaluation. DigiYatra is a real-world example of why these matter beyond the exam.
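The four evaluation outcomes can be written as a small lookup. This is a study aid with a hypothetical function name, treating “genuine passenger” as the positive class:

```python
def label_outcome(is_genuine_passenger, system_says_match):
    """Name the evaluation outcome for one gate decision."""
    if is_genuine_passenger and system_says_match:
        return "True positive"
    if is_genuine_passenger and not system_says_match:
        return "False negative (false rejection)"
    if not is_genuine_passenger and system_says_match:
        return "False positive (false acceptance)"
    return "True negative"


# A genuine passenger is turned away at the gate:
print(label_outcome(is_genuine_passenger=True, system_says_match=False))
```

The first argument is the ground truth and the second is the system’s decision. An error occurs whenever the two disagree.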

Transparency — What Do Passengers Actually Know?

DigiYatra is technically opt-in: passengers choose to register. But “opt-in” is meaningful only if passengers have full information about what they are consenting to.

Several transparency concerns have been raised by researchers and civil liberties organisations:

- Data retention: The DigiYatra Foundation initially stated that facial data would not be stored after a flight — it would be deleted from the system within 24 hours. However, the registration data held centrally (the face embedding linked to Aadhaar details) persists. The distinction between “transaction data” and “registration data” is not obvious to a typical passenger.

- Third-party access: The terms of service specify what DigiYatra Foundation can do with data, but the system involves multiple vendors and airport operators. Whether the same restrictions apply uniformly to all entities in the chain is not publicly documented.

- Secondary law enforcement use: India does not currently have a law explicitly governing when and how law enforcement can access facial recognition data collected for civil purposes. This gap means passengers have no guaranteed protection against their airport face scan being used for purposes unrelated to boarding.

The government has not published an algorithmic audit of DigiYatra’s accuracy rates — broken down by demographic group — for public review.
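To see what a demographic breakdown of accuracy would involve, here is a minimal sketch of the core calculation: the false rejection rate computed separately for each group. The group labels and records below are entirely invented for illustration. No such figures have been published for DigiYatra.

```python
from collections import defaultdict


def false_rejection_rate_by_group(attempts):
    """attempts: list of (group, was_rejected) pairs for GENUINE passengers only."""
    totals = defaultdict(int)
    rejections = defaultdict(int)
    for group, was_rejected in attempts:
        totals[group] += 1
        if was_rejected:
            rejections[group] += 1
    return {group: rejections[group] / totals[group] for group in totals}


# Invented records: every attempt here is by a genuine, registered passenger.
attempts = [
    ("group_A", False), ("group_A", False), ("group_A", True),
    ("group_A", False), ("group_A", False),
    ("group_B", True), ("group_B", False), ("group_B", True),
    ("group_B", False), ("group_B", False),
]

for group, frr in false_rejection_rate_by_group(attempts).items():
    print(f"{group}: false rejection rate = {frr:.2f}")
```

A single overall error rate would hide the gap between the two groups in this toy data. Reporting the rate per group is what makes unequal accuracy visible.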

Accountability — Who Answers When It Gets It Wrong?

DigiYatra introduces a specific accountability challenge: distributed responsibility.

When a facial recognition system falsely rejects a passenger at a boarding gate, the chain of potential responsibility includes: the camera hardware manufacturer, the ML model developer, the DigiYatra Foundation (system operator), the specific airport operator, the airline, and the BCAS (regulatory approver). No single entity clearly owns the outcome.

India’s Digital Personal Data Protection Act, 2023 (DPDPA) makes DigiYatra Foundation a data fiduciary, responsible for protecting the biometric data it holds. If a data breach occurs, the Foundation must notify the Data Protection Board. However, the DPDPA does not currently mandate algorithmic impact assessments, so there is no legal requirement to test and publish facial recognition accuracy before deployment.

The Right to Information (RTI) Act theoretically allows citizens to request information about government-adjacent technology deployments. In practice, RTI requests to DigiYatra Foundation have not yielded detailed technical specifications or algorithmic transparency.

A Deeper Question: Opt-In Today, Mandatory Tomorrow?

DigiYatra is currently voluntary. This is the most common response to privacy concerns about facial recognition — “participation is your choice.”

But this framing deserves scrutiny. When an opt-in system is deployed at 24+ airports, with dedicated lanes for DigiYatra passengers and slower manual processing for non-participants, the practical cost of opting out rises steadily. If DigiYatra passengers board in 20 minutes and non-participants wait 50 minutes, the system is de facto mandatory for frequent travellers.

This pattern — voluntary in name, effectively compulsory in practice — has been observed in the expansion of Aadhaar-linked services, CoWIN registration, and FASTag on highways. It is not unique to India, but it is a well-documented feature of large-scale government digital infrastructure.

CBSE’s ethics curriculum asks you to think beyond what a system does today, to what it may become once infrastructure is in place. DigiYatra is a useful case for that exercise.

Quick Revision Box

| Term | One-Line Definition |
|---|---|
| Facial recognition | An AI process that identifies a person by analysing and comparing their facial features |
| Face embedding | A numerical vector (list of numbers) that represents the unique features of a face |
| False rejection (False Negative) | When a genuine user is incorrectly identified as not matching; the system denies them access wrongly |
| False acceptance (False Positive) | When an impostor is incorrectly identified as a match; the system grants access to the wrong person |
| Biometric data | Data derived from physical characteristics (face, fingerprint, iris) that uniquely identifies a person |

Practice Questions

2-mark question: DigiYatra uses facial recognition to match passengers at airport boarding gates. Identify one example of bias this system may exhibit and explain how it could affect a specific group of passengers.

Model answer: DigiYatra may exhibit skin tone bias — facial recognition models trained on datasets that underrepresent darker-skinned individuals tend to have higher false rejection rates for those passengers. In practice, this means a darker-skinned passenger has a statistically higher probability of being incorrectly told at the boarding gate that their face does not match their registration, forcing them to seek manual verification. The burden of the system’s error falls on the passenger, not the operator.

MCQ: DigiYatra’s facial recognition system incorrectly rejects a genuine passenger at the boarding gate. In evaluation terminology, this error is called a:

(a) True positive (b) False positive (c) False negative (d) True negative

Answer: (c) False negative — the system should have detected a genuine match (positive case) but instead classified it as a non-match.

Frequently Asked Questions

Q1. Is DigiYatra the same as CCTV surveillance at airports?

No, though both use cameras. CCTV records footage for retrospective review — you are not typically identified in real time by name or credential. DigiYatra is an active biometric identification system: it processes your face in real time, compares it against a database, and makes an access decision. The key difference is real-time identification and the linkage to personal records (Aadhaar, booking details). This distinction — surveillance versus active identification — is important in both legal and ethical analysis.

Q2. Does the CBSE syllabus specifically mention DigiYatra or facial recognition?

DigiYatra is not named in the CBSE AI syllabus PDFs. However, computer vision, facial recognition as a CV application, ethical analysis of AI systems, and the CBSE four-frame ethics approach (stakeholders, bias, transparency, accountability) are all syllabus topics. DigiYatra is an India-specific case study that lets you demonstrate syllabus knowledge using a contemporary real-world example — which is exactly what CBSE exam questions increasingly ask for.

Q3. Should I argue that DigiYatra is good or bad in an exam answer?

Neither. CBSE ethics questions ask for analysis, not advocacy. A strong exam answer presents the system’s stakeholders and benefits accurately, then identifies specific bias risks, transparency gaps, and accountability weaknesses using precise terminology. The goal is to demonstrate that you can apply the framework rigorously to a real case — not that you support or oppose the technology.