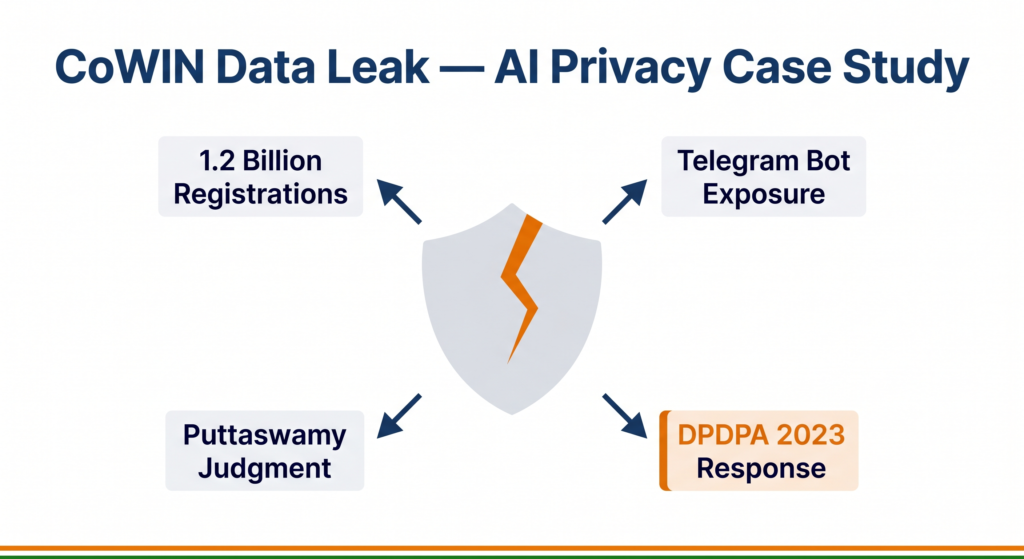

Hundreds of millions of Indians shared their personal health data to get vaccinated. In June 2023, some of that data was found to be retrievable through a Telegram bot, searchable by mobile number.

What you’ll learn:

- What happened in the CoWIN data leak and how AI systems were involved

- How to apply the CBSE ethics framework to a real health data breach

- How India’s 2023 data protection law addresses cases like this one

What Was CoWIN?

Co-WIN (COVID Vaccine Intelligence Network) was the digital platform built by the Ministry of Health and Family Welfare and the National Informatics Centre (NIC) to manage India’s COVID-19 vaccination drive. At its peak, it handled registrations, slot bookings, and certificate generation for over one billion vaccination doses.

To use CoWIN, citizens provided: name, date of birth, mobile number, Aadhaar or passport number, and vaccination centre details. This data was stored centrally and used to generate digital vaccination certificates — the QR-coded documents that became required for travel, employment, and entry into public spaces.

For CBSE AI students, CoWIN is significant because it is one of the largest government digital health data systems ever deployed, with AI and ML tools used in adjacent systems. Its security and privacy failures are equally large-scale, and they are directly relevant to the ethics framework your syllabus requires you to apply.

What Happened: The 2023 Data Leak

In June 2023, cybersecurity researchers and journalists reported that a Telegram bot was responding to queries with detailed personal records from what appeared to be the CoWIN database. A person’s mobile number could be entered into the bot, and the bot would return their name, date of birth, Aadhaar number, passport number, and vaccination centre details.

The volume of data was significant — reports indicated millions of records may have been accessible.

The government’s initial response was that the CoWIN database itself had not been directly breached. CERT-In (Indian Computer Emergency Response Team) investigated and stated that the data appeared to have come not from CoWIN’s central servers but from a third-party database that had previously accessed CoWIN data, possibly through compromised login credentials belonging to healthcare workers.

The Telegram bot was taken down following the disclosure. The full scope of the breach — how many records were accessed, who accessed them, and whether the data was further distributed — was never publicly confirmed.

The CBSE Ethics Framework Applied to CoWIN

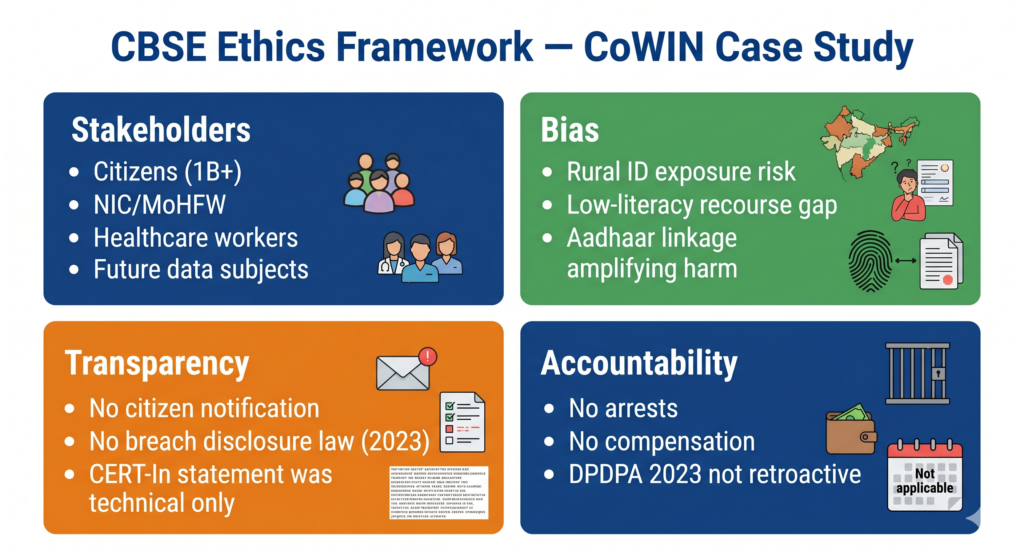

Stakeholders — Who Was Affected?

The CoWIN breach affected multiple groups with very different levels of power:

- Vaccinated citizens: over one billion registrations meant virtually every adult Indian was potentially a data subject. They had no meaningful choice, because vaccination required CoWIN registration. Their government identifiers (Aadhaar or passport number), health information (vaccination status), and location data (which centre they visited) were all reportedly exposed.

- Vulnerable populations: people in domestic abuse situations whose locations could be inferred from vaccination centre data, and individuals whose HIV status, mental health conditions, or other sensitive information could be cross-referenced from related health records.

- Healthcare workers — whose login credentials may have been the initial point of compromise, making them both victims of the breach and, in a narrow technical sense, the vector through which it occurred.

- NIC and Ministry of Health — government entities responsible for the platform’s security architecture.

- Future data subjects — citizens who would share health data with government systems in future. The breach eroded trust in digital health infrastructure at precisely the moment India was trying to build its Ayushman Bharat Digital Mission (ABDM).

📌 Class 10 callout: Notice how the stakeholder analysis reveals that the people most harmed — ordinary citizens — had the least power. They could not opt out of CoWIN and had no direct recourse when their data was exposed. This is a recurring pattern in large-scale government AI systems.

Bias — Who Bears the Most Risk?

Data bias in the context of privacy breaches operates differently from predictive model bias. The question is not whether the system made biased predictions — it is whether the consequences of the breach fell unequally on different groups.

They did:

- Geographic bias in risk: Rural citizens who registered at local primary health centres often used ration card numbers or voter ID numbers as supplementary identifiers — data that, when combined with a name and village, makes a person uniquely and permanently identifiable in their community in ways that city-dwellers with pseudonymous digital identities are not.

- Literacy and recourse gap: A technically literate urban professional could, in theory, take steps to monitor whether their data was misused. A first-generation mobile user in a semi-urban area who registered for vaccination had no awareness that their data was being aggregated, no knowledge that it had been exposed, and no practical pathway to seek redress.

- Aadhaar linkage amplifying risk: Because many CoWIN registrations used an Aadhaar number as identity proof, the exposure of CoWIN data created a bridge to the broader Aadhaar-linked ecosystem of bank accounts, PAN cards, and benefits payments. The breach was not isolated to vaccination records; it was a node in a larger data graph.
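The "larger data graph" idea can be made concrete with a minimal sketch. It assumes three separate datasets that happen to share one identifier column; every record, field name, and the helper `link_by_identifier` are hypothetical and invented for illustration, not taken from CoWIN or any real system.

```python
# Hypothetical, made-up records: none of these values are real.
vaccination_records = [
    {"id_number": "XXXX-0001", "name": "Asha", "centre": "PHC Ward 4"},
    {"id_number": "XXXX-0002", "name": "Ravi", "centre": "District Hospital"},
]
bank_records = [
    {"id_number": "XXXX-0001", "bank": "Example Bank", "branch": "Main Road"},
]
benefit_records = [
    {"id_number": "XXXX-0001", "scheme": "Example pension scheme"},
    {"id_number": "XXXX-0002", "scheme": "Example ration scheme"},
]


def link_by_identifier(*datasets):
    """Merge records from several datasets that share the same id_number."""
    profiles = {}
    for dataset in datasets:
        for record in dataset:
            # One shared key is enough to fold separate records into one profile.
            profile = profiles.setdefault(record["id_number"], {})
            profile.update(record)
    return profiles


profiles = link_by_identifier(vaccination_records, bank_records, benefit_records)
for id_number, profile in profiles.items():
    print(id_number, "->", sorted(profile.keys()))
```

The point of the sketch is that no single dataset needs to be very sensitive on its own. Once one identifier leaks, each additional dataset keyed on it adds detail to the same person's profile.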

Transparency — Was the Public Informed?

The government’s communication after the breach was limited and delayed. No formal notification was sent to affected citizens. CERT-In’s statement focused on technical attribution (“the CoWIN database was not directly breached”) rather than explaining what data had been exposed and what risks citizens faced.

India did not, at the time of the breach, have a comprehensive data protection law. The Personal Data Protection Bill had been withdrawn from Parliament in August 2022, and its replacement, the Digital Personal Data Protection Act (DPDPA), 2023, was passed in August 2023, about two months after the breach was reported. Without a breach notification requirement in force, there was no legal obligation to inform citizens that their data had been compromised.

The transparency failure here was partly a legal gap and partly the result of an institutional culture that prioritised managing reputation over informing the public.

Accountability — Who Was Held Responsible?

This is where the CoWIN case is most instructive — and most troubling.

No individual was arrested or charged in connection with the data breach. No government official faced formal accountability. NIC, which built and operated CoWIN, was not audited publicly. No compensation was offered to affected citizens.

The Telegram bot was taken down. The data, once circulated, could not be recalled.

The DPDPA 2023, passed about two months after the breach was reported, established a Data Protection Board of India and created legal obligations for data fiduciaries, the organisations that collect and process personal data. A draft of the law was already in circulation before the breach, so it is better read as a response to cases like CoWIN than to this single incident. Under the DPDPA, a breach of this scale would now trigger mandatory notification to the Board and to each affected individual. Financial penalties are possible.

However, the law does not apply retroactively. Citizens whose CoWIN data was exposed in 2023 have no legal remedy under the DPDPA.

📌 Class 11/12 callout: The Puttaswamy judgment (Justice K.S. Puttaswamy vs Union of India, 2017) established that privacy is a fundamental right under the Indian Constitution. The CoWIN breach, and the inadequate government response to it, is one of the clearest post-Puttaswamy examples of what the absence of effective enforcement of that right looks like in practice.

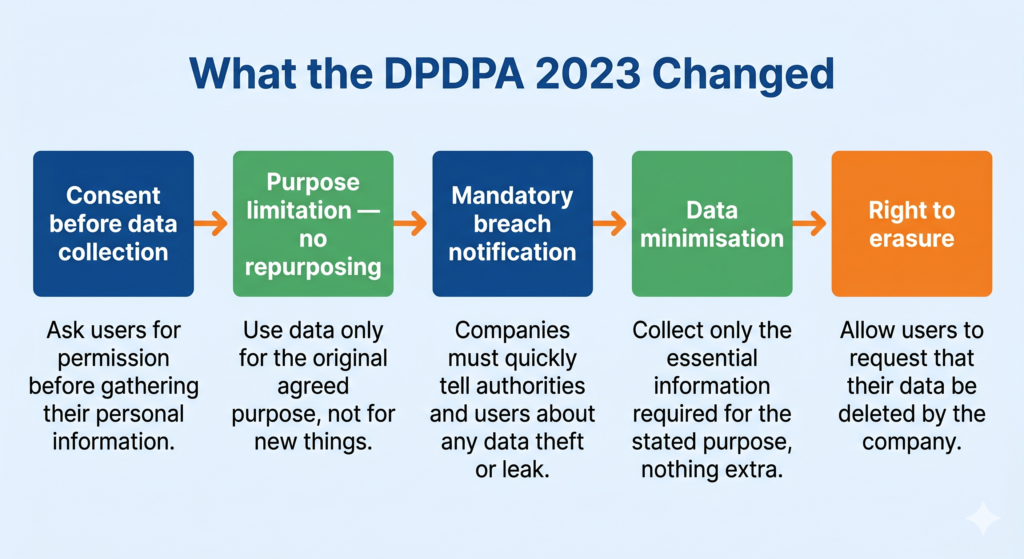

What Changed After CoWIN: The DPDPA 2023

The Digital Personal Data Protection Act, 2023 introduced several protections directly relevant to cases like CoWIN:

- Consent before collection: Organisations must obtain clear, informed consent before collecting personal data.

- Purpose limitation: Data collected for one purpose (vaccination) cannot be used for another without fresh consent.

- Breach notification: Data fiduciaries must notify the Data Protection Board of India and each affected individual promptly after becoming aware of a personal data breach.

- Data minimisation: Only the data strictly necessary for the stated purpose can be collected.

- Right to erasure: Citizens can request deletion of their data after the purpose for which it was collected has been fulfilled.

Whether these provisions would have prevented the CoWIN breach is debatable — the exposure may have originated in compromised third-party credentials rather than a policy failure. But they would have required faster, more transparent communication with affected citizens and created a pathway to accountability that did not exist in 2023.
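Two of the principles listed above, data minimisation and purpose limitation, lend themselves to a small illustrative sketch. The field list `NECESSARY_FIELDS`, the `ConsentRecord` class, and both helper functions are assumptions made for this example; they are not drawn from the Act's text or from any real system, and they are not a legal model.

```python
from dataclasses import dataclass, field

# Hypothetical list of fields a vaccination service might treat as necessary.
NECESSARY_FIELDS = {"name", "date_of_birth", "mobile_number", "id_number"}


@dataclass
class ConsentRecord:
    """Purposes a person has agreed to (illustrative only)."""
    person_id: str
    purposes: set = field(default_factory=set)


def minimise(submitted: dict) -> dict:
    """Data minimisation: keep only the fields needed for the stated purpose."""
    return {k: v for k, v in submitted.items() if k in NECESSARY_FIELDS}


def may_use(consent: ConsentRecord, purpose: str) -> bool:
    """Purpose limitation: allow a use only if the person consented to it."""
    return purpose in consent.purposes


# Made-up example data.
consent = ConsentRecord(person_id="P-001", purposes={"vaccination"})
form = {
    "name": "Asha",
    "date_of_birth": "1990-01-01",
    "mobile_number": "0000000000",
    "id_number": "XXXX-0001",
    "employer": "Example Ltd",  # not needed for vaccination, so it is dropped
}

stored = minimise(form)
print(sorted(stored))
print(may_use(consent, "vaccination"))
print(may_use(consent, "targeted_advertising"))
```

In this sketch the extra `employer` field is never stored, and a request to reuse the data for advertising is refused because the person only consented to vaccination. A real system would also need to record when consent was given and allow it to be withdrawn.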

Quick Revision Box

| Term | One-Line Definition |
|---|---|
| Data breach | Unauthorised access to or disclosure of personal data |
| Consent | Freely given, informed agreement to the collection and use of personal data |
| Purpose limitation | The principle that data collected for one specific reason must not be used for any other reason |
| DPDPA 2023 | India’s Digital Personal Data Protection Act, the law governing how organisations collect, use, and protect personal data |
| Data fiduciary | Any organisation that decides how and why personal data is processed; responsible for protecting it |

Practice Questions

2-mark question: Identify two stakeholders in the CoWIN data breach and explain one specific risk each stakeholder faced.

Model answer: First stakeholder — vaccinated citizens who were required to register on CoWIN to receive their vaccination. Their risk was that sensitive personal data including Aadhaar numbers and health records was potentially exposed to unknown third parties without their knowledge or consent. Second stakeholder — the National Informatics Centre (NIC), which built and operated CoWIN. Its risk was reputational and legal: if found to have failed its duty to protect citizen data, it faced public accountability and potential scrutiny from Parliament and oversight bodies.

MCQ: Under India’s DPDPA 2023, if an organisation collects personal data for vaccination registration, that data should not be used for another purpose such as targeted advertising. This principle is known as:

(a) Data minimisation (b) Breach notification (c) Purpose limitation (d) Right to erasure

Answer: (c) Purpose limitation — data collected for a stated purpose must not be repurposed without fresh consent.

Frequently Asked Questions

Q1. Was CoWIN itself an AI system?

CoWIN was primarily a digital registration and logistics platform rather than an AI decision-making system. However, AI and ML tools were used in adjacent systems — for instance, to detect fraudulent vaccination certificate generation, to optimise vaccine supply chain distribution, and to analyse uptake patterns for public health planning. The ethical analysis of CoWIN applies to any large-scale digital health data system, whether or not the core platform uses ML.

Q2. Does the Puttaswamy judgment apply to data breaches by private companies as well as the government?

The Puttaswamy judgment established privacy as a fundamental right under the Indian Constitution, which primarily governs the relationship between citizens and the state. However, the Supreme Court’s reasoning made clear that privacy has both a vertical dimension (protection from the state) and a horizontal dimension (expectation of privacy in one’s relationships and data more broadly). The DPDPA 2023 explicitly extends data protection obligations to private organisations, filling the gap the Puttaswamy judgment identified but could not itself resolve.

Q3. How should a student answer a CBSE exam question asking to “analyse” an AI ethics case?

Always structure your answer using the four-frame approach: identify the stakeholders (who is affected and how), examine potential bias (who bears disproportionate harm), assess transparency (was the public informed and could they understand the system), and evaluate accountability (was anyone held responsible and through what mechanism). A four-frame answer that uses specific facts — names, dates, laws — will score significantly better than a generic ethical opinion.