Your CBSE AI exam will not just ask you to define “bias” or “transparency.” It will give you a real system and ask you to analyse it. Aadhaar — the world’s largest biometric identity programme — is exactly the kind of case your examiner has in mind.

What You Will Learn

- How AI is used inside Aadhaar and what ethical questions it raises

- How to apply the CBSE 4-frame ethics analysis to any real system

- How India’s Supreme Court ruled on Aadhaar, identity, and the right to privacy

What Is Aadhaar and Where Does AI Fit In?

Aadhaar is a 12-digit unique identity number issued by the Unique Identification Authority of India (UIDAI) to every resident of India. As of 2024, over 1.38 billion Aadhaar numbers have been issued — making it the largest biometric database in the world.

The system does not simply store a number. It stores your fingerprints (all ten), iris scans (both eyes), and a photograph. When you authenticate — say, at a ration shop, a bank, or a government office — an AI-powered biometric matching system compares your live scan against the stored record and returns a yes or no decision in under a second.

That decision — made by a machine — determines whether you receive food, benefits, or services.

This is where AI ethics enters the picture.

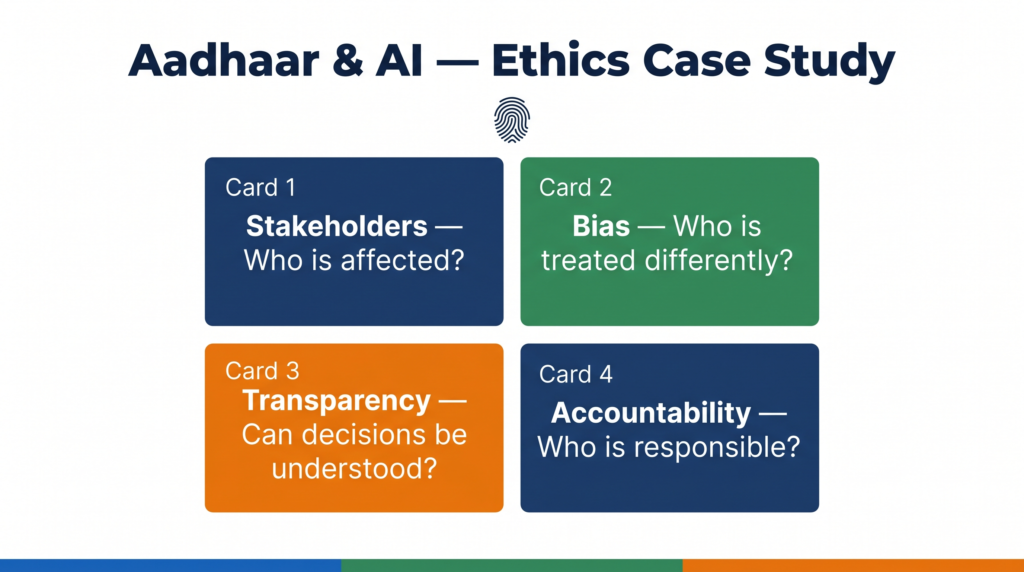

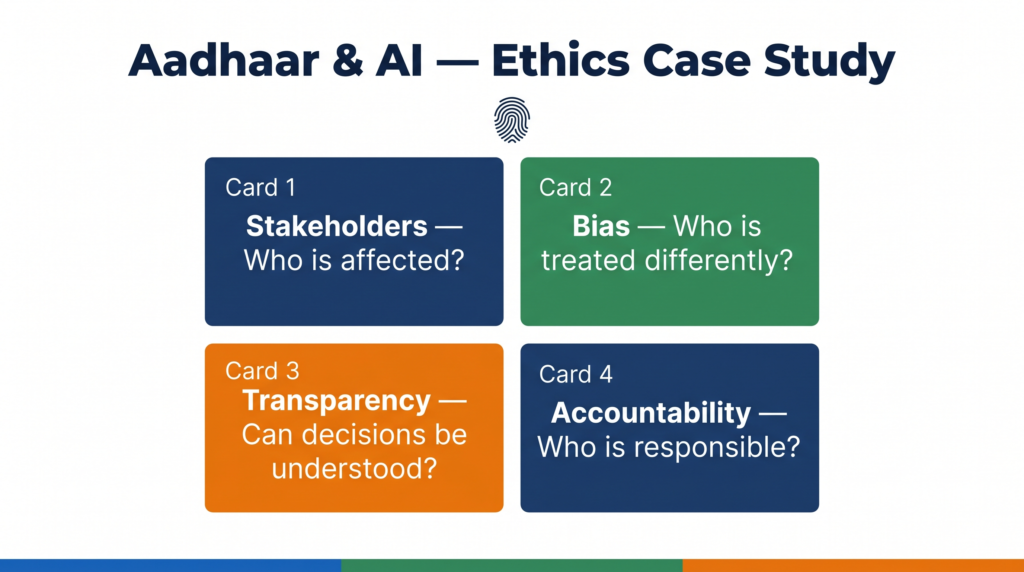

The CBSE 4-Frame Ethics Analysis

CBSE expects you to evaluate any AI system across four dimensions. Here is how each one applies to Aadhaar.

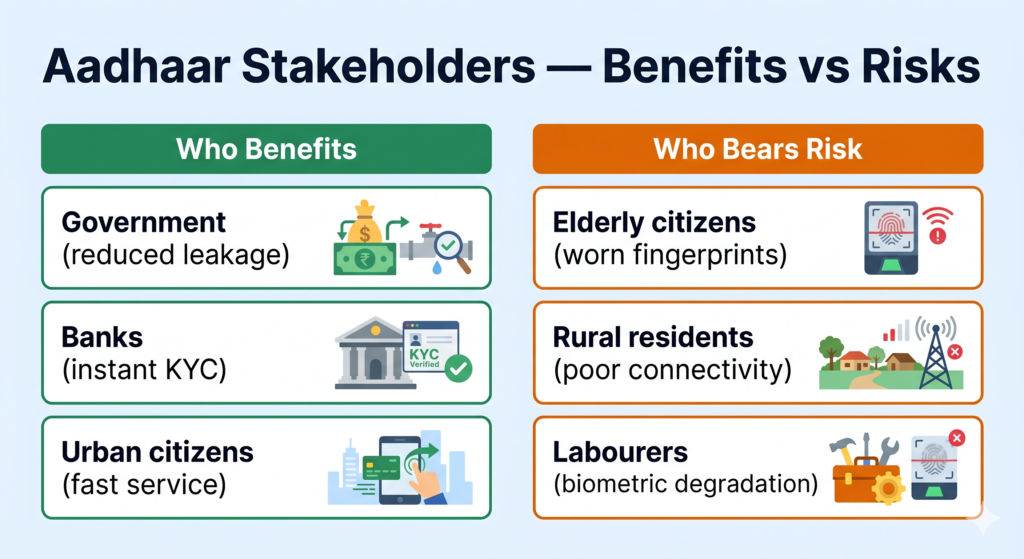

Frame 1 — Stakeholders: Who Is Affected?

A stakeholder is anyone who is affected by the system, whether or not they chose to be part of it.

Who benefits from Aadhaar AI?

- The Government of India — reduced subsidy leakages, faster welfare delivery

- Banks and telecom companies — instant KYC verification

- Urban, educated citizens with reliable internet and clean biometric records

Who bears the risks?

- Elderly citizens and manual labourers whose fingerprints have worn down from years of physical work — their biometrics may not match reliably

- People in rural areas with poor internet connectivity, where authentication failures have real consequences

- Individuals who were incorrectly de-duplicated or had their Aadhaar suspended due to algorithmic errors

The critical question CBSE wants you to ask is: are the people who bear the highest risk the same people who have the least power to challenge the system? In Aadhaar’s case, the answer is often yes.

Frame 2 — Bias: Does the System Treat Everyone Equally?

Bias in an AI system means it performs differently for different groups of people. Often this is not the result of a deliberate decision but of patterns in the data the system was trained on, or of how the system was designed.

UIDAI’s own data has shown that biometric authentication failure rates are higher for:

- People over 60 years of age

- Agricultural workers, construction workers, and domestic workers (worn fingerprints)

- Women in some communities (lighter fingerprint ridges)

This is a form of demographic bias — the system works well for the people who are easiest to enrol, and works less well for people who need it most.

An important distinction for your exam: this bias is not intentional. UIDAI did not design the system to exclude elderly citizens. But a system can be discriminatory in its effect even when it is neutral in its intent. This is what AI ethics asks you to examine.
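One way to see how a system can be neutral in intent yet unequal in effect is a small sketch of threshold-based matching. The code below is an illustration only: the threshold value, the group labels, and the scores are invented for this example and do not describe UIDAI's actual algorithm or data. It applies the same cut-off to everyone and then measures how often genuine users in each group are rejected.

```python
from dataclasses import dataclass


@dataclass
class Attempt:
    group: str          # hypothetical demographic label
    match_score: float  # similarity of live scan to stored record, 0.0 to 1.0


THRESHOLD = 0.80  # assumed cut-off, chosen only for this illustration


def is_match(score: float, threshold: float = THRESHOLD) -> bool:
    """Accept the attempt when the similarity score reaches the threshold."""
    return score >= threshold


def rejection_rate(attempts: list[Attempt], group: str) -> float:
    """Share of genuine attempts from one group that the matcher rejects."""
    scores = [a.match_score for a in attempts if a.group == group]
    if not scores:
        raise ValueError(f"no attempts recorded for group {group!r}")
    rejected = sum(1 for s in scores if not is_match(s))
    return rejected / len(scores)


# Invented scores. Every attempt here is by a genuine user, so any
# rejection is a false rejection.
attempts = [
    Attempt("office_worker", 0.95), Attempt("office_worker", 0.91),
    Attempt("office_worker", 0.88), Attempt("office_worker", 0.84),
    Attempt("manual_labourer", 0.86), Attempt("manual_labourer", 0.79),
    Attempt("manual_labourer", 0.74), Attempt("manual_labourer", 0.82),
]

for group in ("office_worker", "manual_labourer"):
    print(group, rejection_rate(attempts, group))
```

In this invented data no office worker is rejected, while half of the manual labourers are, even though the rule is identical for both groups. Worn fingerprints produce lower scores, so a single fixed threshold falls hardest on them.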

Frame 3 — Transparency: Can You Understand How the Decision Was Made?

Transparency in AI means that the people affected by a system’s decisions can understand, to a reasonable degree, how those decisions are made.

Aadhaar’s authentication is a black box for most citizens. When a biometric match fails, the system returns an error code. It does not explain why. It does not tell you whether your fingerprint was unclear, whether the scanner was faulty, or whether your record had been flagged. The citizen has no way to know.

For government welfare systems, this creates a serious problem. A farmer denied rations at a fair-price shop cannot challenge the decision because he cannot see the reason. The accountability loop is broken.

CBSE sample papers have asked questions framed exactly this way: “A farmer is denied food grains because Aadhaar authentication fails. Who is responsible? What should the system do differently?” The answer requires you to connect transparency to accountability — which is Frame 4.

Frame 4 — Accountability: When Something Goes Wrong, Who Is Responsible?

Accountability means that when an AI system causes harm, there is a clear mechanism to identify who is responsible and to fix the problem.

Aadhaar presents a multi-layered accountability challenge:

- UIDAI is responsible for the biometric database and authentication infrastructure

- The government department is responsible for the welfare scheme

- The local fair-price shop owner operates the point-of-sale scanner

- The private technology vendor supplied the matching algorithm

When a genuine beneficiary is denied food because of a biometric failure, each of these actors can point to another. UIDAI says the scanner was faulty. The department says authentication is UIDAI’s responsibility. The shop owner says he cannot override the system.

This is a real consequence of deploying AI in high-stakes government services without a clear grievance redressal mechanism. Right-to-food activists and journalists have reported starvation deaths in states such as Jharkhand and Rajasthan that they linked to Aadhaar authentication failures, although state governments disputed some of these accounts. These reports were part of the debate around the Supreme Court’s Aadhaar judgment in 2018.

The Puttaswamy Judgment — When India’s Supreme Court Ruled on AI and Privacy

In August 2017, a nine-judge bench of India’s Supreme Court delivered a landmark ruling in Justice K.S. Puttaswamy vs Union of India. The court held unanimously that the right to privacy is a fundamental right under the Indian Constitution.

The privacy question reached the court through legal challenges to Aadhaar. The government had argued that privacy is not a fundamental right, and that poor citizens need subsidies more than they need privacy. The Supreme Court rejected this argument completely.

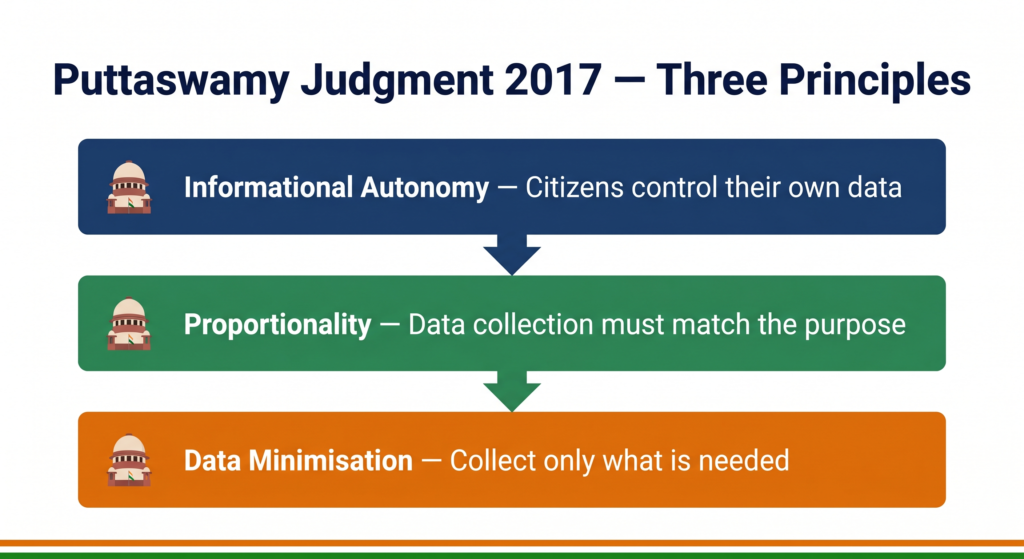

The ruling established three principles that matter for AI ethics:

Principle 1: Informational autonomy. Citizens have the right to control information about themselves. A biometric database of 1.38 billion people, linked to bank accounts, mobile numbers, tax records, and welfare entitlements, creates a surveillance infrastructure that no democratic government should have unchecked access to.

Principle 2: Proportionality. Even where data collection is necessary, it must be proportionate to the purpose. Collecting ten fingerprints and iris scans to verify identity for a ration card may not be proportionate when simpler forms of verification could work.

Principle 3: Data minimisation. Collect only what you need, store it only as long as necessary, and use it only for the stated purpose. Aadhaar’s mission creep, from welfare delivery to mandatory SIM card linking, school enrolment, and private sector KYC, ran against this principle. In its separate Aadhaar judgment of September 2018, the court struck down several of these mandatory uses while upholding Aadhaar for welfare schemes.

For your exam, the Puttaswamy case is the clearest example India has of a court applying ethical principles to an AI-powered government system. It is not just a legal case — it is an AI governance case.

What Could Have Been Done Differently?

This is a common CBSE question format: “Suggest two improvements to make this system more ethical.”

For Aadhaar, three directions are well-supported:

Build in a manual override from the start. No AI system is 100% accurate. Any authentication system used for welfare delivery should have a documented fallback — a process by which a genuine beneficiary can receive services even when biometric authentication fails. Several states introduced paper-based override systems only after deaths were reported. Ethics-by-design would have built this in before deployment.
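A documented fallback can be sketched in a few lines. The example below is a minimal illustration, not a description of any real UIDAI or state-government process: the function name `authenticate_with_fallback`, the retry count, and the outcome labels are all assumptions made for this sketch. The point it shows is that the override path exists in the design from the first day, and that every decision carries a recorded reason.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Outcome(Enum):
    BIOMETRIC_OK = "biometric_ok"
    MANUAL_OVERRIDE = "manual_override"
    DENIED = "denied"


@dataclass
class Decision:
    outcome: Outcome
    reason: str         # human-readable explanation kept for audit and appeal
    attempts_used: int  # number of biometric attempts made


def authenticate_with_fallback(
    biometric_check: Callable[[], bool],
    manual_check: Callable[[], bool],
    max_attempts: int = 3,  # assumed retry limit
) -> Decision:
    """Try biometrics first, then fall back to a documented manual check."""
    for attempt in range(1, max_attempts + 1):
        if biometric_check():
            return Decision(Outcome.BIOMETRIC_OK, "biometric match", attempt)

    # Biometrics failed every time: use the manual route instead of refusing.
    if manual_check():
        return Decision(
            Outcome.MANUAL_OVERRIDE,
            "biometric failed; identity confirmed by manual check",
            max_attempts,
        )
    return Decision(
        Outcome.DENIED,
        "biometric and manual checks failed; refer to grievance officer",
        max_attempts,
    )


# Example: a scanner that never matches, and a shop owner who verifies
# the beneficiary against a paper ration card.
decision = authenticate_with_fallback(lambda: False, lambda: True)
print(decision.outcome.value, "-", decision.reason)
```

Because the `reason` field is always filled in, the same record that lets the beneficiary receive rations also gives an auditor something to review later.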

Publish disaggregated error rates. UIDAI should publicly report authentication failure rates broken down by age group, gender, occupation, and geography. If, to take illustrative figures, elderly agricultural workers have a 15% failure rate and urban professionals have a 0.3% failure rate, that information should be visible. Transparency at the system level, not just the individual transaction level, is a form of accountability.
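Disaggregated reporting is simple to compute once authentication logs carry group labels. The sketch below assumes a hypothetical log format (a list of dictionaries with `age_group`, `occupation`, and `success` fields), and the records are invented for illustration; none of it reflects real UIDAI data.

```python
from collections import defaultdict


def failure_rates_by_group(records: list[dict], key: str) -> dict[str, float]:
    """Return the authentication failure rate for each value of `key`."""
    totals: dict[str, int] = defaultdict(int)
    failures: dict[str, int] = defaultdict(int)
    for record in records:
        group = record[key]
        totals[group] += 1
        if not record["success"]:
            failures[group] += 1
    return {group: failures[group] / totals[group] for group in totals}


# Invented log entries, one per authentication attempt.
records = [
    {"age_group": "60+", "occupation": "agriculture", "success": False},
    {"age_group": "60+", "occupation": "agriculture", "success": False},
    {"age_group": "60+", "occupation": "office", "success": True},
    {"age_group": "60+", "occupation": "agriculture", "success": True},
    {"age_group": "18-59", "occupation": "office", "success": True},
    {"age_group": "18-59", "occupation": "office", "success": True},
    {"age_group": "18-59", "occupation": "agriculture", "success": False},
    {"age_group": "18-59", "occupation": "office", "success": True},
]

print(failure_rates_by_group(records, "age_group"))
print(failure_rates_by_group(records, "occupation"))
```

The overall failure rate in this sample is 3 out of 8, which hides the fact that every failure comes from agricultural workers. That gap only becomes visible when the figures are broken down by group.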

Separate the database from surveillance. The Puttaswamy ruling identified a legitimate concern: when a single number links your ration card, mobile number, bank account, income tax filing, and school enrolment, the state can track your movements and behaviour across every domain of life. Privacy-preserving AI design — using anonymised tokens for each use case rather than a single universal identifier — would provide the same verification benefits without creating a surveillance architecture.
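The idea of a separate token for each use case can be sketched with a keyed hash. This is an illustration under stated assumptions, not a description of how UIDAI works: the function `domain_token`, the domain names, and the placeholder key are hypothetical. Strictly speaking the tokens are pseudonymous rather than anonymous, because whoever holds the secret key can still recompute them.

```python
import hashlib
import hmac


def domain_token(identity_number: str, domain: str, secret_key: bytes) -> str:
    """Derive a stable token for one person within one service domain.

    The same person gets the same token inside a domain, so the service
    can recognise a returning user, but tokens from different domains
    cannot be matched to each other without the secret key.
    """
    message = f"{domain}:{identity_number}".encode("utf-8")
    return hmac.new(secret_key, message, hashlib.sha256).hexdigest()


# Placeholder key and identity number, for illustration only.
secret = b"demo-key-held-only-by-the-identity-authority"
ration_token = domain_token("123456789012", "ration", secret)
bank_token = domain_token("123456789012", "bank", secret)

print(ration_token != bank_token)  # different domains give different tokens
```

With this design the ration shop and the bank each hold a different identifier for the same person, so combining their records requires the cooperation of whoever holds the key. That is a point where legal safeguards can be applied.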

Quick Revision Box

| Term | Meaning |
|---|---|
| Biometric authentication | Using physical characteristics (fingerprint, iris) to verify identity |
| Demographic bias | When an AI system performs differently for different groups of people |
| Transparency | The ability to understand how an AI system makes its decisions |
| Accountability | A clear mechanism to identify responsibility when AI causes harm |
| Right to privacy | Fundamental right upheld in the Puttaswamy judgment (2017) |

Practice Questions

2-mark question: Name two groups of people who face higher authentication failure rates in Aadhaar and give one reason why.

Model answer: Elderly citizens (reduced fingerprint clarity with age) and manual labourers such as agricultural workers (fingerprints worn down by physical work). In both cases, the biometric data degrades in ways the AI matching system was not designed to handle reliably.

MCQ: The Puttaswamy judgment (2017) established that:

(a) Aadhaar is unconstitutional and must be shut down

(b) The right to privacy is a fundamental right under the Indian Constitution

(c) Biometric data collection is not permitted in India

(d) AI systems must be open-source to be transparent

Answer: (b). The Supreme Court held that the right to privacy is a fundamental right. The 2017 judgment did not itself decide whether Aadhaar was valid; a separate judgment in September 2018 allowed a modified form of Aadhaar to continue with restrictions.

Frequently Asked Questions

Q1. Is Aadhaar’s AI system biased on purpose?

No — and this is an important distinction your examiner will reward you for making. Aadhaar’s authentication system was not designed to exclude elderly or working-class citizens. The bias is an emergent problem: the system was designed and tested on data that did not adequately represent people with worn or unclear fingerprints. When it was deployed at scale, the failure rates for these groups became visible. Intentional bias and emergent bias are both ethical problems — but they require different solutions. Intentional bias requires policy change. Emergent bias requires redesign and ongoing monitoring.

Q2. If authentication fails, can a citizen appeal the decision?

This is precisely the accountability gap the Puttaswamy case and subsequent Supreme Court orders addressed. Prior to the 2018 ruling, there was no standard grievance mechanism. Following court directions and public pressure, UIDAI introduced an exception-handling process and the government allowed alternative identification for welfare delivery in some states. However, implementation remains inconsistent across India. The ethical lesson is that any AI system used in high-stakes decisions must have a built-in appeal and override mechanism before deployment — not added as an afterthought after harm occurs.

Q3. Does studying Aadhaar ethics matter for the CBSE AI exam?

Directly, yes. CBSE 2025-26 sample papers across Classes 9, 10, and 11 include case-based ethics questions that describe a real system and ask you to identify stakeholders, name a potential bias, or suggest an improvement. Aadhaar is the most likely India-specific case to appear because it combines biometric AI, government scale, data privacy, and a documented Supreme Court ruling — all of which map directly onto the AI ethics topics in your syllabus. Understanding this case gives you a ready framework you can apply to any system the examiner describes.