Every time a UPI transaction is blocked as suspicious, the decision was most likely made by an AI model, and in a small percentage of cases that model is wrong. Understanding who bears the cost of that error is what CBSE AI ethics is really asking you to examine.

What You Will Learn

- How AI is used in UPI fraud detection and what decisions it makes automatically

- How to apply the CBSE 4-frame ethics analysis to a payment system

- What happens when a fraud detection model has a high false positive rate

How UPI Works and Where AI Enters

Unified Payments Interface (UPI) is India’s real-time payment system, built and managed by the National Payments Corporation of India (NPCI). In 2023-24, UPI processed over 13,100 crore transactions worth more than ₹200 lakh crore — making it one of the largest digital payment networks in the world.

For a network this large to function, fraud detection cannot be done manually. NPCI and the banks connected to UPI use machine learning models that analyse every transaction in real time. These models examine factors such as:

- The transaction amount compared to the user’s history

- The time of day and location of the transaction

- Whether the recipient account has been flagged before

- How quickly a series of transactions is being made

Based on this analysis, the model assigns a risk score to the transaction. If the score crosses a threshold, the transaction is automatically blocked or flagged for review — in milliseconds, before the money moves.

This is AI making a consequential decision about your money, in real time, typically with no human in the loop at the moment the decision is made.
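To make the idea of a risk score and a blocking threshold concrete, here is a minimal sketch in Python. It is not how NPCI or any bank actually scores transactions: real systems use trained machine learning models, and the rules, point values, field names, and the 0.7 threshold below are all assumptions invented for illustration. It only shows the shape of the decision: signals go in, a score comes out, and a threshold turns the score into an action.

```python
from dataclasses import dataclass

# Hypothetical threshold on a 0-to-1 scale, chosen only for this example.
BLOCK_THRESHOLD = 0.7


@dataclass
class Transaction:
    amount: float            # rupees
    usual_amount: float      # the user's typical transaction amount
    hour: int                # 0-23, local time
    recipient_flagged: bool  # has the recipient account been flagged before?
    txns_last_10_min: int    # how many transactions in the last 10 minutes


def risk_score(txn: Transaction) -> float:
    """Add up hypothetical risk points for each signal, scaled to 0-1."""
    points = 0
    if txn.amount > 5 * txn.usual_amount:  # amount compared to history
        points += 40
    if txn.hour < 5:                       # unusual time of day
        points += 10
    if txn.recipient_flagged:              # recipient flagged before
        points += 30
    if txn.txns_last_10_min > 5:           # rapid series of transactions
        points += 20
    return points / 100


def decide(txn: Transaction) -> str:
    """Block the transaction if its score reaches the threshold."""
    return "BLOCK" if risk_score(txn) >= BLOCK_THRESHOLD else "ALLOW"


routine = Transaction(250, 300, 11, False, 1)
unusual = Transaction(85000, 2000, 2, True, 1)
print(risk_score(routine), decide(routine))
print(risk_score(unusual), decide(unusual))
```

Notice that the second transaction is blocked purely because of how it looks, not because anyone has confirmed it is fraud. That gap between "looks suspicious" and "is fraud" is where the ethical questions in the rest of this case come from.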

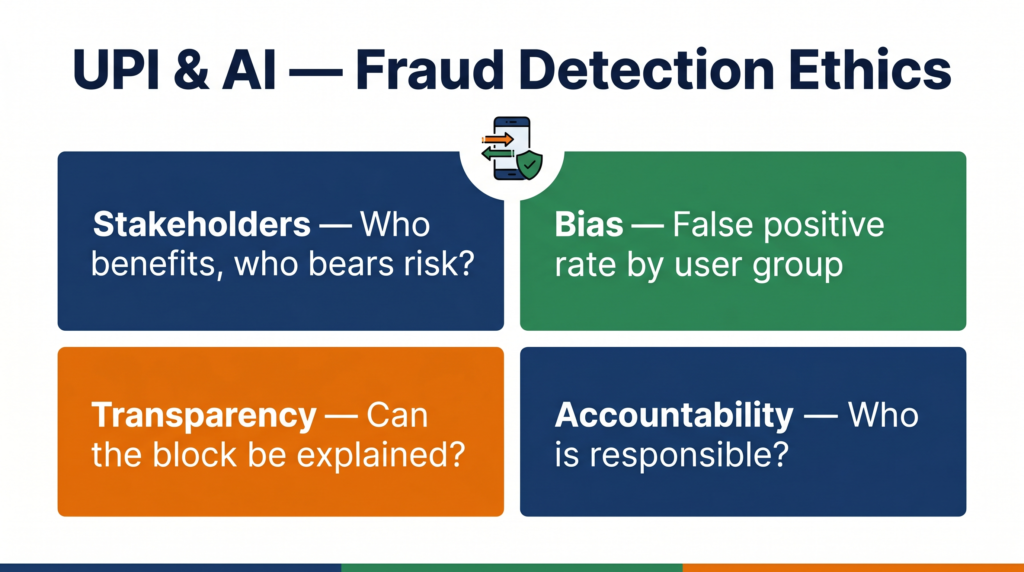

The CBSE 4-Frame Ethics Analysis

Frame 1 — Stakeholders: Who Is Affected?

Who benefits from UPI fraud detection AI?

- The banking system — reduced losses from fraud

- Merchants — fewer chargebacks and disputed transactions

- Most UPI users — their accounts are protected from fraudulent transactions

Who bears the risks?

- Legitimate users whose transactions are incorrectly blocked (false positives)

- Small businesses and street vendors who depend on UPI for daily income — a blocked transaction at a critical moment has real economic consequences

- Users in rural areas or those making unusual but legitimate transactions (e.g., a large first-time payment to a new recipient) who are more likely to trigger risk flags

An important stakeholder group: the unbanked and newly banked. India’s financial inclusion drive brought hundreds of millions of first-time bank account holders onto UPI. Their transaction histories are thin — which means the AI model has less data to distinguish their normal behaviour from suspicious activity. They are systematically underserved by a model trained primarily on established account behaviour.

Frame 2 — Bias: Does the System Treat Everyone Equally?

Bias in UPI fraud detection appears in a specific and measurable form: the false positive problem.

A false positive in fraud detection means the AI flagged a legitimate transaction as fraudulent. The model was wrong — but the transaction was still blocked.

False positive rates in payment fraud systems are not distributed equally across users. Research on payment fraud AI systems globally, together with the challenges NPCI faces in scaling the system, suggests that:

- New accounts with limited transaction history generate more false positives

- Transactions that break a user’s established pattern — even for innocent reasons — trigger alerts disproportionately

- Users who make many small transactions (daily wage earners, vegetable vendors, autorickshaw drivers) have different transaction patterns than the salaried users the model was predominantly trained on

This is a form of proxy discrimination: the model does not intend to treat informal sector workers differently, but their transaction profile is statistically different from the majority of training data, so they experience more friction.
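One way to check for this kind of bias is to measure the false positive rate separately for each group of users. The sketch below does that on a small made-up dataset. The segment names and all the numbers are invented for illustration and are not real UPI data. The false positive rate for a segment is the share of its legitimate transactions that were blocked.

```python
from collections import defaultdict

# Made-up records: (segment, was_blocked, was_actually_fraud)
records = (
    [("salaried", False, False)] * 9
    + [("salaried", True, False)] * 1
    + [("salaried", True, True)] * 1
    + [("new_account", False, False)] * 7
    + [("new_account", True, False)] * 3
    + [("new_account", True, True)] * 1
)


def false_positive_rate_by_segment(records):
    """Return {segment: blocked legitimate / all legitimate transactions}."""
    blocked_legit = defaultdict(int)
    total_legit = defaultdict(int)
    for segment, was_blocked, was_fraud in records:
        if was_fraud:
            continue  # the false positive rate only looks at legitimate transactions
        total_legit[segment] += 1
        if was_blocked:
            blocked_legit[segment] += 1
    return {seg: blocked_legit[seg] / total_legit[seg] for seg in total_legit}


rates = false_positive_rate_by_segment(records)
for segment, rate in rates.items():
    print(f"{segment}: {rate:.0%} of legitimate transactions blocked")
```

In this invented data, both segments have the same amount of real fraud, yet new accounts see three times as many wrongful blocks. A single overall accuracy figure would hide that difference, which is why the breakdown by segment matters.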

The ethical question is not whether NPCI intended this bias. It did not. The question is whether NPCI is monitoring and measuring it — and what it is doing to correct it.

Frame 3 — Transparency: Can You Understand the Decision?

When your UPI transaction is blocked by the fraud detection system, what do you see?

Typically: a generic error message. “Transaction failed.” Sometimes: “Transaction declined for security reasons.” Rarely: a specific explanation of what triggered the block.

The underlying AI model’s reasoning is not visible to you, the bank’s customer service agent, or the merchant who did not receive your payment. This is a transparency failure with several consequences:

- You cannot identify whether the block was due to your behaviour or a system error

- The merchant cannot distinguish a fraud block from a technical failure

- Customer service cannot help you resolve the issue without escalating to the fraud team

- You cannot change your behaviour to avoid future false positives because you do not know what triggered this one

There is a deeper transparency issue here too. NPCI and the banks do not publicly publish their false positive rates, their model accuracy metrics, or the criteria that trigger an automatic block. This means there is no external accountability for whether the system is working fairly.

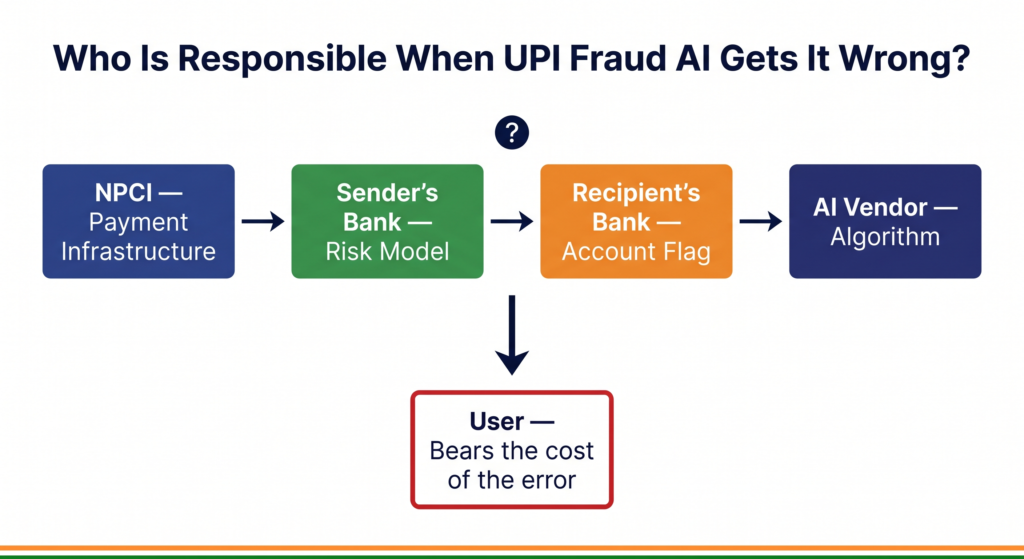

Frame 4 — Accountability: When Something Goes Wrong, Who Is Responsible?

Consider this scenario: a small business owner is trying to receive payment from a bulk buyer for ₹85,000 worth of goods. The UPI transaction is automatically blocked by the fraud AI. The buyer cannot complete the payment. The goods are not delivered. The business owner loses the contract.

Who is responsible?

- NPCI designed the payment infrastructure and the fraud detection framework

- The sender’s bank implemented the risk scoring model and set the blocking threshold

- The recipient’s bank may have flagged the recipient account independently

- The AI model vendor (if the bank uses a third-party fraud detection product) built the underlying system

Each party can point to another. The business owner has no single point of contact to dispute the decision, no right to know the reason it was blocked, and no timeline for resolution.

The Reserve Bank of India (RBI) issued guidelines in 2023 requiring banks to have grievance redressal mechanisms for declined digital transactions. However, the guidelines do not require banks to explain why a fraud AI blocked a transaction, only to resolve the complaint within a timeline. The accountability mechanism exists, but it does not close the transparency gap.

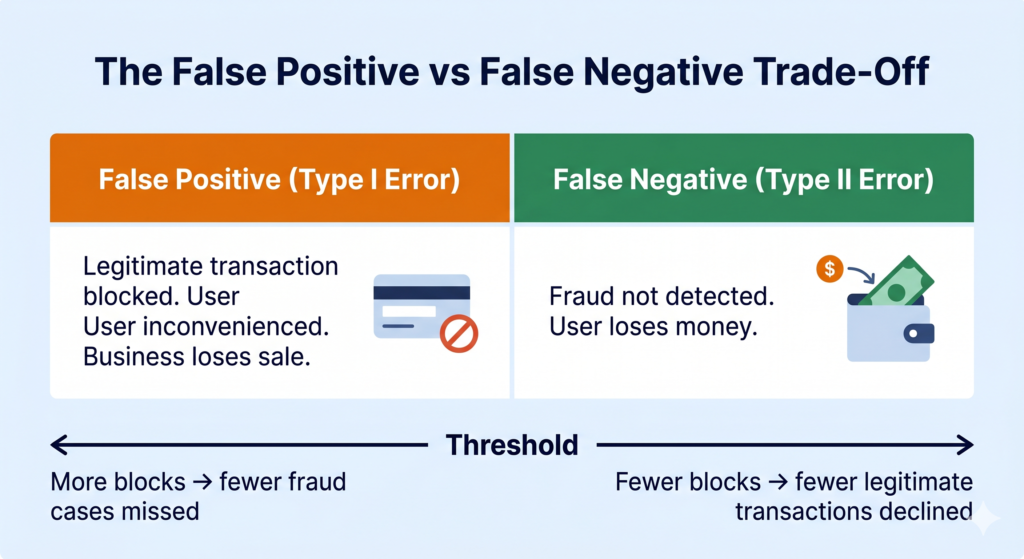

The False Positive vs False Negative Trade-Off

This is a concept your CBSE AI syllabus covers directly — and the UPI case makes it concrete.

In fraud detection, the model has to balance two types of errors:

False positive (Type I error): A legitimate transaction is flagged as fraud. The user is inconvenienced. The business loses a sale. Trust in the system is damaged.

False negative (Type II error): A fraudulent transaction is not flagged. Money is stolen. The user suffers real financial loss.

A model tuned to minimise false negatives (catch all fraud) will produce more false positives (block more legitimate transactions). A model tuned to minimise false positives will let more fraud through.
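The trade-off can be seen by counting both kinds of error at different thresholds. The sketch below uses ten made-up transactions, each with an invented risk score and a label saying whether it was really fraud. None of these numbers come from a real system.

```python
# Made-up (risk_score, is_fraud) pairs for ten transactions.
scored = [
    (0.05, False), (0.10, False), (0.20, False), (0.35, False),
    (0.40, False), (0.55, False), (0.45, True), (0.60, True),
    (0.80, True), (0.95, True),
]


def count_errors(scored, threshold):
    """Block every transaction scoring at or above the threshold, then count errors."""
    false_positives = sum(
        1 for score, is_fraud in scored if score >= threshold and not is_fraud
    )
    false_negatives = sum(
        1 for score, is_fraud in scored if score < threshold and is_fraud
    )
    return false_positives, false_negatives


for threshold in (0.3, 0.5, 0.7):
    fp, fn = count_errors(scored, threshold)
    print(f"threshold {threshold}: {fp} false positives, {fn} false negatives")
```

With this data, a threshold of 0.3 gives 3 false positives and 0 false negatives, 0.5 gives 1 of each, and 0.7 gives 0 false positives and 2 false negatives. No threshold removes both errors at once, so someone has to choose which row to accept.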

This is not only a technical decision; it is also an ethical one. When NPCI or a bank decides where to set the threshold, they are deciding whose losses they are willing to accept. If the threshold is set conservatively (block more), the cost is paid by legitimate users who are incorrectly blocked. If the threshold is set loosely (block less), the cost is paid by fraud victims. The people making the threshold decision are not the same people who bear the cost.

For a 2-mark or 4-mark CBSE question on this case, this trade-off — and the question of who bears the cost of each error — is the core ethical insight the examiner is looking for.

What Could Have Been Done Differently?

Publish false positive rates by user segment. NPCI should publicly report what percentage of blocked transactions turn out to be legitimate — broken down by account age, transaction size, and geography. If this metric is not measured and published, there is no way to know whether the system is working fairly.

Build explainability into the block notification. When a transaction is blocked, the user and their bank should receive a reason code that is human-readable. “Transaction blocked: unusual amount for this account. Please retry in 24 hours or contact your bank” is more useful than “Transaction failed.” Even a general reason allows the user to take action and builds trust.
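A reason code system like this can be very simple. The sketch below maps a short code to a human-readable message and falls back to a generic message when the code is unknown. The code names and most of the messages are hypothetical and invented for this example. They are not an NPCI or bank standard.

```python
# Hypothetical reason codes mapped to user-facing messages.
REASON_MESSAGES = {
    "UNUSUAL_AMOUNT": (
        "Transaction blocked: unusual amount for this account. "
        "Please retry in 24 hours or contact your bank."
    ),
    "NEW_RECIPIENT": (
        "Transaction blocked: first payment to a new recipient. "
        "Please confirm the recipient with your bank."
    ),
    "RAPID_TRANSACTIONS": (
        "Transaction blocked: many payments in a short time. "
        "Please wait and try again."
    ),
}

GENERIC_MESSAGE = "Transaction declined for security reasons. Please contact your bank."


def block_notification(reason_code: str) -> str:
    """Return a readable explanation, or a generic one if the code is unknown."""
    return REASON_MESSAGES.get(reason_code, GENERIC_MESSAGE)


print(block_notification("UNUSUAL_AMOUNT"))
print(block_notification("SOMETHING_ELSE"))
```

The messages are deliberately general. They tell the user what kind of behaviour triggered the block without revealing exact limits that a fraudster could exploit.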

Create a fast-track appeal process for high-value business transactions. A ₹500 false positive is an inconvenience. An ₹85,000 false positive for a business is a crisis. RBI’s grievance guidelines should differentiate response timelines based on transaction value, and banks should have a direct escalation path for business accounts.

Quick Revision Box

| Term | Meaning |
|---|---|
| False positive | AI flags a legitimate event as fraudulent (Type I error) |
| False negative | AI misses an actual fraud event (Type II error) |
| Risk score | A number assigned by the AI model indicating how suspicious a transaction appears |
| Proxy discrimination | Unequal treatment resulting from a variable that correlates with a protected group |
| Explainability | The ability of an AI system to provide a reason for its decision |

Practice Questions

2-mark question: In UPI fraud detection, explain the difference between a false positive and a false negative. Which error is more harmful to a legitimate user?

Model answer: A false positive occurs when the AI incorrectly blocks a legitimate transaction, treating it as fraud. A false negative occurs when the AI fails to detect an actual fraudulent transaction. For a legitimate user, a false positive is more directly harmful — it prevents them from completing a valid payment and may cause financial or reputational damage, especially for businesses.

MCQ: A UPI fraud detection AI is set to a very conservative threshold, blocking any transaction that scores above 0.3 on a 0-to-1 risk scale. This is most likely to result in:

(a) Higher false negative rate and lower false positive rate (b) Lower false negative rate and higher false positive rate (c) Equal false positive and false negative rates (d) No change in either error rate

Answer: (b) — A conservative (low) threshold means more transactions are blocked. This catches more fraud (reduces false negatives) but also incorrectly blocks more legitimate transactions (increases false positives).

Frequently Asked Questions

Q1. If the fraud AI makes a mistake and blocks my payment, who should I complain to?

Under RBI’s 2023 digital payment grievance guidelines, your first point of contact is your own bank, meaning the bank where the transaction originated. Banks are required to acknowledge complaints within 24 hours and resolve them within a defined timeline. If the bank does not resolve your complaint satisfactorily, you can escalate to the RBI Ombudsman through the RBI’s Complaint Management System (CMS) portal, under the Reserve Bank Integrated Ombudsman Scheme, 2021. However, and this is the ethical gap, you are unlikely to receive an explanation of why the AI blocked your transaction. You may receive a resolution (the transaction unblocked) but not transparency.

Q2. Why don’t banks simply make their fraud detection models public so users can understand them?

Banks argue that publishing the criteria used by fraud detection models would help fraudsters reverse-engineer the system and find ways to avoid detection. This is a legitimate concern: it is the transparency-security trade-off. However, ethical AI design offers a middle path: audits by independent third parties, summary statistics published by regulators, and user-facing explanations that are informative without being exploitable. The choice is not between full transparency and full opacity. It is about designing transparency mechanisms that serve users without compromising the system’s effectiveness.

Q3. How does UPI fraud detection connect to the AI concepts I am studying in CBSE?

Directly. The fraud detection system is a classification model: it classifies each transaction as either “legitimate” or “suspicious.” False positives and false negatives feed directly into the precision and recall metrics your Class 10 and Class 11 syllabus covers: precision falls as false positives rise, and recall falls as false negatives rise. The threshold tuning decision is a real-world example of how changing the classification boundary changes both metrics simultaneously. When you study the confusion matrix in your CBSE AI course, the UPI fraud case is the clearest practical example of why those four numbers (true positive, true negative, false positive, false negative) matter beyond the exam.
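To see how the four confusion matrix numbers turn into the metrics, here is a short sketch that treats "fraud" as the positive class. The counts are invented for illustration: out of 1,000 transactions, 10 are really fraud and the model blocks 20.

```python
def metrics(tp: int, fp: int, tn: int, fn: int) -> dict:
    """Compute standard metrics from confusion matrix counts (fraud = positive)."""
    return {
        # Of everything the model blocked, how much was really fraud?
        "precision": tp / (tp + fp),
        # Of all real fraud, how much did the model catch?
        "recall": tp / (tp + fn),
        # Of all legitimate transactions, how many were wrongly blocked?
        "false_positive_rate": fp / (fp + tn),
        # Of all real fraud, how much slipped through?
        "false_negative_rate": fn / (fn + tp),
    }


# Invented counts: 8 frauds caught, 12 legitimate blocked,
# 978 legitimate allowed, 2 frauds missed.
m = metrics(tp=8, fp=12, tn=978, fn=2)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

In this example the false positive rate is only about 1.2%, which sounds small, but precision is 0.4, meaning most blocked transactions were actually legitimate. This happens because fraud is rare, and it is why a single number is not enough to judge whether a fraud detection system is fair.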