Most students assume AI is neutral — it just processes data. The MARVEL surveillance system in Maharashtra is one of the most important Indian case studies that proves otherwise.

What you’ll learn in this post:

- What predictive policing is and how AI systems are used in law enforcement

- A CBSE 4-frame ethics analysis of the MARVEL system (Stakeholders / Bias / Transparency / Accountability)

- How to answer high-level case-based ethics questions for Class 11 and Class 12 boards

What Is Predictive Policing?

Predictive policing uses AI and machine learning to forecast where crimes are likely to occur, or to identify individuals considered “at risk” of committing crimes — before any crime has taken place.

The AI model takes historical crime data, location data, time patterns, and sometimes demographic data as inputs. It outputs a risk score or a “hotspot map” that police use to allocate patrol resources or flag individuals for monitoring.
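To make the idea of a "hotspot map" concrete, here is a minimal sketch in Python. It is not MARVEL's algorithm, which has not been published. The grid cells, time windows, and incident records are all invented for illustration, and the "risk score" is simply each location and time window's share of past recorded incidents.

```python
from collections import Counter

# Hypothetical past incident records: (grid_cell, time_window).
# These values are invented for illustration; they are not real crime data.
past_incidents = [
    ("cell_A", "night"), ("cell_A", "night"), ("cell_A", "night"),
    ("cell_B", "day"), ("cell_B", "night"),
    ("cell_C", "day"),
]

def hotspot_scores(incidents):
    """Score each (cell, time window) by its share of all recorded incidents."""
    counts = Counter(incidents)
    total = sum(counts.values())
    return {key: count / total for key, count in counts.items()}

def top_hotspots(incidents, k=1):
    """Return the k highest-scoring (cell, time window) pairs."""
    scores = hotspot_scores(incidents)
    return sorted(scores, key=scores.get, reverse=True)[:k]

scores = hotspot_scores(past_incidents)
print(scores)
print(top_hotspots(past_incidents))
```

Notice that the score depends only on what was recorded in the past. Anything the police did not record never reaches the model.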

Globally, systems like PredPol (USA) and ShotSpotter have faced intense scrutiny. In India, the most documented example is the MARVEL system (Maharashtra Research and Vigilance for Enhanced Law Enforcement), a video analytics and facial recognition network piloted in Maharashtra.

The MARVEL System: What It Does

MARVEL was developed with support from the Maharashtra Remote Sensing Application Centre (MRSAC) and deployed as part of Maharashtra’s Smart City and Safe City initiatives. It integrates:

- CCTV camera networks across urban areas

- Facial recognition matched against police databases

- Vehicle recognition (number plate scanning)

- Crowd behaviour analytics — flagging unusual crowd movements or gatherings

- Predictive hotspot mapping — identifying locations and time windows with elevated crime probability based on historical data

The stated goal is crime prevention and faster law enforcement response. But the way the system works raises each of the four ethical questions your CBSE syllabus requires you to examine.

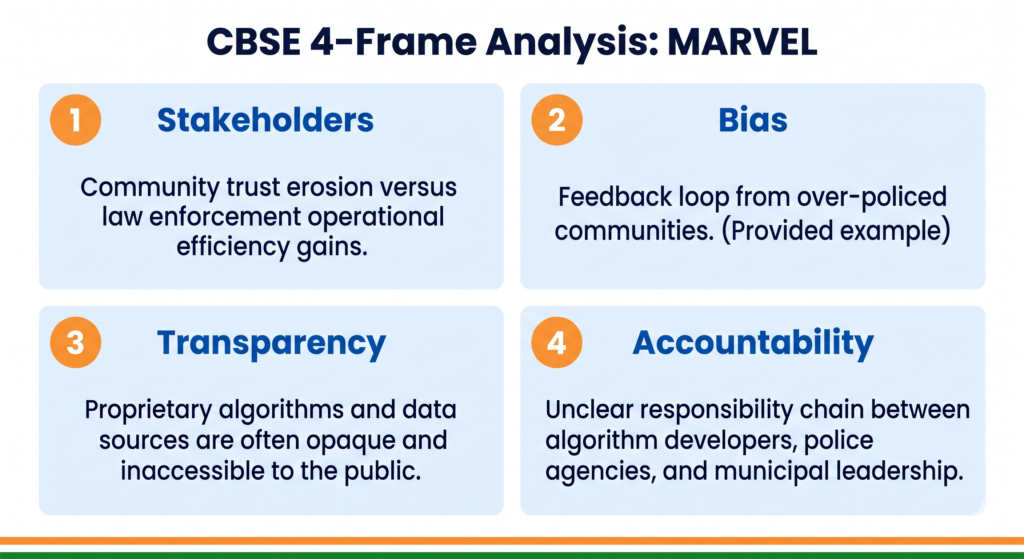

The CBSE 4-Frame Ethics Analysis

Frame 1 — Stakeholders: Who Is Affected?

| Stakeholder | How they are affected |
|---|---|
| General public in surveilled areas | Continuous monitoring without individual consent; chilling effect on public behaviour |
| Marginalised communities (Dalits, minorities, migrant workers) | More likely to be flagged, because historical crime data reflects past policing patterns that were themselves biased |
| Individuals wrongly flagged | Face harassment, detention, or investigation based on algorithmic error |
| Police officers | Receive AI-generated alerts and must decide whether to act; their discretion is increasingly shaped by algorithmic nudges |
| Victims of crime | May benefit from faster police response if the system works accurately |
| Civil liberties organisations | Institutional stakeholders raising accountability questions |
| Technology vendors | Private companies supplying surveillance hardware and AI software |

The key stakeholder tension: The people most monitored — those in high-crime-rate neighbourhoods — are often the same communities that were historically over-policed. MARVEL does not start with a blank slate. It inherits the biases of decades of policing data.

Frame 2 — Bias: Where Does It Enter?

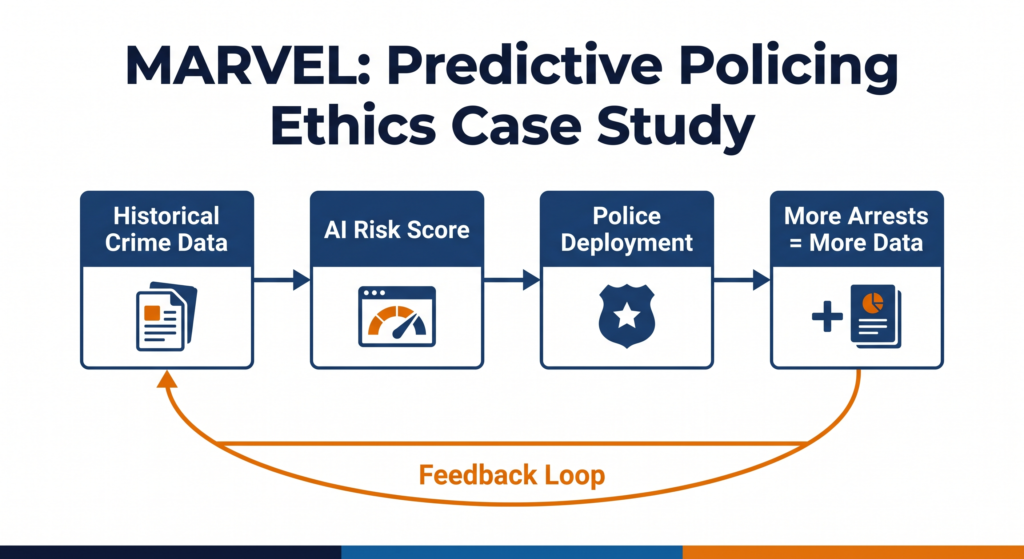

Historical data bias — the feedback loop problem

MARVEL’s predictive models are trained on past crime records. But crime records do not reflect where crime actually happens — they reflect where police looked for crime. Communities that were historically over-policed have more arrests in the data. The AI learns: “this community = higher crime risk.” It then sends more police there. More police presence means more arrests. More arrests reinforce the prediction. The bias compounds across each cycle.

This is called feedback loop bias. It is one of the most dangerous forms of algorithmic bias because it looks like evidence but is actually self-fulfilling.
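The loop can be simulated in a few lines of Python. This is a simplified sketch under stated assumptions, not a model of MARVEL itself: two hypothetical areas have exactly the same true crime level, every patrol records the same number of incidents wherever it is sent, and the area with more past records is treated as the hotspot and receives 7 of the 10 available patrols. All names and numbers are invented.

```python
def simulate_feedback_loop(initial_records, rounds=5, total_patrols=10,
                           hotspot_patrols=7, records_per_patrol=10):
    """Simulate two areas with identical true crime but biased starting records.

    Assumes exactly two areas. Returns area_A's share of all records
    before the first round and after each round.
    """
    records = dict(initial_records)
    shares = []
    for _ in range(rounds):
        shares.append(records["area_A"] / sum(records.values()))
        # The area with more past records is labelled the hotspot.
        hotspot = max(records, key=records.get)
        for area in records:
            patrols = hotspot_patrols if area == hotspot else total_patrols - hotspot_patrols
            # Recorded crime depends on where police looked, not on true crime.
            records[area] += patrols * records_per_patrol
    shares.append(records["area_A"] / sum(records.values()))
    return shares

# area_A starts with more records only because it was policed more in the past.
shares = simulate_feedback_loop({"area_A": 60, "area_B": 40})
for round_number, share in enumerate(shares):
    print(round_number, round(share, 3))
```

In this sketch, area_A's share of recorded crime climbs from 0.60 to about 0.68 in five rounds, even though both areas have the same amount of actual crime. The data looks like growing evidence against area_A, but it was produced by the patrol allocation itself.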

Facial recognition bias

Multiple global studies, including the MIT Media Lab's Gender Shades project (2018) and a 2019 NIST evaluation of demographic effects in face recognition, have found that facial analysis and recognition models make significantly more errors on darker-skinned individuals and women. India-specific audits of facial recognition systems used in policing have raised similar concerns. For MARVEL, this means the communities most likely to be wrongly identified are those with the least institutional power to challenge the error.

Label bias in training data

Historical crime data labels individuals as “suspects” or “criminals” based on past arrests — many of which may have been wrongful, later acquitted, or based on now-repealed laws. MARVEL’s models trained on this data inherit every wrongful label embedded in it.

Exam definition to remember: Feedback loop bias occurs when an AI system’s outputs influence the data used to train future versions of the system, causing the original bias to compound over time.

Frame 3 — Transparency: Can Citizens Understand the System?

What is not publicly available about MARVEL:

- The specific algorithm used to generate risk scores

- The accuracy rates, especially broken down by demographics

- The threshold at which an alert triggers police action

- Whether flagged individuals are notified that they were monitored

- The process for challenging a wrongful identification

For ordinary citizens in Maharashtra, MARVEL is a system that watches them, scores them, and can trigger police attention — entirely without their knowledge of how the process works.
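To see why the undisclosed alert threshold matters, consider this small Python sketch. The risk scores and outcomes are invented, and the rule "score at or above the threshold triggers an alert" is an assumption made for illustration, since MARVEL's actual rule is not public.

```python
# Hypothetical people scored by a system: (risk_score, was_actually_involved).
scored_people = [
    (0.95, True), (0.80, False), (0.75, True),
    (0.60, False), (0.55, False), (0.30, False),
]

def alerts_at(threshold, scored):
    """Return (total alerts, false alerts) when score >= threshold triggers an alert."""
    flagged = [involved for score, involved in scored if score >= threshold]
    false_alerts = sum(1 for involved in flagged if not involved)
    return len(flagged), false_alerts

for threshold in (0.9, 0.7, 0.5):
    total, false_alerts = alerts_at(threshold, scored_people)
    print(f"threshold {threshold}: {total} alerts, {false_alerts} false")
```

With this made-up data, lowering the threshold from 0.9 to 0.5 raises the number of innocent people flagged from zero to three. Whoever sets the threshold is making an ethical choice, which is why keeping it secret is a transparency problem.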

The consent gap:

Facial recognition in public spaces collects biometric data from every person who passes a camera, without their consent. India’s Digital Personal Data Protection Act, 2023 (DPDP Act) generally requires consent for personal data processing, but it also allows exemptions for government agencies, so public surveillance systems occupy a legal grey area that has not yet been fully resolved.

Why transparency matters for public trust:

An AI system in law enforcement must be explainable to courts. If a police officer acts on a MARVEL alert that leads to wrongful detention, and the algorithm’s reasoning cannot be explained, the legal accountability chain breaks. This is not just an ethics problem — it is a rule-of-law problem.

Frame 4 — Accountability: Who Is Responsible?

The accountability gap in MARVEL:

When a MARVEL alert leads to wrongful harassment of an innocent person, responsibility is split across:

- The technology vendor (who built the algorithm)

- MRSAC (which developed the system)

- The Maharashtra Police (which acted on the alert)

- The municipal authority (which funded the deployment)

No single body has publicly accepted responsibility for algorithmic errors. No public redress mechanism exists for individuals wrongly flagged.

The constitutional dimension:

The Supreme Court’s Puttaswamy judgment (2017) established privacy as a fundamental right under the Indian Constitution. Continuous biometric surveillance of citizens without consent, without transparency, and without a redress mechanism raises a direct constitutional question: is it a lawful, necessary, and proportionate restriction on that right? Legal scholars and civil society organisations, including the Internet Freedom Foundation, have flagged MARVEL-type systems on exactly these grounds.

What responsible accountability looks like:

- An independent algorithmic audit by a body not connected to the vendor or the police

- A public error-rate report, broken down by location, demographic, and time period

- A formal grievance mechanism for wrongful identification

- Sunset clauses — automatic review periods after which deployment must be reauthorised based on evidence of effectiveness and fairness
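A public error-rate report of the kind described above could be computed along these lines. This is only a sketch: the groups, records, and field layout are hypothetical, and a real audit would need far more data and an independently verified ground truth.

```python
from collections import defaultdict

# Hypothetical audit records: (group, flagged_by_system, is_true_match).
audit_records = [
    ("group_1", True, True),
    ("group_1", True, False),
    ("group_1", False, False),
    ("group_1", False, False),
    ("group_1", False, False),
    ("group_2", True, True),
    ("group_2", True, False),
    ("group_2", True, False),
    ("group_2", False, False),
    ("group_2", False, False),
]

def false_positive_rates(records):
    """For each group, return the share of non-matching people who were wrongly flagged."""
    wrongly_flagged = defaultdict(int)
    non_matches = defaultdict(int)
    for group, flagged, is_true_match in records:
        if not is_true_match:
            non_matches[group] += 1
            if flagged:
                wrongly_flagged[group] += 1
    return {group: wrongly_flagged[group] / non_matches[group] for group in non_matches}

rates = false_positive_rates(audit_records)
print(rates)
```

In this invented data, innocent people in group_2 are wrongly flagged twice as often as those in group_1. A single overall accuracy figure would hide that gap, which is why the breakdown by demographic matters.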

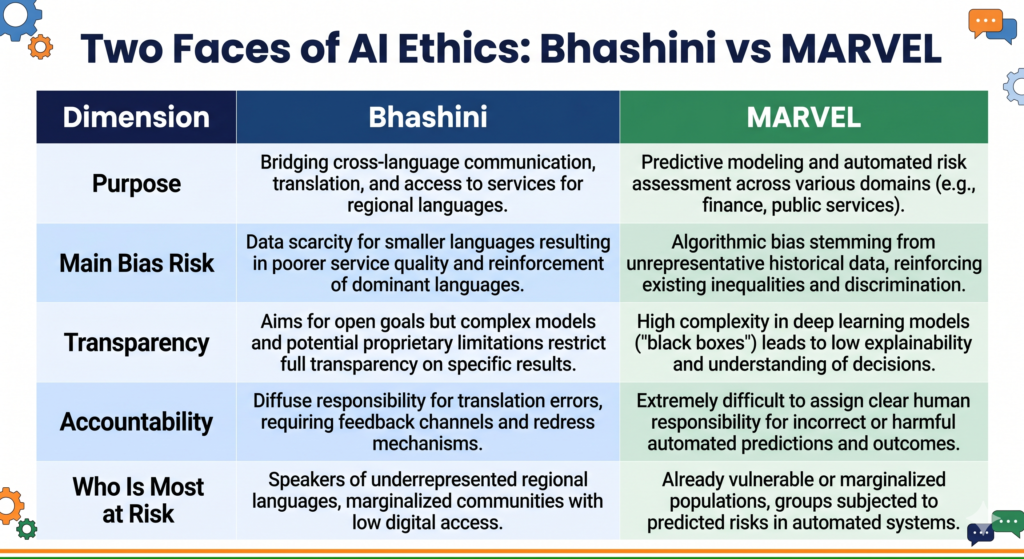

Comparing Bhashini and MARVEL: Two Faces of AI Ethics

Your CBSE syllabus asks you to understand that AI ethics is not about one type of harm. These two case studies show how the same four ethical questions apply very differently across use cases.

| Dimension | Bhashini | MARVEL |
|---|---|---|
| Primary purpose | Inclusion: bring citizens into digital services | Surveillance: monitor and predict criminal behaviour |
| Main bias risk | Data gap for underrepresented languages | Feedback loop from historically biased policing |
| Transparency level | Higher: open-source, published benchmarks | Lower: algorithm and thresholds not public |
| Accountability challenge | Fragmented across MeitY, AI4Bharat, app developers | Fragmented across vendor, MRSAC, police, municipal authority |
| Constitutional angle | Digital inclusion (right to access) | Right to privacy (Puttaswamy judgment) |
| Who is most at risk | Tribal and rural communities | Marginalised communities in surveilled neighbourhoods |

Quick Revision Box

| Term | Meaning |
|---|---|
| Predictive policing | Using AI to forecast crime location or individual risk before a crime occurs |
| Feedback loop bias | Bias that compounds when AI output influences its own future training data |
| Biometric surveillance | Monitoring using physical identifiers such as face, fingerprint, or iris |
| Puttaswamy judgment | 2017 Supreme Court ruling establishing privacy as a fundamental right in India |
| Algorithmic accountability | Clear, public responsibility for decisions made or influenced by an AI system |

Practice Questions

2-mark question: What is feedback loop bias? Give one example from predictive policing.

Model answer: Feedback loop bias occurs when an AI system’s outputs influence the data used to retrain it, causing the original bias to grow stronger over time. In predictive policing, if an AI sends more police to a particular neighbourhood (because historical data flagged it as high-risk), more arrests occur there, reinforcing the prediction — even if the original data reflected biased policing rather than actual higher crime rates.

MCQ: The Puttaswamy judgment (2017) is relevant to MARVEL-type AI systems because:

(A) It established rules for AI procurement by government agencies

(B) It recognised privacy as a fundamental right under the Indian Constitution

(C) It banned the use of facial recognition in public spaces

(D) It required all AI systems to be open-source

Answer: (B)

FAQ

Q1. Is predictive policing used only in Maharashtra?

No. Several Indian states have piloted AI-assisted policing and surveillance systems. Telangana’s Hawk-Eye system and Delhi Police’s use of facial recognition during protests have also been documented and debated. Maharashtra’s MARVEL is one of the most formally named and studied examples, which makes it useful for exam case studies, but the ethical questions apply to all such deployments.

Q2. Does the CBSE syllabus expect me to oppose AI in policing?

No. The CBSE AI ethics framework asks you to analyse, not to take sides. A strong exam answer identifies both the intended benefits (faster police response, resource allocation) and the ethical risks (bias, privacy violation, lack of accountability), and proposes specific responsible design improvements. Balanced analysis with structured evidence always scores higher than one-sided opinion.

Q3. How should I structure my answer if a MARVEL-type case study appears in my board exam?

Use the 4-frame structure: open with a one-line system description, then cover Stakeholders (who is affected and how), Bias (where it enters and why), Transparency (what citizens cannot know), and Accountability (who is responsible and what is missing). Close with one or two responsible AI recommendations. This structure is directly aligned to CBSE’s ethics assessment criteria for Class 11 and Class 12.