Unit 7 carries 7 marks in your Class 12 AI theory paper and is new in the 2025-26 syllabus. No major textbook covers it adequately yet. This post covers every sub-topic CBSE expects you to know, so you are not left guessing what to study or how deep to go.

What You Will Learn

- How Generative AI works and what makes it different from traditional AI

- The difference between Generative and Discriminative models (a direct exam question)

- What an LLM is and why it matters in the context of Unit 7

- The ethical and social concerns CBSE expects you to discuss in answers

- How to use Google Gemini as a hands-on CBSE activity for your practical file

What Is Generative AI?

Most AI systems you have studied so far — spam filters, image classifiers, fraud detectors — take an input and predict a label or output from a fixed set of possibilities. They analyse data that already exists.

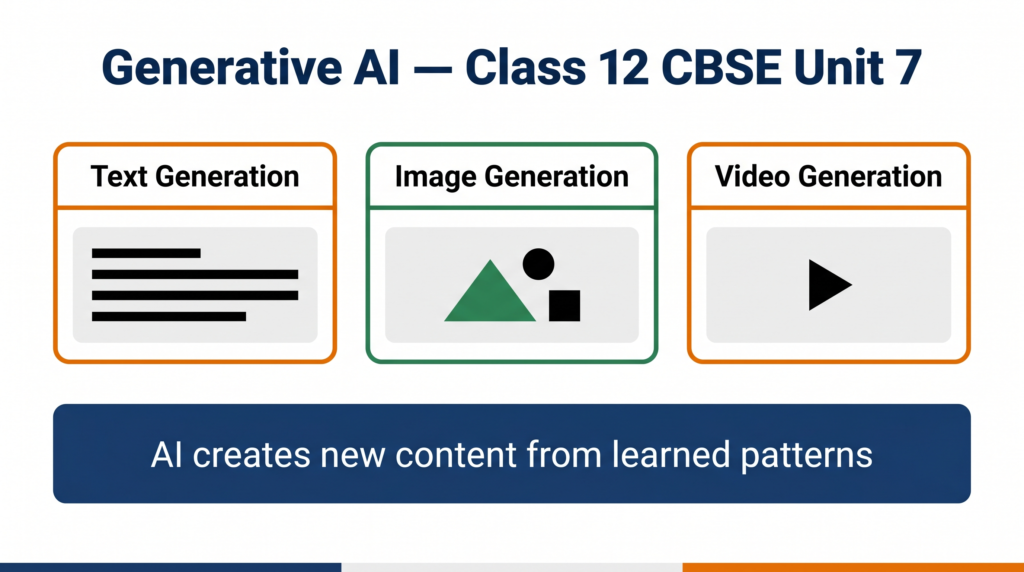

Generative AI does something fundamentally different. It creates new content — text, images, audio, video, or code — that did not exist before. It learns patterns from vast amounts of training data and then uses those patterns to generate original outputs on demand.

When you type a question into Google Gemini and it writes a paragraph in response, that paragraph was not stored anywhere. It was generated — token by token — based on patterns the model learned during training. That process is the core of Generative AI.

India context: India’s IndiaAI Mission, backed by a ₹10,372 crore government investment, is specifically focused on building foundation models — the large-scale Generative AI systems that power tools like Gemini and ChatGPT. When CBSE added Unit 7 to the Class 12 syllabus, it was responding directly to this national priority.

How Generative AI Works

Generative AI systems follow a three-phase process:

Phase 1: Training on large datasets. The model is trained on enormous amounts of data: billions of text documents, millions of images, or years of audio recordings, depending on the type of model. During training, it learns statistical patterns: which words tend to follow other words, which pixel patterns make up a face, which chord progressions appear in music.

Phase 2: Learning a compressed representation. The model does not store a copy of its training data. Instead, it builds a compressed mathematical representation of the patterns it has seen. This representation, stored as billions of numerical parameters called weights, is what allows the model to generalise and generate new content.

Phase 3: Generating new content from a prompt. When you give the model a prompt (a text instruction, an image, or a starting sentence), it uses its learned representation to generate a response that fits the patterns. A text model picks a likely next token (a word or word-fragment), then the next, then the next, building a coherent output step by step.
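
The three phases above can be tried out with a toy model. The sketch below is only an illustration, assuming a tiny made-up corpus and whole words as tokens: it counts which word follows which (training), keeps only those counts (the learned representation), and then picks one next word at a time from a prompt word (generation). Real Generative AI models use neural networks with billions of weights, not a count table, and the function names here are our own.

```python
import random
from collections import defaultdict

def train_bigram_model(text):
    """Phases 1-2: count which word follows which word in the training text."""
    words = text.lower().split()
    follow_counts = defaultdict(lambda: defaultdict(int))
    for current_word, next_word in zip(words, words[1:]):
        follow_counts[current_word][next_word] += 1
    return follow_counts

def generate(model, prompt_word, max_words=8, seed=0):
    """Phase 3: start from a prompt word and pick one next word at a time."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same output
    output = [prompt_word]
    for _ in range(max_words - 1):
        candidates = model.get(output[-1])
        if not candidates:  # no known continuation, so stop early
            break
        next_words = list(candidates.keys())
        weights = list(candidates.values())
        # Words seen more often after the current word are more likely to be picked.
        output.append(rng.choices(next_words, weights=weights, k=1)[0])
    return " ".join(output)

# Made-up training text, for illustration only.
corpus = (
    "the model learns patterns from data . "
    "the model generates text from patterns . "
    "the student writes a prompt ."
)
model = train_bigram_model(corpus)
sentence = generate(model, "the")
print(sentence)
```

The printed sentence may not appear anywhere in the corpus as a whole, yet every pair of neighbouring words in it was seen during training. That is the same idea, at a much smaller scale, as a text model building its output step by step.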

Generative AI vs Discriminative AI

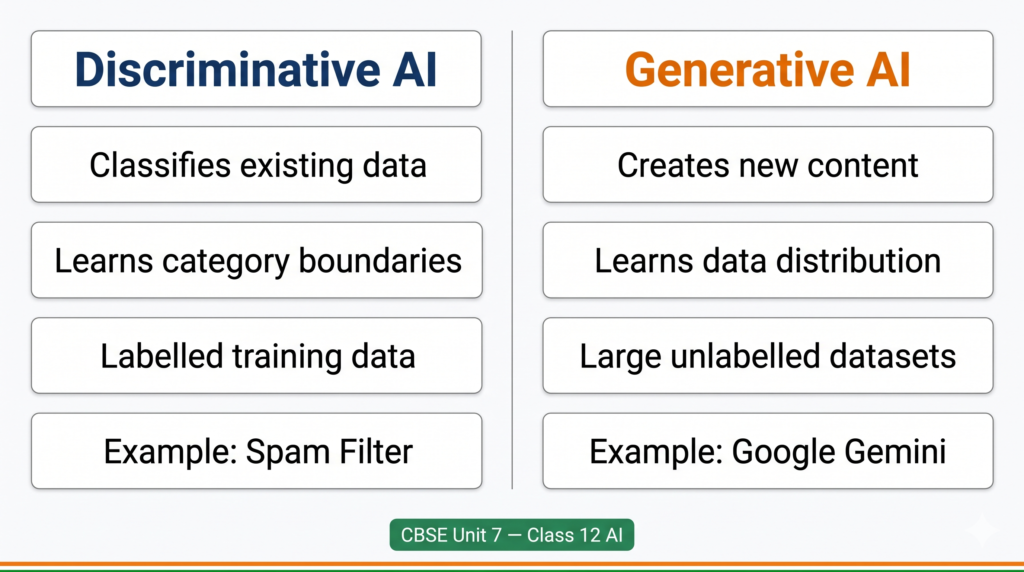

This comparison is a direct CBSE exam question. Know it precisely.

| Aspect | Generative AI | Discriminative AI |
|---|---|---|
| Core task | Creates new data (text, images, audio) | Classifies or labels existing data |
| What it learns | The underlying distribution of the data | The boundary between categories |
| Output | New content: a poem, an image, a paragraph | A prediction: spam/not spam, cat/dog |
| Training data | Massive unlabelled datasets | Labelled datasets |
| Example | Google Gemini writing a summary | A spam filter categorising your email |
| CBSE example | Using Canva AI to generate a poster | A confusion matrix evaluating a classifier |

How to remember this for the exam: Discriminative models discriminate, meaning they draw a line between categories. Generative models generate, meaning they produce something new. A camera app that labels photos as “blur” or “clear” is discriminative. A tool that creates a new photo from a text description is generative.
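
If you know some Python, the blur/clear idea can be shown in a few lines. This is a deliberately tiny sketch, assuming made-up sharpness scores and a single number per photo. The discriminative part learns only a boundary between the two labels. The generative part learns how the “clear” scores are distributed and then samples new scores from that distribution. Real models of either kind are far more complex than a midpoint and a bell curve.

```python
import random
import statistics

# Hypothetical sharpness scores (0-100) for labelled photos, made up for illustration.
blur_scores = [12, 18, 25, 30, 22]
clear_scores = [70, 82, 75, 90, 68]

# Discriminative view: learn only a boundary between the two categories.
boundary = (max(blur_scores) + min(clear_scores)) / 2

def classify(score):
    """Label an existing score by checking which side of the boundary it falls on."""
    return "clear" if score > boundary else "blur"

# Generative view: learn how the "clear" category is distributed,
# then sample brand-new values from that learned distribution.
clear_mean = statistics.mean(clear_scores)
clear_std = statistics.stdev(clear_scores)

def generate_clear_score(rng):
    """Create a new score that resembles the 'clear' training scores."""
    return rng.gauss(clear_mean, clear_std)

rng = random.Random(42)  # fixed seed so repeated runs give the same samples
new_scores = [round(generate_clear_score(rng), 1) for _ in range(3)]

print(classify(40))  # a label for a data point that already exists
print(new_scores)    # new data points produced by the model
```

Notice what each part outputs: `classify` returns one of two fixed labels, while `generate_clear_score` returns a value that did not exist before.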

Large Language Models (LLMs)

The CBSE syllabus explicitly names LLMs as a sub-unit of Unit 7. Here is what you need to know.

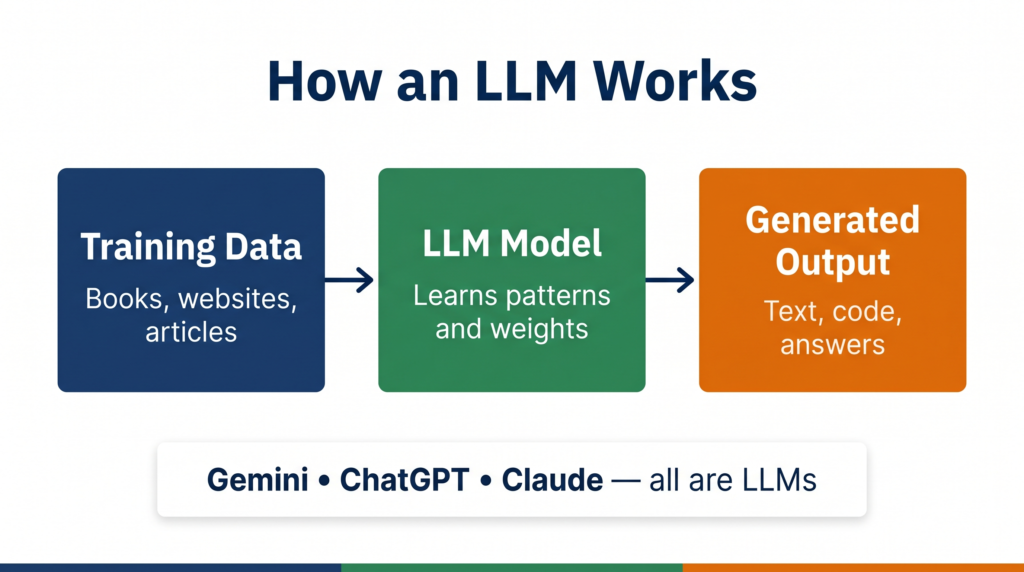

A Large Language Model is a type of Generative AI model trained specifically on text data (books, websites, articles, code). Today’s models have billions of parameters, and the largest have hundreds of billions. The word “large” refers to both the size of the training data and the number of parameters in the model.

Key characteristics of LLMs:

- They predict the next token (word or word-fragment) in a sequence, one step at a time

- They are trained using self-supervised learning — no human labels required, the model learns from the structure of language itself

- They can perform many different tasks — writing, summarising, translating, answering questions — without being explicitly trained for each one

- They are the technology behind ChatGPT (OpenAI), Gemini (Google), and Claude (Anthropic)
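
To see why self-supervised learning needs no human labels, here is a minimal sketch of how next-token training examples can be cut out of plain text. It assumes whole words as tokens and a context of three words, both simplifications chosen for this example. Real LLMs use sub-word tokens and much longer contexts.

```python
def make_training_pairs(text, context_size=3):
    """Turn raw text into (context, next_token) pairs with no human labels."""
    tokens = text.split()  # simplification: real LLMs split text into sub-word tokens
    pairs = []
    for i in range(context_size, len(tokens)):
        context = tokens[i - context_size:i]  # the words the model gets to see
        target = tokens[i]                    # the word it must learn to predict
        pairs.append((context, target))
    return pairs

pairs = make_training_pairs("generative ai creates new content from learned patterns")
for context, target in pairs:
    print(context, "->", target)
```

Every “answer” the model is trained on is simply the next word of the text itself, so the structure of language supplies the labels.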

Why LLMs matter for your exam: The CBSE practical activity specifically asks you to “use Google Gemini to craft prompts and generate text outputs.” Gemini is an LLM. Understanding what an LLM is helps you explain why your practical activity works the way it does — which is the kind of depth examiners reward in viva.

Applications of Generative AI

CBSE expects you to know where Generative AI is being used. These are the categories with India-relevant examples:

Text generation: Google Gemini, ChatGPT — used for writing assistance, customer support automation, content creation. Bhashini, India’s national language AI platform, uses generative language models to translate content across 22 Indian languages.

Image generation: Tools like Canva AI and Adobe Firefly generate images from text prompts. The CBSE syllabus specifically names Canva as a hands-on activity for this unit.

Video generation: Animaker’s AI video tool — also named directly in your CBSE syllabus — allows you to generate animated explainer videos from text scripts. This is being used in EdTech platforms across India to create regional-language educational content at scale.

Code generation: AI models that write, debug, and explain code. This is directly relevant to your Class 12 Python practical.

Audio generation: Text-to-speech and music generation tools that create realistic voice or instrumental output from prompts.

Future of Generative AI

CBSE includes “Future of Generative AI” as a named sub-unit, so you should be able to write 2-3 sentences on this in an exam answer.

The direction Generative AI is moving in:

Multimodal models — Models that work across text, image, audio, and video simultaneously. Gemini 1.5 is already multimodal. This means future AI systems will understand a question asked in voice, refer to an image you shared, and reply in text — all in one interaction.

Personalisation at scale — Generative AI systems will adapt their outputs to individual users over time, creating personalised learning, healthcare, and creative tools.

India-specific development — The IndiaAI Mission is funding the development of foundation models trained on Indian languages and datasets — moving away from dependence on US-built systems. This will make Generative AI more accessible and relevant for Indian users in regional languages.

Ethical and Social Implications of Generative AI

This sub-unit carries real exam weight. CBSE expects you to discuss both the concerns and the context.

Misinformation and deepfakes: Generative AI can create realistic fake images, videos, and audio of real people — called deepfakes. This creates serious risks for political manipulation, fraud, and harassment. In India, deepfake videos of public figures have already been flagged by the Election Commission as an emerging threat to electoral integrity.

Copyright and ownership: When a Generative AI model creates a painting or writes a song, who owns it? The model was trained on human-created content without always obtaining permission. This is an active legal debate — courts in the US, EU, and India are still working out the answers.

Bias in generated content: LLMs trained on internet data inherit the biases present in that data, including gender bias, caste bias, and regional bias. A hiring tool powered by a biased LLM may generate biased job descriptions or reject candidates unfairly.

Academic dishonesty: Students using AI to write assignments or project reports raises questions about what it means to learn and demonstrate knowledge. CBSE is aware of this tension — which is why your practical file still requires you to document your own process and attend a viva.

Job displacement: Roles in content writing, customer support, data entry, and basic coding are being automated by Generative AI. The World Economic Forum’s Future of Jobs Report 2020 estimated that 85 million jobs globally may be displaced by automation by 2025, but also that 97 million new roles may emerge. India’s response through the IndiaAI Mission and CBSE’s decision to include this unit in your syllabus both acknowledge that this transition is happening now.

Examiner tip for ethics questions: Do not just list concerns. Structure your answer as: concern → example → why it matters. That three-part pattern is what earns full marks on a 4-mark ethics question.

Try It Yourself — CBSE Activity

Your CBSE syllabus specifies these hands-on activities for Unit 7. At least one of these should appear in your practical file.

Activity 1 — Google Gemini Prompting

- Go to gemini.google.com

- Type a prompt asking Gemini to explain a concept from your AI syllabus — for example: “Explain the difference between supervised and unsupervised learning in simple terms for a Class 12 student.”

- Note how the output changes when you make the prompt more specific vs more general.

- Try the same question with a different prompt structure and compare the two outputs.

- Document: your original prompt, the output, your refined prompt, the refined output, and one observation about how prompt quality affects output quality.

Activity 2 — Canva AI

- Sign up at canva.com (free account)

- Use the AI image generation feature to create a visual based on a text description

- Document the prompt you used and the image generated

Activity 3 — Animaker AI Video

- Visit animaker.com and explore the AI video generation feature

- Create a short explainer script on any AI topic and generate a video from it

- Note the AI steps involved: script → voice → animation

For Advanced Learners only (not evaluated in theory or practical exams): Write Python code to initialise the Gemini API and create a basic chatbot using google.generativeai.
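
For advanced learners who want a starting point, the sketch below shows one possible shape for such a chatbot. It is not the official CBSE solution. It assumes you have installed the `google-generativeai` package, that your API key is stored in an environment variable named `GOOGLE_API_KEY`, and that the model name `gemini-1.5-flash` is available to your account. All three are assumptions, so check Google’s current documentation before relying on them. The helper names (`make_gemini_sender`, `run_chat`, `echo_sender`) are our own, and `echo_sender` is an offline stand-in so the chat loop can be tried without a key.

```python
import os

def make_gemini_sender(model_name="gemini-1.5-flash"):
    """Return a function that sends one message to Gemini and returns the reply text.

    Assumes the google-generativeai package is installed and the API key is in
    the GOOGLE_API_KEY environment variable (both are assumptions of this sketch).
    """
    import google.generativeai as genai  # imported here so the rest runs without it

    genai.configure(api_key=os.environ["GOOGLE_API_KEY"])
    chat = genai.GenerativeModel(model_name).start_chat(history=[])
    return lambda message: chat.send_message(message).text

def run_chat(send, messages):
    """Send each message in turn and collect (message, reply) pairs."""
    transcript = []
    for message in messages:
        transcript.append((message, send(message)))
    return transcript

def echo_sender(message):
    """Offline stand-in for Gemini so the loop can be tried without an API key."""
    return f"(offline reply to: {message})"

# Swap echo_sender for make_gemini_sender() once the package and key are set up.
send = echo_sender
for message, reply in run_chat(send, ["What is Generative AI?"]):
    print("You:", message)
    print("Bot:", reply)
```

Keeping the chat loop separate from the Gemini call means you can test your own code first and connect the real model last.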

Quick Revision Box

Before your viva or theory exam, confirm you can answer these without looking:

☑ Define Generative AI in one sentence (it creates new content, not just classifies existing data)

☑ State the difference between Generative and Discriminative models (generative learns data distribution; discriminative learns category boundaries)

☑ Explain what an LLM is and give one example (Large Language Model; example: Google Gemini)

☑ Name three applications of Generative AI with examples (text → Gemini, image → Canva AI, video → Animaker)

☑ State two ethical concerns with a specific example each (deepfakes → election misinformation; bias → hiring tools)

☐ Can you explain all of the above in a 5-minute viva without hesitation?

Practice Questions

2-mark question: What is the difference between Generative AI and Discriminative AI? Give one example of each.

Model answer: Generative AI creates new data such as text, images, or audio by learning patterns from training data. Example: Google Gemini generating a paragraph from a prompt. Discriminative AI classifies existing data by learning boundaries between categories. Example: A spam filter labelling emails as spam or not spam.

Examiner note: Students who only define Generative AI without addressing Discriminative AI score 1/2. Students who give a concrete example for both score 2/2. The example is not optional — it is the second mark.

MCQ: Which of the following is an example of a Large Language Model (LLM)? (a) Orange Data Mining Tool (b) TensorFlow Playground (c) Google Gemini (d) OpenCV

Answer: (c) Google Gemini

Examiner note: Option (d) is a common wrong answer because students associate OpenCV with AI. OpenCV is a computer vision library — it does not generate text and is not an LLM.

FAQ

Q1. Is Generative AI only about text? What about images and videos?

No — Generative AI covers any modality where new content is created. Text generation (LLMs like Gemini), image generation (Canva AI, Midjourney), video generation (Animaker), audio generation, and code generation are all branches of Generative AI. For your CBSE exam, you are expected to know examples across at least text, image, and video — all three are named in the syllabus activities.

Q2. How is Generative AI different from the AI topics I studied in Class 10 and 11?

In Class 10, you studied supervised and unsupervised ML — models that classify or cluster existing data. In Class 11, you studied Neural Networks and NLP — models that recognise patterns in structured inputs. Generative AI is a step beyond both: it uses the patterns learned during training to produce entirely new outputs. Think of it as the difference between a model that identifies a cat in a photo (discriminative) and a model that draws a new cat from scratch (generative).

Q3. Will the Gemini API practical be evaluated in my Class 12 exam?

The Python code to initialise the Gemini API and create a chatbot is marked “For Advanced Learners” in the CBSE PDF — which means it is not evaluated in theory or practical exams. However, using Google Gemini to craft prompts and generate text outputs IS a standard activity you should include in your practical file documentation. Do not confuse the two.

Every tool in your Class 12 AI practical — Gemini, Canva, Animaker — is controlled through prompts. The student who understands how to write a good prompt does not just complete the activity. They understand why the output came out the way it did — and that understanding is exactly what your viva examiner is testing.