Every year, crores of rupees’ worth of satellite hardware rides on algorithms that must work perfectly, because there is no repair crew in orbit.

What you’ll learn:

- How ISRO uses AI across real missions, from launch to Earth observation

- The four ethical questions any public-funded AI system must answer

- How to apply the CBSE stakeholder-bias-transparency-accountability framework to a positive AI case

What Is ISRO and Why Does It Matter for AI Students?

The Indian Space Research Organisation is India’s national space agency, operating under the Department of Space. Founded in 1969, ISRO has grown from building its first satellite — Aryabhata, launched in 1975 — into one of the world’s most cost-efficient space programmes.

For CBSE AI students, ISRO is significant for a specific reason: it is one of the clearest examples of AI being used at national scale, funded by public money, for public benefit. That combination — scale, public funding, and direct societal impact — makes it an ideal case for ethical analysis.

ISRO’s missions span satellite communication, weather forecasting, remote sensing, planetary exploration, and launch vehicle development. AI and machine learning are now embedded across nearly every one of these domains.

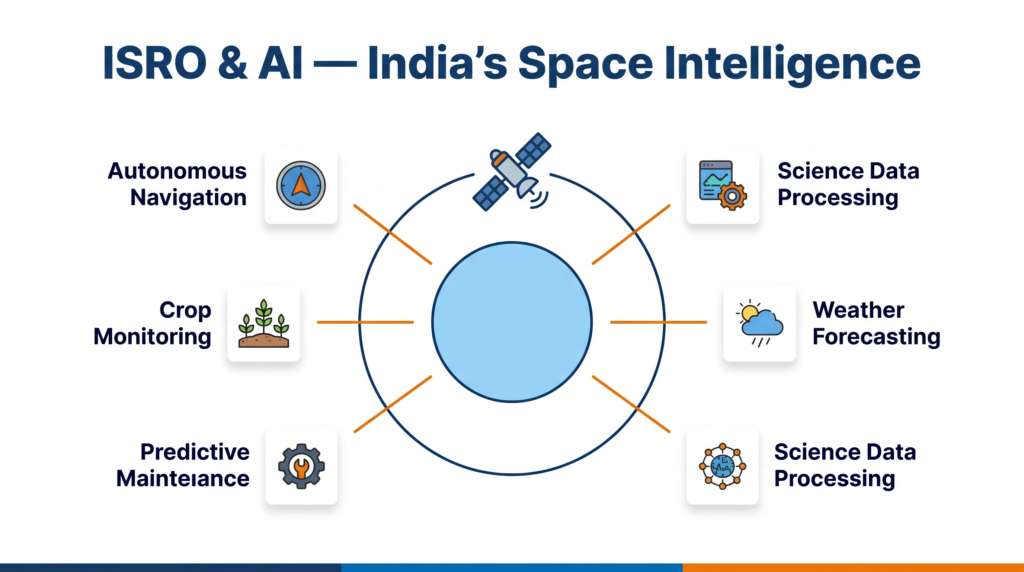

How ISRO Uses AI: Six Real Applications

1. Autonomous Navigation and Orbit Correction

Spacecraft cannot wait for a human command when a correction is needed — the communication delay between Earth and a deep-space probe can be several minutes each way. ISRO uses onboard AI systems that monitor sensor data and make micro-corrections in real time.

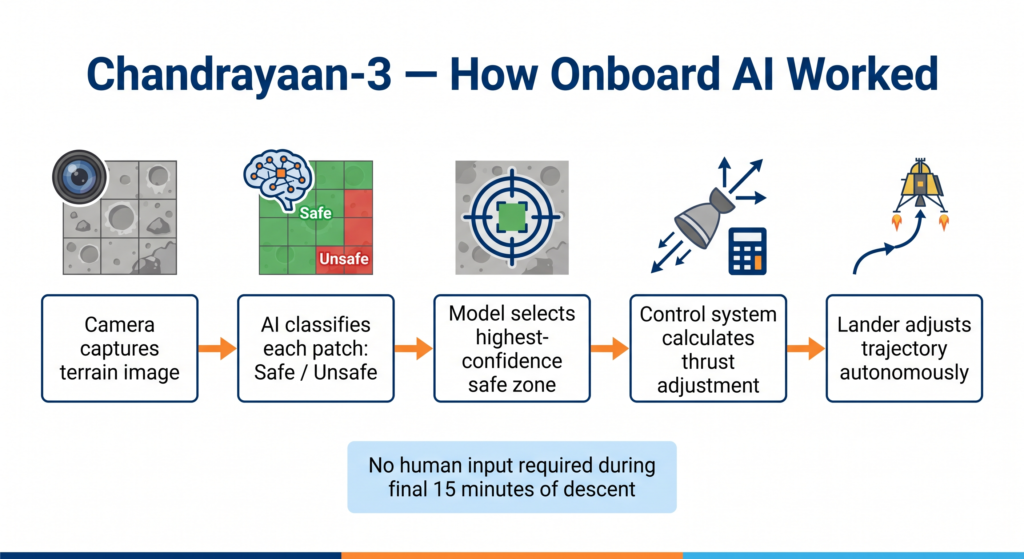

Chandrayaan-3, launched in July 2023, used an autonomous hazard detection and avoidance system during its final descent to the lunar surface. The system analysed terrain images from its onboard cameras, identified safe landing zones, and adjusted the lander’s trajectory without waiting for ground control input. This is autonomous, sensor-driven decision-making applied to one of the most mission-critical moments in India’s space history.

📌 Class 9 — Note: This is an example of AI making decisions based on sensor data, similar to how a robot avoids obstacles. The system was tested extensively in simulations and ground trials before the actual mission.

📌 Class 10/11 — Note: At its core, the hazard detection task is a classification problem: each patch of terrain is classified as safe or unsafe, and the highest-confidence safe zone is then selected.

📌 Class 12 — Note: Conceptually, the end-to-end pipeline combines computer vision (terrain image analysis), a hazard classifier (for example, a decision tree or a neural network), and a control system that converts the classification output into physical thrust commands.
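To make the classify-then-select idea concrete, here is a minimal Python sketch. It is not ISRO’s algorithm: the patch IDs, the two features (slope in degrees and boulder fraction), the logistic weights and the 0.5 threshold are all hypothetical, and a simple logistic score stands in for whatever classifier a real lander uses.

```python
import math

# Hypothetical terrain patches: (patch_id, slope_deg, boulder_fraction).
# Values are invented; a real system would derive them from camera images.
PATCHES = [
    ("A1", 12.0, 0.30),
    ("A2", 4.0, 0.05),
    ("B1", 8.0, 0.10),
    ("B2", 2.5, 0.20),
]

def safe_probability(slope_deg, boulder_fraction):
    """Toy logistic score: flatter, less rocky patches get a higher 'safe' confidence.
    The weights are illustrative assumptions, not values from any real lander."""
    z = 4.0 - 0.4 * slope_deg - 10.0 * boulder_fraction
    return 1.0 / (1.0 + math.exp(-z))

def choose_landing_patch(patches, threshold=0.5):
    """Classify each patch as safe or unsafe, then return the most confident safe patch."""
    scored = [(pid, safe_probability(slope, boulders)) for pid, slope, boulders in patches]
    safe = [(pid, p) for pid, p in scored if p >= threshold]
    if not safe:
        return None  # nothing safe: a real system would need a fallback plan
    return max(safe, key=lambda item: item[1])

print(choose_landing_patch(PATCHES))
```

With these made-up numbers, patches A2 and B2 pass the threshold, and A2 (flat, with few boulders) is chosen with a safety score of about 0.87. Returning `None` when no patch is safe reflects the fact that a real lander needs a fallback, which is outside this sketch.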

2. Earth Observation and Crop Monitoring

ISRO operates the RESOURCESAT and CARTOSAT satellite series, which capture high-resolution images of the Indian subcontinent. The data volume is enormous — processing it manually is impossible.

ISRO’s National Remote Sensing Centre (NRSC) in Hyderabad uses machine learning models to automatically classify land use from satellite imagery. This data feeds into the Pradhan Mantri Fasal Bima Yojana (PMFBY) crop insurance scheme, where satellite-based crop health assessment is used to verify insurance claims without requiring physical inspection of every farm.

The model identifies crop types, estimates vegetation health using the Normalised Difference Vegetation Index (NDVI), and flags areas likely to have suffered damage from drought, flood, or pest infestation.
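NDVI itself is a simple formula: NDVI = (NIR - Red) / (NIR + Red), where NIR and Red are reflectance values in the near-infrared and red bands. Healthy vegetation reflects strongly in near-infrared and absorbs red light, so its NDVI is high. The sketch below computes NDVI per pixel and flags low values; the sample reflectances and the 0.3 stress threshold are hypothetical, since real thresholds vary by crop, season and sensor.

```python
# Hypothetical (NIR, red) surface reflectance pairs for three pixels, values 0 to 1.
sample_pixels = [(0.50, 0.08), (0.30, 0.20), (0.45, 0.10)]

def ndvi(nir, red):
    """Normalised Difference Vegetation Index for one pixel, in the range -1 to 1."""
    total = nir + red
    if total == 0:
        return 0.0  # no signal in either band; returning 0 is a simplifying choice
    return (nir - red) / total

def flag_stressed(pixels, threshold=0.3):
    """Return indices of pixels whose NDVI falls below a hypothetical stress threshold."""
    return [i for i, (nir, red) in enumerate(pixels) if ndvi(nir, red) < threshold]

print([round(ndvi(nir, red), 2) for nir, red in sample_pixels])  # prints [0.72, 0.2, 0.64]
print(flag_stressed(sample_pixels))                              # prints [1]
```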

This is a supervised learning application: the model is trained on labelled satellite images (known crop types, known health states) and then applied to new images across millions of hectares.
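A minimal sketch of that train-then-apply workflow follows. It uses a nearest-centroid classifier only because it fits in a few lines; the document does not say which model NRSC uses. Each sample is a hypothetical pair of NDVI readings (early season, late season) with a known crop label, and all numbers are invented for illustration.

```python
import math
from collections import defaultdict

# Hypothetical labelled training data: ((early-season NDVI, late-season NDVI), crop label).
training = [
    ((0.35, 0.75), "wheat"),
    ((0.40, 0.80), "wheat"),
    ((0.60, 0.55), "paddy"),
    ((0.65, 0.50), "paddy"),
    ((0.10, 0.12), "fallow"),
    ((0.15, 0.10), "fallow"),
]

def train_centroids(samples):
    """Training step: average the feature vectors of each label into one centroid."""
    grouped = defaultdict(list)
    for features, label in samples:
        grouped[label].append(features)
    return {
        label: [sum(column) / len(vectors) for column in zip(*vectors)]
        for label, vectors in grouped.items()
    }

def predict(centroids, features):
    """Prediction step: return the label whose centroid is nearest (Euclidean distance)."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))

centroids = train_centroids(training)
print(predict(centroids, (0.38, 0.78)))  # prints wheat
```

Note that the classifier always returns one of the labels it was trained on: a pixel unlike anything in the training set is still forced into the nearest class, which is why the makeup of the training data matters so much.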

3. Space Debris Detection and Collision Avoidance

There are over 27,000 tracked pieces of debris in Earth orbit. For ISRO’s operational satellites — including the NavIC navigation constellation — a collision with even a small fragment can be fatal to the mission.

ISRO uses predictive AI systems that ingest radar tracking data, calculate conjunction probabilities (the likelihood that two objects will come dangerously close), and issue alerts when a manoeuvre is needed. These systems must process thousands of object-pair combinations continuously.

This is a time series forecasting and anomaly detection application — predicting future positions from current trajectories and flagging deviations that require human attention.
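The core geometry behind a conjunction check can be sketched with a deliberately simplified model: over a short window, treat the motion of one object relative to the other as a straight line, find the time of closest approach t* = -(r · v) / (v · v), and compare the miss distance with an alert threshold. This is an illustrative assumption, not ISRO’s system: real conjunction assessment propagates full orbits and uses position uncertainty to turn a miss distance into a collision probability, which this sketch omits. The 600-second window and the 1 km threshold are hypothetical.

```python
import math

def closest_approach(rel_pos, rel_vel, horizon_s):
    """Time (s) and miss distance (km) of closest approach, assuming straight-line
    relative motion during the window [0, horizon_s].

    rel_pos: (x, y, z) position of object B relative to object A, in km.
    rel_vel: (vx, vy, vz) velocity of B relative to A, in km/s.
    """
    v_sq = sum(v * v for v in rel_vel)
    if v_sq == 0:
        t_star = 0.0  # no relative motion, so the separation never changes
    else:
        t_star = -sum(r * v for r, v in zip(rel_pos, rel_vel)) / v_sq
        t_star = min(max(t_star, 0.0), horizon_s)  # look only forward, inside the window
    miss_km = math.sqrt(sum((r + v * t_star) ** 2 for r, v in zip(rel_pos, rel_vel)))
    return t_star, miss_km

def needs_alert(rel_pos, rel_vel, horizon_s=600.0, threshold_km=1.0):
    """Raise an alert if the predicted miss distance is below a hypothetical threshold."""
    _, miss_km = closest_approach(rel_pos, rel_vel, horizon_s)
    return miss_km < threshold_km

# Hypothetical debris fragment 100 km ahead, 0.5 km off-track, closing at 1 km/s.
print(closest_approach((100.0, 0.5, 0.0), (-1.0, 0.0, 0.0), 600.0))  # prints (100.0, 0.5)
print(needs_alert((100.0, 0.5, 0.0), (-1.0, 0.0, 0.0)))              # prints True
```

Running this check for every pair of tracked objects is what makes the workload large: the number of pairs grows roughly with the square of the number of objects.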

4. Weather Forecasting with INSAT-3D and INSAT-3DR

The INSAT series of meteorological satellites feeds data into the India Meteorological Department (IMD). AI models trained on decades of weather data improve the accuracy of cyclone track prediction, monsoon onset forecasting, and extreme rainfall alerts.

When Cyclone Biparjoy was approaching the Gujarat coast in June 2023, AI-enhanced prediction models gave coastal communities 72-hour advance warning with track accuracy that allowed targeted evacuation — directly saving lives.

📌 Class 11/12 — Note: Cyclone track prediction uses ensemble models — multiple ML models run in parallel, and their predictions are combined (averaged or voted on) to produce a more robust forecast than any single model alone.
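The averaging idea in the note can be shown in a few lines. The three “models” and their landfall positions below are invented; in practice forecast centres combine many full forecast runs, not three points. Averaging latitude and longitude directly is a reasonable shortcut only when the forecasts lie close together, as they do here.

```python
from statistics import mean, pstdev

# Hypothetical landfall-position forecasts (latitude, longitude) from three models.
forecasts = {
    "model_a": (23.1, 68.6),
    "model_b": (22.9, 68.9),
    "model_c": (23.2, 68.7),
}

def ensemble_track(predictions):
    """Combine member forecasts by averaging; report the spread as a rough uncertainty signal."""
    lats = [lat for lat, _ in predictions.values()]
    lons = [lon for _, lon in predictions.values()]
    centre = (mean(lats), mean(lons))
    spread = (pstdev(lats), pstdev(lons))
    return centre, spread

centre, spread = ensemble_track(forecasts)
print(f"Ensemble landfall: {centre[0]:.2f}N, {centre[1]:.2f}E "
      f"(spread {spread[0]:.2f}, {spread[1]:.2f} degrees)")
```

The spread is worth reporting alongside the average: when members disagree widely, the combined forecast is less certain, which matters when deciding how wide an evacuation zone should be.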

5. Antenna Monitoring and Predictive Maintenance

ISRO operates a network of deep space tracking stations, including the Indian Deep Space Network (IDSN) at Byalalu, near Bengaluru. The massive dish antennas require continuous monitoring. An unexpected failure during a mission-critical communication window could mean losing vital data or, in the worst case, contact with a spacecraft.

AI-based predictive maintenance systems monitor vibration sensors, motor temperature, and signal quality data to detect early signs of component degradation. By flagging parts likely to fail before they actually do, maintenance teams can schedule replacements during planned downtime rather than emergency repairs.

This is a classic predictive analytics application, using anomaly detection on time-series sensor data.
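A common baseline for this kind of anomaly detection is a rolling z-score: compare each new sensor reading with the mean and standard deviation of the readings just before it, and flag it if it lies too many standard deviations away. The sketch below assumes hypothetical vibration readings, a window of 5 and a threshold of 3; it illustrates the technique and does not describe ISRO’s monitoring software.

```python
from statistics import mean, stdev

# Hypothetical vibration readings (mm/s) from an antenna drive motor, one per hour.
vibration = [2.0, 2.1, 1.9, 2.0, 2.1, 2.0, 1.9, 2.0, 4.8, 2.1]

def rolling_anomalies(readings, window=5, z_threshold=3.0):
    """Return indices of readings lying more than z_threshold standard deviations
    from the mean of the preceding `window` readings."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu, sigma = mean(history), stdev(history)
        if sigma == 0:
            # A perfectly flat history has no spread, so any change stands out.
            is_anomaly = readings[i] != mu
        else:
            is_anomaly = abs(readings[i] - mu) / sigma > z_threshold
        if is_anomaly:
            flagged.append(i)
    return flagged

print(rolling_anomalies(vibration))  # prints [8]
```

Only index 8 (the jump to 4.8) is flagged. A limitation is visible too: once the spike enters the window it inflates the standard deviation, so the next few readings are judged against a noisier baseline. Longer windows, or excluding flagged points from the history, are common refinements.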

6. Processing Chandrayaan and Mangalyaan Science Data

The Chandrayaan-2 orbiter continues to send back scientific data, and the Mars Orbiter Mission (Mangalyaan), which operated until ground contact was lost in 2022, left behind a large data archive. Processing spectral data from onboard instruments (mapping mineral composition, identifying water ice signatures, classifying geological features) uses ML classification and clustering algorithms to speed up analysis that would otherwise take geologists years to complete manually.
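Clustering differs from the supervised crop classification described earlier: it groups similar spectra without any labels, leaving scientists to interpret what each group means. The sketch below implements plain k-means, one common clustering algorithm, chosen here for illustration (the document does not name the algorithms actually used). Each point is a hypothetical pair of reflectance values from two spectral bands, and the starting centroids are fixed so the result is reproducible.

```python
import math

# Hypothetical reflectance values in two spectral bands for six surface pixels.
spectra = [(0.10, 0.12), (0.11, 0.13), (0.09, 0.11),
           (0.30, 0.45), (0.32, 0.47), (0.31, 0.44)]

def kmeans(points, initial_centroids, iterations=10):
    """Plain k-means: repeatedly assign each point to its nearest centroid,
    then move each centroid to the mean of the points assigned to it."""
    centroids = [list(c) for c in initial_centroids]
    labels = []
    for _ in range(iterations):
        labels = [
            min(range(len(centroids)), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        for k in range(len(centroids)):
            members = [p for p, label in zip(points, labels) if label == k]
            if members:  # an empty cluster keeps its previous centroid
                centroids[k] = [sum(column) / len(members) for column in zip(*members)]
    return centroids, labels

centroids, labels = kmeans(spectra, initial_centroids=[spectra[0], spectra[3]])
print(labels)  # prints [0, 0, 0, 1, 1, 1]
```

The algorithm only says that pixels 0 to 2 resemble each other and differ from pixels 3 to 5; deciding whether a cluster corresponds to a particular mineral still needs expert interpretation.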

The CBSE Ethics Framework Applied to ISRO AI

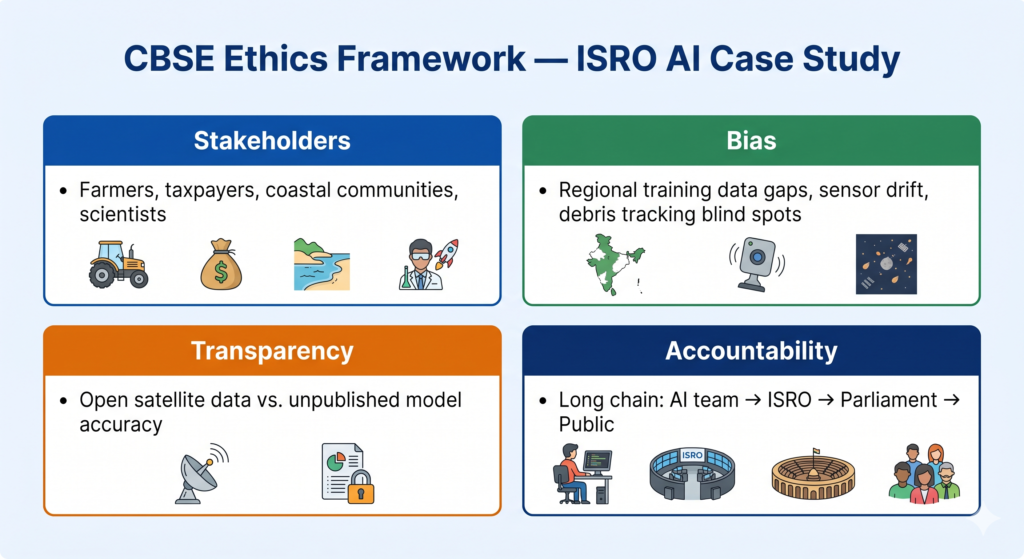

CBSE asks you to analyse AI systems using four lenses: stakeholders, bias, transparency, and accountability. Here is how each applies to ISRO’s AI use.

Stakeholders — Who Is Affected?

ISRO’s AI systems affect a wide range of groups, not just scientists and engineers:

- Farmers benefit from accurate crop monitoring that drives insurance payouts

- Coastal communities benefit from better cyclone warnings

- Taxpayers fund ISRO’s operations and have an interest in missions succeeding

- Scientists worldwide use ISRO’s open satellite data for their own research

- Future generations are affected by how well ISRO manages orbital debris today

The key stakeholder insight: space AI is not an elite technology confined to research labs. When an ISRO ML model misclassifies crop damage, a farmer may receive the wrong insurance payout. The consequences extend far beyond the satellite.

Bias — Where Can Errors Enter?

Even in space applications, data bias is a real concern:

- Training data geography: If crop classification models are trained mainly on images from wheat-growing regions of Punjab and Haryana, they may perform poorly on paddy fields in Tamil Nadu or tribal farming land in Odisha. Regional representation in training data matters.

- Sensor calibration drift: Satellite sensors degrade over time. If a model is not retrained to account for this drift, its outputs become systematically skewed — a form of data bias introduced by hardware, not human decisions.

- Debris tracking gaps: Space debris tracking data is more complete for objects in orbits frequently used by wealthy nations’ satellites. Objects in less-monitored orbits are underrepresented in training data, creating blind spots in collision avoidance systems.

📌 Class 10 callout: This is a real-world example of training data bias — one of the core topics in your Class 10 AI ethics unit.
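One practical way to catch this kind of bias is to break evaluation results down by region instead of reporting a single overall accuracy. The sketch below does that for a set of hypothetical test predictions; the regions echo the Punjab and Tamil Nadu example above, but every record is invented.

```python
from collections import defaultdict

# Hypothetical test records: (region, true crop, model's predicted crop).
records = [
    ("Punjab", "wheat", "wheat"),
    ("Punjab", "wheat", "wheat"),
    ("Punjab", "fallow", "fallow"),
    ("Punjab", "wheat", "fallow"),
    ("Tamil Nadu", "paddy", "wheat"),
    ("Tamil Nadu", "paddy", "paddy"),
    ("Tamil Nadu", "paddy", "wheat"),
    ("Tamil Nadu", "paddy", "fallow"),
]

def accuracy_by_region(rows):
    """Return the fraction of correct predictions for each region."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for region, truth, predicted in rows:
        total[region] += 1
        correct[region] += int(truth == predicted)
    return {region: correct[region] / total[region] for region in total}

overall = sum(truth == predicted for _, truth, predicted in records) / len(records)
print(f"Overall accuracy: {overall:.2f}")  # prints Overall accuracy: 0.50
print(accuracy_by_region(records))         # prints {'Punjab': 0.75, 'Tamil Nadu': 0.25}
```

The single overall figure of 0.50 hides the gap: the model is right three times out of four in Punjab but only once in four in Tamil Nadu, a pattern that points to under-represented regions in the training data.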

Transparency — Is the Public Informed?

ISRO publishes mission data, satellite imagery, and some scientific datasets openly through NRSC’s Bhuvan geoportal and the MOSDAC platform. This is a meaningful level of transparency for a government agency.

However, the specific AI models used — their training data, accuracy metrics, and known failure modes — are not routinely published. A farmer affected by an incorrect crop-damage assessment has no way to know whether the AI model was the source of the error or whether it has been independently validated.

This is a genuine transparency gap. Transparency does not only mean publishing results — it means explaining the process and uncertainty behind those results.

Accountability — Who Is Responsible When AI Fails?

This is the most difficult question in ISRO’s AI context.

When Chandrayaan-2’s lander Vikram crash-landed during its September 2019 descent, initial reports pointed to a software anomaly in the braking algorithm. The question of who was accountable (the software team, the mission director, or the review process that cleared the design) illustrated how AI-adjacent failures in complex systems create genuine accountability challenges.

For an organisation like ISRO, funded by a democratically elected government, accountability ultimately flows back to Parliament and the public. But this accountability chain is long and indirect. There is no dedicated independent auditor for ISRO’s AI systems, and no formal mechanism for citizens affected by a satellite-data-driven decision (like a denied crop insurance claim) to challenge the model’s output.

Positive Case, Real Questions

ISRO is used here as a positive case study — an example of AI being deployed thoughtfully, for public benefit, at national scale. This is deliberate. AI ethics is not only about what goes wrong. It is equally about asking the right questions even when things are going well.

The ethical analysis of ISRO’s AI is not an accusation. It is a practice: the habit of asking who benefits, who could be harmed, what we cannot see, and who answers when something fails. These are the same questions your CBSE curriculum trains you to ask about every AI system.

Practise asking them here, where the stakes are visible and the intentions are good — so you can ask them confidently later, when they are harder.

Quick Revision Box

| Term | One-Line Definition |
|---|---|
| NDVI (Normalised Difference Vegetation Index) | A satellite-derived measure of plant health that compares how strongly vegetation reflects near-infrared light versus red light |
| Autonomous navigation | AI-based control that lets a spacecraft make decisions without waiting for human input |
| Predictive maintenance | Using sensor data and ML to forecast equipment failure before it occurs |
| Stakeholder | Any person or group affected by the outcomes of an AI system |
| Training data bias | When the data used to train a model does not represent all the groups or conditions the model will encounter |

Practice Questions

2-mark question: ISRO’s Chandrayaan-3 lander used an AI system to select its landing site. Identify two stakeholders of this AI system and explain how each is affected by it.

Model answer: One stakeholder is the ISRO mission team — if the AI selects a safe landing site correctly, the mission succeeds and crores of rupees of public investment are protected. A second stakeholder is the Indian taxpayer — as the funders of ISRO, citizens have a direct interest in whether the AI system performs reliably and whether the mission achieves its scientific goals.

MCQ: ISRO’s crop monitoring ML model is trained primarily on satellite images from wheat-growing states. When the model is later applied to classify paddy crops in South India, it performs poorly. This is an example of:

(a) Overfitting (b) Training data bias (c) Model transparency failure (d) Accountability gap

Answer: (b) Training data bias — the model’s training data did not represent the full diversity of crops and regions it would encounter in deployment.

Frequently Asked Questions

Q1. Does ISRO officially use the term “artificial intelligence” in its mission documents?

Yes. ISRO and its National Remote Sensing Centre (NRSC) have published research papers and technical reports that use AI, machine learning, and deep learning terminology explicitly in the context of satellite image analysis, space debris tracking, and predictive maintenance. The Chandrayaan-3 hazard detection and avoidance system is one of the most publicly documented examples of onboard AI in an Indian space mission.

Q2. Is ISRO’s AI use relevant to my CBSE exam, or is it just general knowledge?

It is directly exam-relevant. CBSE Class 10, 11, and 12 AI syllabi include ethics analysis, AI applications, and case-study-based questions, and India-specific examples make those answers stronger. An answer that uses ISRO’s crop monitoring system to illustrate training data bias, or Chandrayaan-3 to illustrate autonomous AI, is more specific and more convincing than a generic answer.

Q3. Is AI use in space exploration ethically different from AI use in, say, social media?

In some ways, yes. ISRO’s AI is deployed in a non-commercial, public-interest context with relatively clear success metrics (did the spacecraft land safely? did the cyclone warning reach the right people?). Social media AI operates in a commercial environment with engagement-optimised objectives that can conflict with user wellbeing. However, the same four ethical questions — stakeholders, bias, transparency, accountability — apply to both. The difference is in the incentives of the organisations deploying the AI, not in the framework used to analyse it.