You have a CBSE AI exam coming up, neural networks are on the syllabus, and every explanation you find online is either too basic or so technical it reads like a research paper. This guide covers neural networks for CBSE AI the way the 2025-26 syllabus requires: clear enough for Class 10 boards, deep enough for the 8 theory marks that Class 12 Unit 6 carries.

What You’ll Learn

- What a neural network is and how it relates to AI, ML, and Deep Learning

- The parts and components of a neural network (Class 12 Unit 6 exact syllabus)

- How a neural network makes decisions — step by step

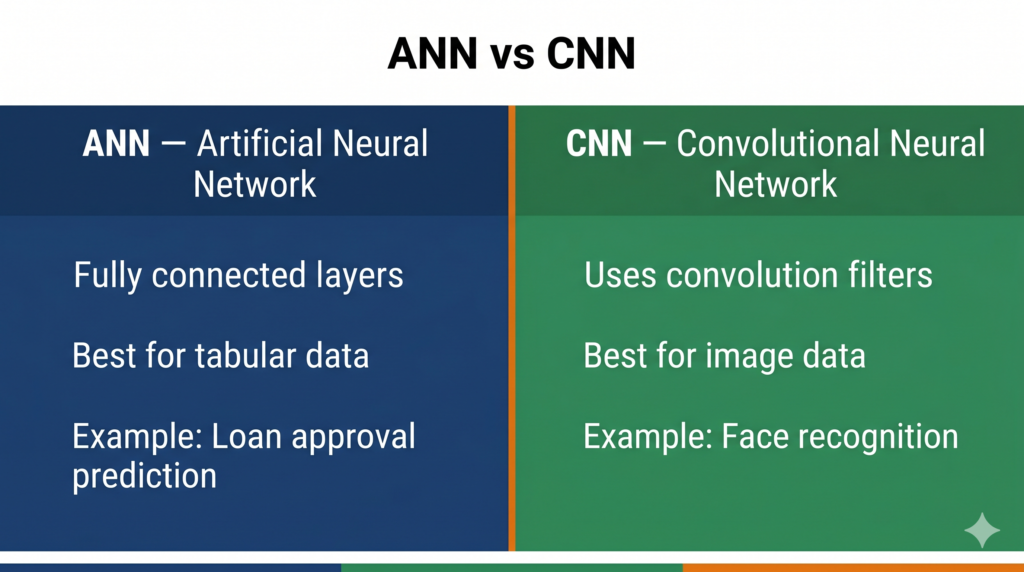

- The difference between ANN and CNN, with real exam examples

- India-relevant real-world applications you can cite in answers

- Exactly how to answer 2-mark and 4-mark questions on this topic

Section 1: The AI → ML → Deep Learning Hierarchy

Before diving into neural networks, you need to place them correctly in the bigger picture. CBSE Class 10 Unit 2 explicitly asks you to “differentiate between AI, ML, and DL.”

Artificial Intelligence (AI) is the broadest idea — making machines do tasks that normally need human intelligence.

Machine Learning (ML) is one way to achieve AI — the machine learns from data instead of being given fixed rules.

Deep Learning (DL) is a subset of ML that uses a specific structure called a neural network — inspired by the human brain. Deep Learning is what powers face recognition on your phone, voice assistants like Google Assistant, and tools like ChatGPT.

Think of it as three nested circles:

AI ⊃ Machine Learning ⊃ Deep Learning (Neural Networks)

📌 Class 10 Board Tip: If a question asks “How is Deep Learning different from Machine Learning?”, your answer must mention that Deep Learning uses neural networks modelled on the human brain, whereas general ML includes simpler methods like decision trees and regression.

Section 2: What Is a Neural Network?

A neural network is a computing system built from layers of connected units called neurons (also called nodes), loosely inspired by how neurons in the human brain pass signals to each other.

The key idea: instead of a programmer writing rules like “if pixel brightness > 200, it’s probably a face,” the neural network learns the rules from thousands of examples. You show it 10,000 images of cats and 10,000 images of dogs, and it figures out on its own what pattern separates them.

The Human Brain Analogy

Your brain has about 86 billion neurons. Each neuron connects to thousands of others. When you touch something hot, signals travel across neurons — and you pull your hand back. Neural networks work similarly: information enters, travels through connected nodes, and exits as a decision or prediction.

📌 Class 12 Board Tip: The CBSE Class 12 Unit 6 syllabus is titled “Understanding Neural Networks.” The examiner expects you to explain both the biological inspiration AND the computational structure.

Section 3: Parts and Components of a Neural Network

(Class 12 Unit 6 — exact syllabus sub-unit: “Parts of a Neural Network” and “Components of a Neural Network”)

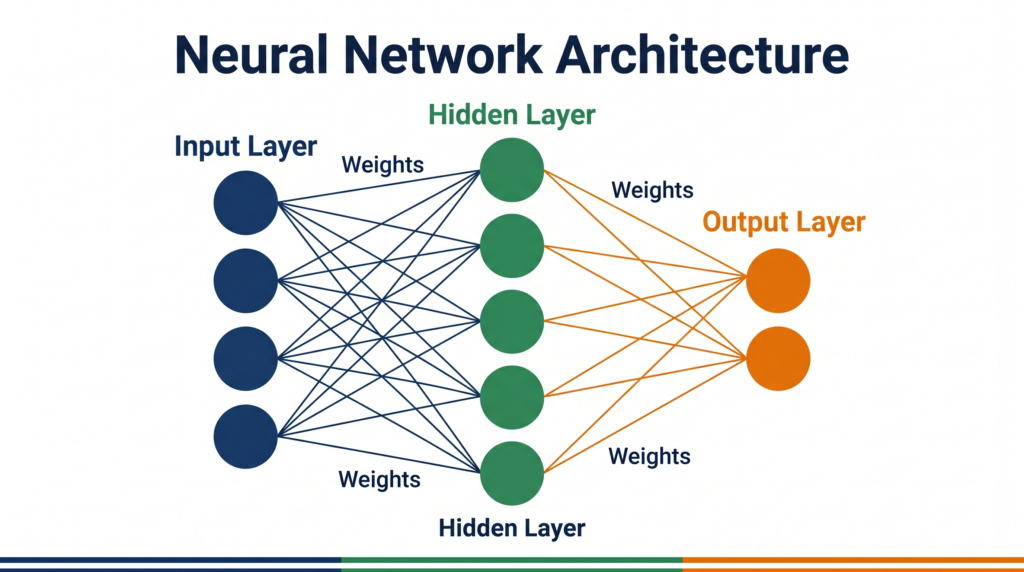

Every neural network — no matter how simple or complex — has three types of layers.

3.1 Input Layer

This is the entry point. Each node in the input layer represents one feature of your data. If you are classifying images of size 28×28 pixels, the input layer has 784 nodes (one per pixel). If you are predicting house prices from 5 features (area, rooms, location, age, floor), the input layer has 5 nodes.

The input layer does not perform any calculation — it just receives data and passes it forward.
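As a small illustration of how a 28×28 image becomes 784 input values, the sketch below flattens a grid of pixels into one list. The all-black image and the helper name `flatten` are placeholders invented for this example.

```python
# Hypothetical 28x28 grayscale image: every pixel is 0 (black) for simplicity.
image = [[0 for _ in range(28)] for _ in range(28)]

def flatten(grid):
    """Turn a 2-D grid of pixel values into one flat list, row by row."""
    return [pixel for row in grid for pixel in row]

input_values = flatten(image)
print(len(input_values))  # 784, one value per input node
```

Notice that no calculation happens here: the values are only rearranged so that each one can be handed to its own input node.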

3.2 Hidden Layers

These are the processing layers between input and output. A network can have one hidden layer or many (a network with multiple hidden layers is called a deep neural network — hence “Deep Learning”).

Each node in a hidden layer:

- Receives values from all nodes in the previous layer

- Multiplies each value by a weight (a number representing how important that connection is)

- Adds a bias (a small correction value)

- Passes the result through an activation function that decides whether the node “fires” or not

The hidden layers are where the network learns patterns — edges in images, tone in speech, keywords in text.
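The four steps a hidden-layer node performs can be sketched in a few lines of Python. This is a minimal illustration, assuming ReLU as the activation function; the input values, weights, and bias are made-up numbers, not values from a trained network.

```python
def relu(z):
    """ReLU activation: pass positive values through, turn negatives into 0."""
    return max(0.0, z)

def neuron_output(inputs, weights, bias):
    """Weighted sum of the inputs, plus the bias, passed through the activation."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return relu(z)

# Illustrative numbers only (not from any trained model).
inputs = [1.0, 2.0, 3.0]
weights = [0.5, -1.0, 2.0]
bias = 0.5

print(neuron_output(inputs, weights, bias))  # 0.5 - 2.0 + 6.0 + 0.5 = 5.0
```

If the weighted sum plus bias had been negative, ReLU would have returned 0, which is the code version of the node not firing.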

3.3 Output Layer

This is the final layer. It produces the network’s answer:

- For a classification task (is this a cat or dog?): the output layer has 2 nodes, each giving a probability

- For a regression task (what will tomorrow’s temperature be?): the output layer has 1 node giving a number
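One common way to turn the raw scores of the output nodes into probabilities is the softmax function. The sketch below assumes softmax and uses made-up scores for the cat and dog nodes; the explanation above does not say which function a particular network uses.

```python
import math

def softmax(scores):
    """Convert raw output-node scores into probabilities that sum to 1."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]  # subtracting the max avoids overflow
    total = sum(exps)
    return [e / total for e in exps]

# Hypothetical raw scores from the two output nodes, in the order [cat, dog].
labels = ["cat", "dog"]
probabilities = softmax([2.0, 0.5])

# The predicted class is the node with the highest probability.
prediction = labels[probabilities.index(max(probabilities))]
print(prediction, [round(p, 2) for p in probabilities])
```

For a regression task the single output node would usually skip this step and report its number directly.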

3.4 Key Components: Weights, Bias, Activation Function

| Component | What It Does |
|---|---|
| Weight | Controls the strength of a connection between two neurons; learned during training |
| Bias | Allows the model to shift its output even when all inputs are zero |
| Activation Function | Decides whether a neuron should “fire”; examples: ReLU, Sigmoid |
| Loss Function | Measures how wrong the network’s answer is |
| Backpropagation | The process of adjusting weights to reduce the error, working backwards through the network |

📌 Class 12 Board Tip: A 4-mark question on components typically expects: input layer, hidden layer(s), output layer, weights, and at least one mention of activation function. Use a diagram if allowed.
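To make the activation function and loss function entries concrete, here is a short sketch of Sigmoid, ReLU, and one simple loss. Squared error is used as the loss purely as an assumption for this example; the syllabus text does not name a specific loss function.

```python
import math

def sigmoid(z):
    """Squash any number into the range 0 to 1."""
    return 1.0 / (1.0 + math.exp(-z))

def relu(z):
    """Keep positive values, replace negative values with 0."""
    return max(0.0, z)

def squared_error(predicted, target):
    """A simple loss: the squared gap between the prediction and the correct value."""
    return (predicted - target) ** 2

print(sigmoid(0.0))   # 0.5
print(relu(-3.0))     # 0.0
print(squared_error(0.9, 1.0))  # a small loss, because 0.9 is close to the target 1.0
```

A larger gap between prediction and target gives a larger loss, which is the signal that training tries to shrink.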

Section 4: How Does a Neural Network Work? (How AI Makes a Decision)

(Class 10 Unit 2 sub-unit: “How does AI make a Decision?” — exact syllabus wording)

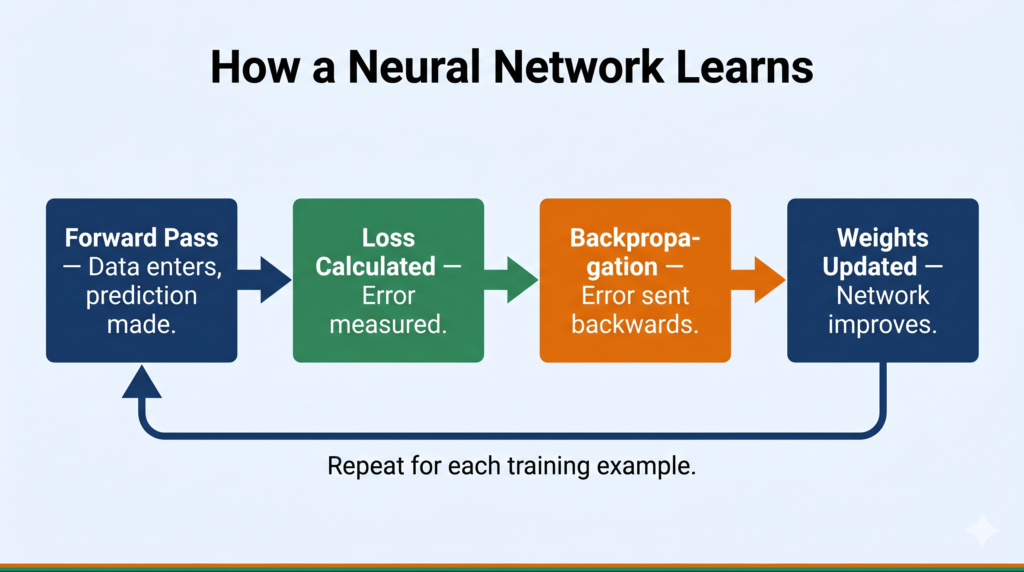

Here is what happens when a neural network processes one piece of data — say, an image of a mango:

Step 1 — Forward Pass: The image’s pixel values enter the input layer. Each pixel value is multiplied by weights, summed, and passed through an activation function at each hidden layer node. This continues layer by layer until the output layer produces a result: “mango: 92% probability, apple: 8% probability.”

Step 2 — Compare with Correct Answer: The network’s guess is compared to the correct label (“mango”). The difference is the loss (error).

Step 3 — Backpropagation: The error travels backwards through the network. Each weight is adjusted slightly — connections that contributed to the wrong answer are weakened; those that contributed to the right answer are strengthened. This is done using a technique called gradient descent.

Step 4 — Repeat: This process repeats for thousands or millions of examples (called training). After training, the weights are locked, and the network can correctly identify mangoes it has never seen before.

This is exactly how AI “makes a decision” — it is not magic, it is mathematics applied repeatedly until the patterns are learned.
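The four steps can be sketched in plain Python, assuming the smallest possible network: a single neuron with no activation function that learns to convert Celsius to Fahrenheit. The data points, the learning rate, and the input scaling (dividing by 100) are choices made for this illustration, and this is not the code of the CBSE Keras activity. With only one neuron, backpropagation reduces to computing two gradients directly.

```python
# Training data: Celsius inputs with their correct Fahrenheit labels.
celsius = [-40.0, -10.0, 0.0, 8.0, 15.0, 22.0, 38.0]
fahrenheit = [c * 1.8 + 32.0 for c in celsius]

# Scale inputs to a small range so gradient descent stays stable
# (an assumption of this sketch, not a syllabus requirement).
xs = [c / 100.0 for c in celsius]

weight, bias = 0.0, 0.0   # parameters start with no knowledge
learning_rate = 0.5       # hyperparameter chosen for this example
n = len(xs)

for epoch in range(1000):
    # Step 1: forward pass
    predictions = [weight * x + bias for x in xs]
    # Step 2: compare with the correct answers (these errors define the mean squared error)
    errors = [p - y for p, y in zip(predictions, fahrenheit)]
    # Step 3: gradients of the loss with respect to the weight and the bias
    grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
    grad_b = 2.0 / n * sum(errors)
    # Step 4: gradient descent update, then repeat
    weight -= learning_rate * grad_w
    bias -= learning_rate * grad_b

def predict_fahrenheit(c):
    """Use the trained weight and bias on a Celsius value not seen in training."""
    return weight * (c / 100.0) + bias

print(round(predict_fahrenheit(100.0), 1))  # 212.0
```

The value 100 °C never appears in the training list, so the last line shows the trained neuron handling an input it has not seen before.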

Section 5: Types of Neural Networks

(Class 10 Unit 2 sub-unit: “Subcategories of Deep Learning: ANN, CNN” | Class 12 Unit 6 sub-unit: “Types of Neural Networks”)

5.1 Artificial Neural Network (ANN)

An ANN is the basic form of neural network — a network of layers where every node in one layer is connected to every node in the next. This is also called a Fully Connected Network or Dense Network.

ANNs are best for structured, tabular data: predicting whether a student will pass an exam, whether a loan application should be approved, or what tomorrow’s sales will be.

Example: ISRO uses ANN-type models to predict satellite telemetry anomalies based on sensor readings.
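What "fully connected" means can be seen in a short sketch: every hidden node receives every input value. The student features, weights, and biases below are invented for illustration, and ReLU is assumed as the activation.

```python
def dense_layer(inputs, weights, biases):
    """Fully connected layer: every output node uses every input value."""
    outputs = []
    for node_weights, node_bias in zip(weights, biases):
        z = sum(x * w for x, w in zip(inputs, node_weights)) + node_bias
        outputs.append(max(0.0, z))  # ReLU activation
    return outputs

# Hypothetical student features: [hours studied, attendance, past score]
student = [2.0, 1.0, 0.5]
hidden_weights = [[1.0, 0.0, 2.0],    # weights into hidden node 1
                  [-1.0, 1.0, 0.0]]   # weights into hidden node 2
hidden_biases = [0.0, 0.5]

hidden = dense_layer(student, hidden_weights, hidden_biases)
connections = len(student) * len(hidden_weights)

print(hidden)       # [3.0, 0.0]
print(connections)  # 6 connections: 3 inputs x 2 hidden nodes
```

The connection count grows as inputs multiplied by nodes, which is one reason fully connected layers become expensive for large images.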

5.2 Convolutional Neural Network (CNN)

A CNN is a specialised neural network designed specifically for image data. Instead of every node connecting to every other, CNNs use a special operation called convolution — a small filter (kernel) slides across the image and detects features like edges, textures, and shapes.

CNNs have three key layer types:

- Convolutional Layer: detects features using filters

- Pooling Layer: reduces the size of the data while keeping important features

- Fully Connected Layer: makes the final classification decision

Example: Face unlock on your smartphone uses a CNN to match your face against stored data in milliseconds.

📌 Class 12 Board Tip: A common 4-mark question is “Differentiate between ANN and CNN.” See the comparison table in Section 6.
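The convolution and pooling operations described in this section can be sketched in plain Python. This is a simplified illustration: the 5×5 image and the 2×2 edge filter are made up, there is no padding, the filter moves one pixel at a time, and (as in most deep learning libraries) the filter is applied without flipping.

```python
def convolve2d(image, kernel):
    """Slide a square kernel over the image and sum the element-wise products."""
    k = len(kernel)
    out_h = len(image) - k + 1
    out_w = len(image[0]) - k + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(k) for j in range(k))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]

def max_pool2x2(feature_map):
    """Keep the largest value in each non-overlapping 2x2 block."""
    return [
        [
            max(feature_map[r][c], feature_map[r][c + 1],
                feature_map[r + 1][c], feature_map[r + 1][c + 1])
            for c in range(0, len(feature_map[0]) - 1, 2)
        ]
        for r in range(0, len(feature_map) - 1, 2)
    ]

# Made-up 5x5 image: dark (0) on the left, bright (1) on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# A simple vertical-edge filter: responds where brightness rises from left to right.
kernel = [[-1, 1],
          [-1, 1]]

feature_map = convolve2d(image, kernel)  # 4x4 feature map
pooled = max_pool2x2(feature_map)        # 2x2 summary after pooling

print(feature_map[0])  # [0, 2, 0, 0]
print(pooled)          # [[2, 0], [2, 0]]
```

The non-zero values mark where the dark-to-bright edge sits, and pooling keeps that information while shrinking the 4×4 map to 2×2.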

5.3 Other Types (Beyond ANN and CNN)

(Mentioned in CBSE Class 12 Unit 6 sub-unit: “Types of Neural Networks” — include for completeness, not exam-critical for Class 10)

- Recurrent Neural Network (RNN): Designed for sequential data like text and speech — each output feeds back as input, giving the network “memory.” Used in translation tools like Google Translate.

- LSTM (Long Short-Term Memory): An advanced RNN that handles very long sequences — used in voice assistants. (For Advanced Learners)
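(For Advanced Learners) The "memory" of an RNN can be sketched with a single recurrent unit whose state is carried from one step to the next. The weights below are illustrative rather than trained, and `tanh` is assumed as the activation.

```python
import math

def rnn_step(x, h_prev, w_x, w_h, b):
    """One recurrent step: combine the new input with the previous hidden state."""
    return math.tanh(w_x * x + w_h * h_prev + b)

# Illustrative weights, not trained values.
W_X, W_H, B = 0.5, 0.8, 0.0

def run_sequence(sequence):
    """Feed a sequence through the unit one item at a time and return the final state."""
    h = 0.0  # the hidden state ("memory") starts empty
    for x in sequence:
        h = rnn_step(x, h, W_X, W_H, B)
    return h

# Both sequences end with the same input (0.0) but have different histories.
with_history = run_sequence([1.0, 0.0])
without_history = run_sequence([0.0, 0.0])
print(with_history, without_history)
```

The two final states differ even though the last input is identical, because the first sequence still carries a trace of its earlier input.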

Section 6: Key Differences — ANN vs CNN

| Feature | ANN | CNN |
|---|---|---|
| Full name | Artificial Neural Network | Convolutional Neural Network |
| Best for | Structured/tabular data | Image and video data |
| Connection type | Fully connected (every neuron in one layer to every neuron in the next) | Local connections via filters/kernels |
| Key operation | Weighted sum + activation | Convolution + pooling |
| Data size needed | Moderate | Large (thousands of images) |
| Example application | Sales prediction, loan approval | Face recognition, medical imaging |
| CBSE reference | Class 10 Unit 2, Class 12 Unit 6 | Class 10 Unit 2 (CV section), Class 12 Unit 6 |

Section 7: Real-World Applications

Neural networks are not theoretical concepts — they are running right now inside apps and systems you use every day.

1. Aadhaar Biometric Authentication: The UIDAI’s Aadhaar system uses neural network-based models to match fingerprints and iris scans against a database of over 1.3 billion records. Every time someone authenticates via Aadhaar, a neural network is making that decision in milliseconds.

2. Zomato Food Recommendation: When Zomato shows you “recommended for you,” that is a neural network analysing your past orders, time of day, location, and trending dishes to predict what you are likely to order next.

3. Google Pay Fraud Detection: Every UPI transaction on Google Pay is screened by neural networks that flag unusual patterns (a large transfer at 3 AM to an unknown account, for example) in real time before the transaction completes.

4. ISRO’s Remote Sensing: India’s Remote Sensing satellites generate thousands of images daily. Neural networks classify land use, detect flood-affected zones, and track crop health automatically, tasks that would take human analysts months.

Section 8: Common Mistakes Students Make

Mistake 1: Saying “neural networks are exactly like the human brain”. Wrong. Neural networks are inspired by the brain, but they are vastly simpler. The human brain has 86 billion neurons with trillions of connections; a typical classroom neural network has a few dozen nodes. Examiners look for the phrase “inspired by”, not “same as.”

Mistake 2: Confusing ANN and CNN. Some students write “CNN is just a better ANN.” Wrong. CNN uses convolution operations and is specifically designed for spatial data like images. ANN is designed for flat, tabular data. They are different architectures, not versions of the same thing.

Mistake 3: Skipping the three-layer structure. When asked “what is a neural network,” many students describe neurons but forget to name the three layers explicitly: input, hidden, and output. All three must appear in a complete answer.

Mistake 4: Describing training as “the computer guesses randomly”. Training is a systematic process: forward pass, loss calculation, backpropagation, weight update. It is not random. If a question asks how a network learns, use this sequence.

Section 9: Exam Strategy

Class 10 — Marks Pattern

Neural networks appear in Unit 2: Advanced Concepts of Modeling in AI. Typical question types:

- 2-mark: “What is a neural network? Give one example.” → Define + one real-world example.

- 2-mark: “Name two subcategories of Deep Learning.” → ANN and CNN.

- 4-mark: “Explain how a neural network makes a decision.” → Use the 4-step process from Section 4.

Class 12 — Marks Pattern

Neural networks appear in Unit 6: Understanding Neural Networks — worth 8 marks theory (Part B, 8 theory hours + 12 practical hours).

- 2-mark: “Define neural network and name its layers.” → Three layers + definition.

- 4-mark: “Differentiate between ANN and CNN with examples.” → Use the table in Section 6 plus one example each.

- 4-mark: “Explain the components of a neural network.” → Weights, bias, activation function, loss function, backpropagation.

- Practical: TensorFlow Playground classification problem (evaluated in practicals).

📌 Class 12 Important: The Python Keras neural network activity (predicting Fahrenheit from Celsius) is marked For Advanced Learners — it is not evaluated in theory or practical exams. Do not worry about memorising code for the board exam.

Section 10: Try It Yourself — CBSE Activities

Both the Class 10 and Class 12 CBSE PDFs specify these activities. Do them before your practical exam.

Activity 1: Human Neural Network — The Game (Class 10)

This is a classroom activity where students physically act as neurons — passing information (a number, a signal, a decision) through a chain of people, each applying a simple rule. It makes the concept of forward propagation tangible.

How to document it for your practical file: Note the “rule” each node (person) applied, what input entered, and what decision came out at the end.
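If it helps to see the game as code, the sketch below models each student as a function that applies one rule and passes the result on. The three rules and the starting number are invented for illustration; your class will use its own.

```python
# Each "student" applies one simple rule to the number they receive.
def student_a(value):
    return value * 2          # rule: double it

def student_b(value):
    return value + 3          # rule: add three

def student_c(value):
    return "YES" if value > 10 else "NO"   # rule: make the final decision

def pass_along(signal, chain):
    """Send the signal through each person in order, recording every step."""
    log = [signal]
    for rule in chain:
        signal = rule(signal)
        log.append(signal)
    return log

print(pass_along(5, [student_a, student_b, student_c]))  # [5, 10, 13, 'YES']
```

The printed list has the same shape as a practical-file entry: the input, what each node produced, and the final decision.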

Activity 2: TensorFlow Playground (Class 10 & Class 12)

Visit playground.tensorflow.org — free, runs in your browser, no installation needed.

What to do (5 steps):

- Select the “Circle” dataset (two classes — inner circle and outer ring)

- Set hidden layers to 2, with 4 neurons each

- Click the Play button and watch the network train

- Observe the decision boundary changing in the visualisation panel on the right

- Try increasing or decreasing the number of hidden layers, and note how the test loss changes

What to note for your practical file:

- Number of epochs before the test loss stops falling (the Playground displays training and test loss, not an accuracy percentage)

- What happened when you added more hidden layers

- Screenshot of the final decision boundary

Activity 3: ML for Kids — Animals and Birds (Class 12)

Visit machinelearningforkids.co.uk — the CBSE Class 12 PDF specifies this for creating a neural network to identify animals and birds.

What to do:

- Create a new project → Image recognition

- Add training examples for at least 2 categories (e.g., cats and dogs)

- Train the model and test it with new images

- Note the confidence percentage the model gives for each prediction

Section 11: Quick Revision Box

| Term | Definition |
|---|---|
| Neural Network | Computing system with layers of connected nodes that learn from data |
| Neuron / Node | Basic unit of a neural network; receives, processes, and passes information |
| Input Layer | First layer; receives raw data (pixels, numbers, text features) |
| Hidden Layer | Middle layer(s); where patterns are learned |
| Output Layer | Final layer; gives the network’s prediction or decision |
| Weight | Numerical value controlling the strength of a connection between neurons |
| Bias | Correction value that shifts the output independent of the input |
| Activation Function | Decides whether a neuron fires; examples: ReLU (hidden layers), Sigmoid (output) |
| Backpropagation | Process of adjusting weights by sending error signals backwards through the network |
| ANN | Artificial Neural Network: fully connected, best for tabular data |
| CNN | Convolutional Neural Network: uses filters, best for image data |
| Deep Learning | ML using neural networks with multiple hidden layers |
| Training | Repeated forward pass + loss calculation + backpropagation until weights stabilise |
| Epoch | One complete pass through the entire training dataset |

Section 12: Practice Questions

Question 1 (2 marks) What is a neural network? Name its three layers.

Model Answer: A neural network is a computing system made up of layers of connected nodes (neurons) that can learn patterns from data, loosely inspired by the structure of the human brain. Its three layers are: (1) Input layer — receives the raw data, (2) Hidden layer(s) — processes and learns patterns, (3) Output layer — produces the final prediction or decision.

Question 2 (4 marks) Explain how a neural network makes a decision. Your answer should describe the role of weights, loss function, and backpropagation.

Model Answer: A neural network makes a decision through a process of training and inference.

During training, data enters through the input layer. At each neuron, the input values are multiplied by weights — numbers that represent the importance of each connection. These weighted values are summed and passed through an activation function. This process, called a forward pass, produces the network’s initial prediction.

The prediction is compared to the correct answer using a loss function, which measures the error. To reduce this error, backpropagation is used — the error signal travels backwards through the network, and each weight is adjusted slightly to reduce future errors. This cycle repeats for thousands of examples until the network’s predictions become accurate.

Once trained, the network applies its learned weights to new, unseen data and produces a prediction — this is called inference, or how the AI “makes a decision.”

Question 3 (MCQ) Which type of neural network uses a convolution operation to extract features from images?

(a) ANN — Artificial Neural Network (b) RNN — Recurrent Neural Network (c) CNN — Convolutional Neural Network (d) DNN — Dense Neural Network

Answer: (c) CNN — Convolutional Neural Network

Explanation: CNNs use a convolution operation where a small filter (kernel) slides across an image to detect features like edges, corners, and textures. This makes CNNs especially effective for image recognition, face detection, and computer vision tasks.

Section 13: FAQ

Q1. Is a neural network the same as deep learning? Not exactly. A neural network is the structure: layers of connected nodes. Deep Learning is the practice of using neural networks with multiple hidden layers. A network with just one hidden layer is technically a neural network but not usually called “deep.” When you hear “deep learning,” think: a neural network that is deep enough (many hidden layers) to learn very complex patterns.

Q2. Do I need to memorise the backpropagation formula for CBSE? No. The CBSE syllabus expects you to understand what backpropagation does — it adjusts weights by sending error signals backwards through the network — not the mathematical calculus behind it. Explaining the concept clearly in your own words is sufficient for full marks.

Q3. What is the difference between a parameter and a hyperparameter in a neural network? A parameter is something the network learns on its own during training — weights and biases are parameters. A hyperparameter is something you set before training begins — number of layers, number of neurons per layer, learning rate, and number of epochs are hyperparameters. You choose hyperparameters; the network finds parameters. (This distinction may appear in Class 12 questions.)
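A short sketch can make the split concrete: you choose the layer sizes (hyperparameters), and that choice fixes how many weights and biases (parameters) the network must learn. The sizes 784, 128, and 10 are taken from the digit-recogniser project idea in this guide; the function name is made up for this example.

```python
def count_parameters(layer_sizes):
    """Count the weights and biases in a fully connected network."""
    total = 0
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        total += n_in * n_out   # one weight per connection between the two layers
        total += n_out          # one bias per node in the receiving layer
    return total

# Hyperparameters you choose: nodes in the input, hidden, and output layers.
layer_sizes = [784, 128, 10]

print(count_parameters(layer_sizes))  # 101770
```

Changing one hyperparameter, such as the hidden layer size, changes the number of parameters the network has to learn during training.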

Q4. Why does adding more hidden layers sometimes make performance worse? Adding too many hidden layers without enough data or proper settings can cause two problems. First, overfitting — the network memorises training examples instead of learning patterns, and fails on new data. Second, the vanishing gradient problem — in very deep networks, the error signal becomes so small during backpropagation that early layers stop learning effectively. This is why modern networks use techniques like ReLU activation and batch normalisation. (Conceptual understanding expected in Class 12; not required for Class 10.)
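(For Advanced Learners) A rough way to see the vanishing gradient problem: the slope of the Sigmoid function is never larger than 0.25, and backpropagation multiplies one such slope per layer. The sketch below shows only this best-case factor and ignores the weights, which is a deliberate simplification.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_slope(z):
    """Derivative of sigmoid; its largest possible value is 0.25, reached at z = 0."""
    s = sigmoid(z)
    return s * (1.0 - s)

def best_case_signal(num_layers):
    """Largest slope factor left after passing back through that many sigmoid layers."""
    return sigmoid_slope(0.0) ** num_layers

for layers in (2, 5, 10):
    print(layers, best_case_signal(layers))
```

Even in this best case, the factor after 10 layers is below one millionth, which is why the earliest layers receive almost no learning signal.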

Q5. Where are neural networks actually used in India’s future — what careers does this lead to? Neural networks power almost every high-growth AI career in India right now. The National Programme on AI (NPAI) under NITI Aayog and the IndiaAI Mission are actively creating demand for engineers who can build and deploy neural network models. Roles include Machine Learning Engineer, Computer Vision Specialist, NLP Engineer, and AI Research Scientist — companies hiring include Infosys, TCS, Flipkart, ISRO, and dozens of AI-first startups. The CBSE AI subject you are studying right now is specifically designed to give you a head start in this direction.

Section 14: Action Plan

Exam Practice Checklist

- [ ] Can you draw and label a neural network with 3 layers from memory?

- [ ] Can you explain the 4-step training process (forward pass → loss → backpropagation → weight update)?

- [ ] Can you define: weight, bias, activation function, epoch — in one sentence each?

- [ ] Can you write a comparison between ANN and CNN with at least 3 differences?

- [ ] Have you completed at least one TensorFlow Playground experiment and noted your observations?

- [ ] Can you name two India-relevant applications of neural networks for a real-world example answer?

- [ ] (Class 12 only) Can you state the marks weightage for Unit 6 (8 theory marks) and name all five sub-units?

Interactive Tool

TensorFlow Playground — playground.tensorflow.org

The CBSE PDF explicitly recommends this tool for both Class 10 and Class 12. It runs in any browser, requires no login, and lets you train a real neural network visually. Spend 20 minutes here and hidden layers, decision boundaries, and training epochs will click immediately. This is more useful than re-reading notes.

Project Idea: Handwritten Digit Recogniser (Class 12)

Build a neural network that can identify handwritten digits (0–9) using the MNIST dataset — 70,000 labelled images available free on Kaggle.

3-step outline:

- Load the MNIST dataset using TensorFlow/Keras and normalise the pixel values (divide by 255)

- Build a simple ANN: input layer (784 nodes) → hidden layer (128 nodes, ReLU) → output layer (10 nodes, Softmax)

- Train for 5 epochs, evaluate accuracy on test data, and display 5 correct and 5 incorrect predictions with the model’s confidence score

This project directly maps to Class 12 Unit 6 and makes an excellent capstone project base. (For Advanced Learners — not evaluated in theory or practicals.)