You have probably noticed that the delivery fee on Zomato is not the same at 8 PM on a rainy Friday as it is at 3 PM on a Tuesday. That difference is not a coincidence — it is an algorithm. And the ethics of how that algorithm works is exactly the kind of question your CBSE AI exam is designed to ask.

What You Will Learn

- How Zomato uses AI to set prices dynamically and what factors it considers

- How to apply the CBSE 4-frame ethics analysis to a commercial AI system

- What “platform transparency” means and why delivery partners are a critical stakeholder

How Zomato’s Pricing Algorithm Works

Zomato is a food delivery platform that connects customers, restaurants, and delivery partners. The charges you see on Zomato, such as delivery fees, platform fees, and, at times, surge charges, are not set by hand by a billing team. They are generated in real time by machine learning models that process signals including:

- Demand density: How many orders are being placed in your area right now

- Delivery partner availability: How many active delivery partners are within range

- Weather conditions: Rain, extreme heat, and other factors that reduce partner availability while increasing order volume

- Time of day and day of week: Historical patterns that predict when demand will spike

- Restaurant capacity: Whether the restaurant has a high current order queue

- Distance and estimated traffic: For delivery time and cost estimation

When demand is high and supply (available delivery partners) is low, the algorithm raises the delivery fee. This is called surge pricing, a form of dynamic pricing. Ola and Uber use a similar mechanism for rides.
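The supply-and-demand logic described above can be sketched in a few lines. This is a deliberately simplified, rule-based illustration, not Zomato's actual model, which has not been published and which the article describes as machine learning. The function names, the threshold, the sensitivity value and the 3× ceiling are all hypothetical choices made for illustration.

```python
def surge_multiplier(active_orders: int, available_partners: int,
                     threshold: float = 1.0, sensitivity: float = 0.5,
                     max_multiplier: float = 3.0) -> float:
    """Hypothetical rule: the fee multiplier grows as orders outnumber partners."""
    if available_partners <= 0:
        # No partners nearby at all: charge the ceiling.
        return max_multiplier
    ratio = active_orders / available_partners  # demand-to-supply ratio
    if ratio <= threshold:
        # Enough partners for current demand: no surge.
        return 1.0
    # Above the threshold, the multiplier rises linearly with the gap.
    multiplier = 1.0 + sensitivity * (ratio - threshold)
    return min(multiplier, max_multiplier)


def delivery_fee(base_fee: float, active_orders: int, available_partners: int) -> float:
    """Apply the hypothetical surge multiplier to a base delivery fee."""
    return round(base_fee * surge_multiplier(active_orders, available_partners), 2)


print(delivery_fee(25, 40, 50))    # quiet afternoon: 25.0
print(delivery_fee(25, 120, 30))   # rainy evening: 62.5
```

With a ₹25 base fee, a quiet afternoon (40 orders, 50 partners) leaves the fee at ₹25, while a rainy evening (120 orders, 30 partners) pushes it to ₹62.50. Notice that the only inputs are order and partner counts: nothing about the customer's income enters the calculation.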

The critical question for AI ethics is not whether this pricing is technically impressive. It is whether it is fair — and to whom.

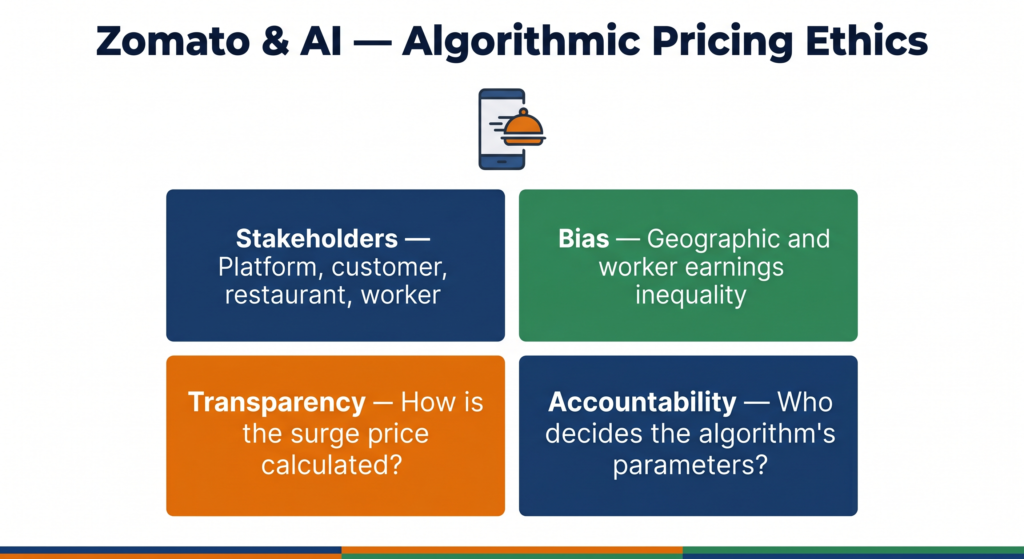

The CBSE 4-Frame Ethics Analysis

Frame 1 — Stakeholders: Who Is Affected?

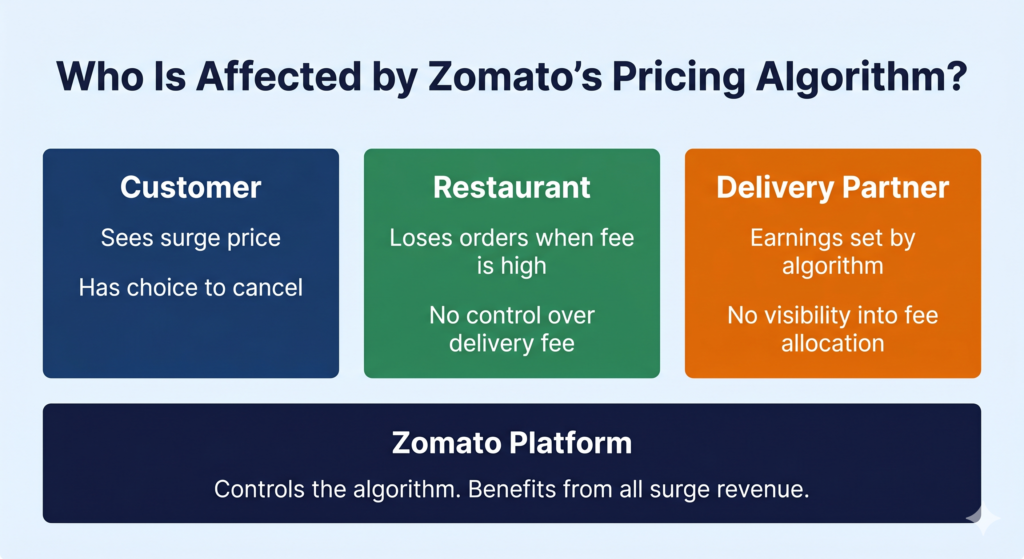

Customers are the most visible stakeholder. They pay the delivery fee and see the price change, but they have a choice. They can close the app, cook at home, or order from a closer restaurant with a lower delivery fee.

Restaurants are affected indirectly. When delivery fees are high, some customers decide not to order — which reduces a restaurant’s revenue. Restaurants have no control over Zomato’s delivery fee and no visibility into how the algorithm sets it.

Delivery partners are the most ethically complex stakeholder group. Zomato's surge pricing is designed in part to attract more delivery partners onto the platform during high-demand periods by offering higher earnings. However, nothing guarantees that a partner's earnings rise in step with the surge fee the customer pays. Zomato takes a percentage, and the delivery partner's income depends on how Zomato's algorithm allocates the surplus. That allocation is not public.

Zomato (the platform) earns revenue from the delivery fee, the restaurant commission, and in some cases a platform fee. Dynamic pricing increases revenue during peak periods. The platform benefits from surge pricing regardless of whether delivery partners’ earnings increase proportionately.

The CBSE question embedded here: When a platform designs a pricing algorithm, whose interests does it optimise for? The answer shapes every ethical conclusion that follows.

Frame 2 — Bias: Does the System Treat Everyone Equally?

Algorithmic pricing systems create two types of inequality that matter for CBSE ethics analysis.

Geographic bias. Surge pricing affects customers in dense urban areas more frequently and more severely than customers in lower-density areas, because urban areas have more volatile demand-supply gaps. Some of the customers paying the highest surge fees live in premium urban neighbourhoods and have the disposable income to absorb them. Customers in lower-income dense areas, where delivery partner density may also be lower, can face equally high surge fees with far less ability to absorb the cost. The algorithm does not know or account for the income profile of the customer. It sees only demand and supply.

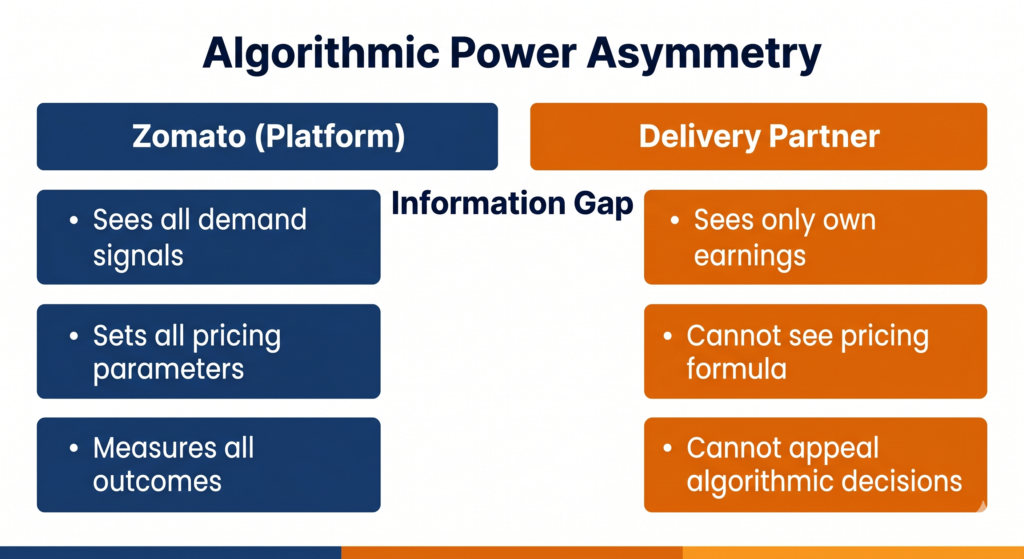

Worker earnings opacity. Delivery partners are categorised as independent contractors, not employees. They earn a per-order fee set partly by the algorithm. But the full pricing model — what percentage of the delivery fee goes to the partner, how surge multipliers are calculated, and how order allocation decisions are made — is not transparent to delivery partners. A delivery partner cannot independently verify that the algorithm is distributing surge earnings fairly.

This is a form of algorithmic power asymmetry: one party (Zomato) can see the model and all of its outputs, while the parties most affected by it (delivery partners) can observe only their own individual earnings.

Frame 3 — Transparency: Can You Understand How the Price Was Set?

When you open Zomato and see a ₹89 delivery fee instead of the usual ₹25, what information do you have?

You see the price. You do not see:

- What specific factors triggered the higher fee

- Whether the surge is applied uniformly to your area or personalised to your account

- Whether a loyal user or a premium subscriber pays a different surge than a new user

- How the extra ₹64 is divided between Zomato and the delivery partner

The absence of this information is not neutral. It prevents customers from making fully informed decisions and prevents delivery partners from evaluating whether they are being paid fairly.

There is a separate and significant transparency concern related to personalised pricing. Some evidence from international food delivery platforms suggests that algorithms test different price points for different users based on their past behaviour, device type, or account history. Zomato has not confirmed doing this for delivery fees in India — but the opacity of the system means customers cannot verify whether they are seeing the same price as anyone else.

For your CBSE exam: transparency in a commercial AI system does not just mean explaining the technology. It means giving stakeholders enough information to understand the decisions that affect them.

Frame 4 — Accountability: When Something Goes Wrong, Who Is Responsible?

Consider two scenarios that raise accountability questions:

Scenario A — Pricing during a crisis. During heavy monsoon flooding in Mumbai in 2021, Zomato delivery fees surged significantly in affected areas. Some customers who desperately needed food delivery — including elderly residents and people without cooking facilities — paid very high surge prices. Was it ethical for the algorithm to treat a crisis as a demand event and raise prices? Or should the system have a hard cap on surge pricing during declared emergency situations?

Scenario B: Delivery partner earnings manipulation. A delivery partner notices that the algorithm assigns him fewer orders after he rejects two orders in a row. His earnings drop for three days. He cannot find out from Zomato whether this is intentional, a technical anomaly, or his imagination. He has no one to appeal to.

In both scenarios, the algorithm made a decision that had real consequences for a human being — and there was no mechanism to understand, challenge, or appeal that decision. This is the accountability gap.

Zomato, like most platform companies, maintains that algorithmic pricing is a neutral market mechanism — supply and demand, no human bias. The ethical counterargument is that designing the algorithm is a human decision, setting the parameters is a human decision, and choosing not to include a cap or an appeals mechanism is a human decision. Neutrality is itself a choice.

The Delivery Partner Question — A Special Ethics Focus

CBSE has specifically included the impact of AI on workers and gig economy participants in its ethics curriculum. The delivery partner situation at Zomato is the clearest Indian example of this.

Zomato’s delivery partners are classified as independent contractors. This means:

- They are not entitled to minimum wage protections

- They have no guaranteed working hours

- Their earnings are entirely determined by the algorithm’s order allocation and pricing decisions

- They have no collective bargaining rights

The algorithm determines how many orders they receive, in what sequence, at what fee. If the algorithm decides — for whatever reason — to reduce the number of orders assigned to a particular partner, that partner’s income falls. They cannot know why. They cannot appeal.

India’s NITI Aayog published a report in 2022 on the social security of gig and platform workers, acknowledging exactly this problem: workers whose livelihoods depend on algorithmic decisions have no transparency into those decisions and no recourse when those decisions are unfavourable.

The ethical design question is not whether to use algorithms for order allocation — at Zomato’s scale, manual allocation is impossible. It is whether the algorithm should be required to be explainable to the people whose incomes it controls, and whether those people should have a right to challenge its decisions.

What Could Have Been Done Differently?

Introduce a price transparency screen. Before a customer confirms an order with surge pricing active, Zomato could show a breakdown: standard fee, surge component, and the reason for the surge (e.g., “high demand in your area right now”). This does not remove surge pricing — it simply makes the decision visible. Customers can then choose whether to proceed.
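A minimal sketch of the breakdown such a screen could display, using the ₹25 standard fee and ₹89 surged fee from the Frame 3 example. The FeeBreakdown class, its field names and the reason text are hypothetical; Zomato does not publish an interface like this.

```python
from dataclasses import dataclass


@dataclass
class FeeBreakdown:
    """Hypothetical breakdown a checkout screen could show (amounts in rupees)."""
    standard_fee: int      # fee charged when no surge is active
    surge_component: int   # extra amount added by the surge
    reason: str            # plain-language reason for the surge

    @property
    def total(self) -> int:
        return self.standard_fee + self.surge_component

    def render(self) -> str:
        lines = [
            f"Standard delivery fee: ₹{self.standard_fee}",
            f"Surge component:       ₹{self.surge_component}",
            f"Total delivery fee:    ₹{self.total}",
        ]
        # Only explain a surge when one is actually being charged.
        if self.surge_component > 0:
            lines.append(f"Why: {self.reason}")
        return "\n".join(lines)


print(FeeBreakdown(25, 64, "high demand in your area right now").render())
```

The design choice worth noting is that the surge component is shown as its own line rather than folded into the total, so the customer sees exactly how much of the ₹89 is due to the surge.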

Publish a surge earnings policy for delivery partners. Zomato should publicly state what percentage of the surge fee goes to the delivery partner. If the standard delivery fee earns a partner ₹35 and a 3× surge is charged, does the partner earn ₹105 or ₹35? The answer determines whether surge pricing is genuinely designed to attract partners or primarily to increase platform revenue.
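The ₹105-or-₹35 question comes down to a single unpublished number: the share of the surge that reaches the partner. The sketch below makes that number an explicit parameter. It assumes, as the example above does, that the 3× multiplier applies to the partner's ₹35 base pay; the function name and its surge_share parameter are hypothetical.

```python
def partner_pay(base_pay: float, multiplier: float, surge_share: float) -> float:
    """Hypothetical partner payout for one order.

    base_pay:    what the partner earns at the standard fee (e.g. ₹35)
    multiplier:  surge multiplier charged to the customer (e.g. 3.0)
    surge_share: fraction of the surge extra passed on to the partner, 0 to 1
    """
    if multiplier < 1.0:
        raise ValueError("multiplier must be at least 1")
    if not 0.0 <= surge_share <= 1.0:
        raise ValueError("surge_share must be between 0 and 1")
    extra = base_pay * (multiplier - 1.0)  # surge amount on top of base pay
    return base_pay + surge_share * extra


for share in (1.0, 0.5, 0.0):
    print(share, partner_pay(35, 3.0, share))
```

A share of 1.0 gives ₹105, a share of 0.0 gives ₹35, and 0.5 lands at ₹70. Publishing the value of this one parameter would answer the question of whether surge pricing mainly rewards partners or the platform.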

Create a hard cap for emergency conditions. During natural disasters, declared public health emergencies, or curfews, the algorithm’s surge pricing should be capped at a fixed multiple. This is an ethical design choice that protects vulnerable customers during their highest need — and it does not prevent the platform from operating.
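As a rough sketch, the cap can sit as a final step after whatever model produces the raw multiplier, so the pricing model itself does not need to change. The cap values (3.0 normally, 1.5 during an emergency) are hypothetical placeholders, and the emergency_declared flag is assumed to come from an external declaration of emergency rather than from the demand data.

```python
def capped_multiplier(raw_multiplier: float, emergency_declared: bool,
                      normal_cap: float = 3.0, emergency_cap: float = 1.5) -> float:
    """Clamp a surge multiplier, using a tighter cap during declared emergencies.

    The cap values are hypothetical placeholders, not figures from any regulation.
    """
    cap = emergency_cap if emergency_declared else normal_cap
    # Never go below the standard fee (1.0) and never above the active cap.
    return min(max(raw_multiplier, 1.0), cap)


base_fee = 25
print(base_fee * capped_multiplier(2.5, emergency_declared=False))  # 62.5
print(base_fee * capped_multiplier(2.5, emergency_declared=True))   # 37.5
```

The same raw 2.5× surge produces a ₹62.50 fee on a normal day but only ₹37.50 during a declared emergency, while the platform keeps operating.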

Quick Revision Box

| Term | Meaning |
|---|---|
| Dynamic pricing | Prices set in real time by an algorithm based on demand and supply signals |
| Surge pricing | A form of dynamic pricing where prices increase during high-demand periods |
| Algorithmic power asymmetry | One party has full visibility into an algorithm's decisions while affected parties do not |
| Independent contractor | A worker classified outside employment law, whose earnings depend entirely on platform decisions |
| Price transparency | The ability of users and workers to understand how prices or fees are calculated |

Practice Questions

2-mark question: Identify two stakeholders in Zomato’s pricing algorithm who are affected differently, and explain one reason why their interests may conflict.

Model answer: Zomato (the platform) and delivery partners are two stakeholders with potentially conflicting interests. Zomato benefits from higher delivery fees during surge periods, as it increases platform revenue. Delivery partners benefit only if the surge fee is shared with them proportionately — which is not guaranteed. The platform’s interest in maximising revenue and the delivery partner’s interest in fair earnings may conflict depending on how the surplus is allocated.

MCQ: Zomato’s algorithm sets higher delivery fees during peak hours. This is an example of:

(a) Supervised learning used to classify orders as fraudulent or legitimate

(b) Dynamic pricing using real-time demand and supply data

(c) Natural language processing applied to customer reviews

(d) Reinforcement learning used to train the delivery partner app

Answer: (b) — Dynamic pricing uses real-time signals about demand (order volume) and supply (available delivery partners) to set prices algorithmically.

Frequently Asked Questions

Q1. Is Zomato’s surge pricing illegal in India?

No, dynamic pricing in commercial services is not illegal in India. The Consumer Protection Act 2019 prohibits unfair trade practices and misleading pricing, but surge pricing that is clearly disclosed to the customer before purchase is generally not treated as misleading under these provisions. However, legal permissibility and ethical justification are different questions. Something can be legal and still raise ethical concerns, particularly around transparency, fairness to workers, and pricing during emergencies. Your CBSE exam will ask you to evaluate the ethics of a system, not just its legality.

Q2. How is Zomato’s algorithmic pricing different from a shop owner raising prices on a rainy day?

The core difference is scale and opacity. A shop owner raising prices on a rainy day is visible, limited in reach, and socially accountable — customers can complain publicly, regulators can observe it, and the community can respond. Zomato’s algorithm applies to millions of transactions simultaneously, invisibly, with no human making each individual decision. No single person decided to charge you more today — a model did, based on criteria you cannot see. The scale at which algorithmic pricing operates, and the lack of visibility into how it works, creates ethical challenges that simply do not exist for a single shop owner’s pricing decision.

Q3. This case covers Class 10, 11, and 12. What should I focus on depending on my grade?

For Class 10, focus on the 4-frame analysis — stakeholders, bias, transparency, accountability — and practice identifying each frame in the Zomato case. For Class 11, extend your analysis to include the gig economy and worker rights dimension, and connect the surge pricing mechanism to AI concepts like classification and risk scoring. For Class 12, the most important addition is the ethical design question: what specific changes to the algorithm’s design — not just its output — would make the system more fair? Class 12 CBSE ethics questions expect you to propose solutions, not just identify problems.